Table of contents

- 1. (BRIEF) INTRODUCTION

- 2. FORMALIZATION OF SCENARIOS AND GENERATION OF THE TRAINING SET

- 2.1 Basic notions

- 2.2 Main features of algorithms for data simulation

- 2.3 Historical model parameterization

- 2.4 Mutation model parameterization (microsatellite and DNA sequence loci)

- 2.5 Prior distributions

- 2.6 Summary statistics as components of the feature vector

- 2.7 Generating the training set

- 3.1 Addition of linear combinations of summary statistics to the vector feature

- 5. RUNNING (EXAMPLE) DATASET TREATMENTS USING THE GRAPHIC USER INTERFACE (GUI)

- 6. PERFORMING RANDOM FOREST ANALYSES

- 7. KEY FILES

USER MANUAL for DIYABC Random Forest v1.0

- 23th of March 2021 -

François-David Collin 1,*, Ghislain Durif 1,*, Louis Raynal 1, Eric Lombaert 2, Mathieu Gautier 3, Renaud Vitalis 3, Jean-Michel Marin 1,&, Arnaud Estoup 3,&

1 IMAG, Univ Montpellier, CNRS, UMR 5149, Montpellier, France

2 ISA, INRAE, CNRS, Univ Côte d’Azur, Sophia Antipolis, France

3 CBGP, Univ Montpellier, CIRAD, INRAE, Institut Agro, IRD, Montpellier, France

* Equal contribution (F-D.C.: computation part of the program; S.D.: interface part of the program)

& These authors are joint senior authors on this work

Corresponding authors:

Arnaud Estoup. E-mail: arnaud.estoup@.inrae.fr

Francois-David.Collin: Francois-David.Collin@umontpellier.fr

Ghislain Durif: ghislain.durif@umontpellier.fr

CONTENTS

Warning: You might want to activate the option “Navigation panel” (accessible through the “Display” tab) after opening the present manual file under Word. This navigation panel, visible on the left size of the document once activated, will allow you reaching directly through simple click actions the different sections and sub-sections described below. You can navigate just by directly clicking on the titles of the content below when using the pdf format of the present document.

1. (BRIEF) INTRODUCTION

1.1 General context

Simulation-based methods such as Approximate Bayesian Computation (ABC) are well adapted to the analysis of complex models of populations and species genetic history (Beaumont 2010). In this context, supervised machine learning (SML) methods provide attractive statistical solutions to conduct efficient inferences about both scenario choice and parameter estimation (Schrider & Kern 2018). The Random Forest methodology (RF) is a powerful ensemble of SML algorithms used for both classification and/or regression problems (Breiman 2001). RF allows conducting inferences at a lower computational cost than ABC, without preliminary selection of the relevant components of the ABC summary statistics, and bypassing the derivation of ABC tolerance levels (Pudlo et al. 2018; Raynal et al. 2019). We have implemented a set of RF algorithms to process inferences using simulated datasets generated from an extended version of the population genetic simulator implemented in DIYABC v2.1.0 (Cornuet et al. 2014). The resulting computer package, named DIYABC Random Forest v1.0, integrates two functionalities into a user-friendly interface: the simulation under custom evolutionary scenarios of different types of molecular data (microsatellites, DNA sequences or SNPs – including traditional IndSeq and more recent PoolSeq SNP data) and RF treatments including statistical tools to evaluate the power and accuracy of inferences (Collin et al. 2020). Because of the properties inherent of the implemented RF methods and the large feature vector (including various summary statistics and their linear combinations) available for SNP data, DIYABC Random Forest v1.0 can efficiently contribute to the analysis of large-size SNP (and other molecular markers) datasets to make inferences about complex population genetic histories.

1.2 How to cite the program DIYABC Random Forest v1.0

Collin F-D, Durif G, Raynal L, Lombaert E, Gautier M, Vitalis R, Marin J-M, Estoup A (2021) DIYABC Random Forest v1.0: extending approximate Bayesian computation with supervised machine learning to infer demographic history from genetic polymorphisms. Molecular Ecology Resources. DOI: 10.22541/au.159480722.26357192

1.3 Web site

You can get from this github site the executable files for different operating systems, the latest version of this manual document, as well as examples of DIYABC Random Forest analyses for different types of markers.

1.4. System requirements, installing and launching the program

-

The software DIYABC Random Forest (hereafter DIYABC-RF) v1.0 is composed of three parts: the dataset simulator, the Random Forest inference engine and the graphical user interface. The whole is packaged as a standalone and user-friendly graphical application named DIYABC-RF GUI and available at https://diyabc.github.io. The different developer and user manuals for each component of the software are available on the same website. DIYABC-RF is a multithreaded software which can be run on three operating systems: GNU/Linux, Microsoft Windows and MacOS. The program can be used through a modern and user-friendly graphical interface designed as an R shiny application (Chang et al. 2019). For a fluid and simplified user experience, this interface is available through a standalone application, which does not require installing R or any dependencies and hence can be used independently. The application is also implemented in an R package providing a standard shiny web application (with the same graphical interface) that can be run locally as any shiny application, or hosted as a web service to provide a DIYABC-RF server for multiple users.

-

To install and run the program, please check the instructions available on the website https://diyabc.github.io/

-

Minimum 4GB of RAM; 6GB of RAM recommended

-

From 1 to 10 GB free disk space for each DIYABC-RF project depending on the project configuration and the number of simulated datasets recorded in the training set file (reftableRF.bin file).

1.5 Acknowledgements

We thank Pierre Pudlo for useful discussions and Jean-Marie Cornuet for computer code expertise at the onset of the ABC Random Forest project. We also thank several “beta-users”, especially XXXNAMEXXX, who tested the software DIYABC Random Forest v1.0 with their data. This work was supported by funds from the French Agence National pour la Recherche (ANR projects SWING and GANDHI), the INRAE scientific division SPE (AAP-SPE 2016), and the LabEx NUMEV (NUMEV, ANR10-LABX-20).

2. FORMALIZATION OF SCENARIOS AND GENERATION OF THE TRAINING SET

2.1 Basic notions

Before processing Random Forest analyses, one need to generate a training set which corresponds to the so called reference table in a standard ABC framework (Cornuet et al. 2014 and see the associated program DIYABC v2.1.0). The datasets composing the training set can be simulated under different models (hereafter referred to as scenarios) and samples (i.e. nb of markers and sampled individuals) configurations, using parameter values drawn from prior distributions. Each resulting dataset is summarized using a set of descriptive statistics. Scenarios and prior distributions are formalized in the main pipeline of the software DIYABC-RF and summary statistics are computed using the “Training set simulation” module of the main pipeline of DIYABC-RF, which essentially corresponds to an extended version of the population genetics simulator implemented in DIYABC v2.1.0 (Cornuet et al. 2014). As in the latter program, DIYABC-RF allows considering complex population histories including any combination of population divergence events, symmetrical or asymmetrical admixture events (but not any continuous gene flow between populations) and changes in past population size, with population samples potentially collected at different times. Statistical analysis based on Random Forest algorithms of an observed dataset using a given training set are computed using the “Random Forest Analysis” module of the main pipeline of DIYABC-RF.

2.2 Main features of algorithms for data simulation

The ABC part of DIYABC-RF is a simulation-based method. Data simulation is based on the Wright-Fisher model. It consists in generating the genealogy of all sampled genes until their most recent common ancestor using (backward in time) coalescence theory. This begins by randomly drawing a complete set of parameters from their own prior distributions and that satisfy all imposed conditions. Then, once events have been ordered by increasing times, a sequence of actions is constructed. If there is more than one locus, the same sequence of actions is used for all successive loci.

Possible actions fall into four categories:

- adding a sample to a population = Add as many gene lineages to the population as there are genes in the sample.

- merge two populations = Move the lineages of the second population into the first population.

- split between two populations = Distribute the lineages of the admixed population among the two parental populations according to the admixture rate.

- coalesce and mutate lineages within a population = There are two possibilities here, depending on whether the population is terminal or not. We call terminal the population including the most recent common ancestor of the whole genealogy. In a terminal population, coalescences and mutations stop when the MRCA is reached whereas in a non-terminal population, coalescence and mutations stop when the upper (most ancient) limit is reached. In the latter case, coalescences can stop before the upper limit is reached because there remains a single lineage, but this single remaining lineage can still mutate.

Two different coalescence algorithms are implemented: a generation by generation simulation or a continuous time simulation. The choice, automatically performed by the program, is based on an empirical criterion which ensures that the continuous time algorithm is chosen whenever it is faster than generation by generation while keeping the relative error on the coalescence rate below 5% (see Cornuet et al. 2008 for a description of this criterion). In any case, a coalescent tree is generated over all sampled genes.

Then the mutational simulation process diverges depending on the type of markers: for microsatellite or DNA sequence loci, mutations are distributed over the branches according to a Poisson process whereas for SNP loci, one mutation is applied to a single branch of the coalescent tree, this branch being drawn at random with probability proportional to its length. Eventually, starting from an ancestral allelic state (established as explained below), all allelic states of the genealogy are deduced forward in time according to the mutation process. For microsatellite loci, the ancestral allelic state is taken at random in the stationary distribution of the mutation model (not considering potential single nucleotide indel mutations). For DNA sequence loci, the procedure is slightly more complicated. First, the total number of mutations over the entire tree is evaluated. Then according to the proportion of constant sites and the gamma distribution of individual site mutation rates, the number and position of mutated sites are generated. Finally, these mutated sites are given ‘A’, ‘T’, ‘G’ or ‘C’ states according to the selected mutation model. For SNP loci, the ancestral allelic state is arbitrarily set to 0 and it becomes equal to 1 after mutation.

Each category of loci has its own coalescence rate deduced from male and female effective population sizes. In order to combine different categories (e.g. autosomal and mitochondrial), we have to take into account the relationships among the corresponding effective population sizes. This can be achieved by linking the different effective population sizes to the effective number of males (NM) and females (NF) through the sum NT=NF+NM and the ratio r=NM/(NF+NM). We use the following formulae for the probability of coalescence (p) of two lineages within this population:

Autosomal diploid loci:

\[p = \frac{1}{8r(1 - r)N_{T}}\]Autosomal haploid loci:

\[p = \frac{1}{4r(1 - r)N_{T}}\]X-linked loci / haplo-diploid loci:

\[p = \frac{1 + r}{9r(1 - r)N_{T}}\]Y-linked loci:

\[p = \frac{1}{rN_{T}}\]Mitochondrial loci:

\[p = \frac{1}{(1 - r)N_{T}}\]Users have to provide a (total) effective size NT (on which inferences will be made) and a sex-ratio r. If no sex ratio is provided, the default value of r is taken as 0.5.

2.3 Historical model parameterization

The evolutionary scenario, which is characterized by the historical

model, can be described in a dedicated panel of the interface of the

program as a succession in time of “events” and “inter event periods”.

In the current version of the program, we consider four categories of

events: population divergence, discrete change of effective population

size, admixture and sampling (the last one allow considering samples

taken at different times). Between two successive events affecting a

population, we assume that populations evolve independently (e.g.

without migration) and with a fixed effective size. The usual parameters

of the historical model are the times of occurrence of the various

events (counted in number of generations), the effective sizes of

populations and the admixture rates. When writing the scenario, events

have to be coded sequentially backward in time (see section 2.3.2 for

examples). Although this choice may not be natural at first sight, it is

coherent with coalescence theory on which are based all data simulations

in the program. For that reason, the keywords for a divergence or an

admixture event are merge and split, respectively. Two keywords

varNe and sample correspond to a discrete change in effective

population size and a gene sampling within a given population,

respectively. A scenario takes the form of a succession of lines (one

line per event), each line starting with the time of the event, then the

nature of the event, and ending with several other data depending on the

nature of the event. Following is the syntax used for each category of

event:

Population sample

$\langle time\rangle$ sample $\langle pop\rangle$

Where $\langle time\rangle$ is the time (always counted in number of generations) at which the sample was collected and $\langle pop\rangle$ is the population number from which is taken the sample. It is worth stressing here that samples are considered in the same order as they appear in the data file. The number of lines will thus be exactly equal to the number of samples in the datafile.

Population size variation

$\langle time\rangle$ varNe $\langle pop\rangle$ $\langle Ne\rangle$

From time $\langle time\rangle$, looking backward in time, population

$\langle pop\rangle$ will have an effective size $\langle Ne\rangle$.

Population divergence

$\langle time\rangle$ merge $\langle pop1\rangle$

$\langle pop0\rangle$

At time $\langle time\rangle$, looking backward in time, population

$\langle pop0\rangle$ “merges” with population $\langle pop1\rangle$.

Hereafter, only $\langle pop1\rangle$ “remains”.

Population admixture

$\langle time\rangle$ split $\langle pop0\rangle$

$\langle pop1\rangle$ $\langle pop2\rangle$ $\langle rate\rangle$

At time $\langle time\rangle$, looking backward in time, population

$\langle pop0\rangle$ “splits” between populations $\langle pop1\rangle$

and $\langle pop2\rangle$. A gene lineage from population

$\langle pop0\rangle$ joins population $\langle pop1\rangle$

(respectively $\langle pop2\rangle$) with probability

$\langle rate\rangle$ (respectively 1-$\langle rate\rangle$). Hereafter,

only $\langle pop1\rangle$ and $\langle pop2\rangle$ “remain”.

Note that one needs to write a first line giving the effective sizes

of the sampled populations before the first event described, looking

backward in time. Expressions between arrows, other than population

numbers, can be either a numeric value (e.g. 25) or a character string

(e.g. t0). In the latter case, it is considered as a parameter of the

model. The program offers the possibility to add or remove scenarios, by

just clicking on the corresponding buttons. The usual shortcuts (e.g.

CTRL+C, CTRL+V and CTRL+X) can be used to edit the different scenarios.

Some or all parameters can be in common among scenarios.

2.3.1 Key notes

-

There are two ways of giving a fixed value to effective population sizes, times and admixture rates. Either the fixed value appears as a numeric value in the scenario windows or it is given as a string value like any parameter. In the latter case, one gives this parameter a fixed value by choosing a Uniform distribution and setting the minimum and maximum to that value in the prior setting of the corresponding interface panel.

-

All expressions must be separated by at least one space.

-

All expressions relative to parameters can include sums or differences of parameters of the same type (e.g. divergence times or effective population size but not a mixture of both). For instance, it is possible to write:

t0 merge 2 3

t0+t1 merge 1 2

This means thatt1is the time elapsed between the two merge events. Note that one cannot mix a parameter and a numeric value (e.g.t1+150will result in an error). This can be done by writingt1+t2and fixingt2by choosing a uniform distribution with lower and upper bounds both equal to 150 in the interface. -

Time is always given in generations. Since we look backward, time increases towards past.

-

Negative times are allowed (e.g. the example given in section 2.3.2), but not recommended.

-

Population numbers must be consecutive natural integers starting at 1. The number of population can exceed the number of samples and vice versa: in other words, unsampled populations can be considered in the scenario on one hand, and the same population can be sampled more than once on the other hand.

-

Multi-furcating population trees can be considered, by writing several divergence events occurring at the same time. However, one has to be careful to the order of the

mergeevents. For instance, the following piece of scenario will fail:

100 merge 1 2

100 merge 2 3

This is because, after the first line, population 2, which has merged with population 1, does not “exist” anymore (the remaining population is population 1). So, it cannot receive lineages of population 3 as it should as a result of the second line. The correct ways are either to put line 2 before line 1, or to change line 2 to:100 merge 1 3. -

Since times of events can be parameters, the order of events can change according to the values taken by the time parameters. In any case, before simulating a dataset, the program sorts out events by increasing times. Note that sorting out events by increasing times can only be done when all time values are known, i.e. when simulating datasets. When checking scenarios in the interface, all time values are not yet defined, so that when visualizing a scenario, events are represented in the same order as they appear in the window used to define the scenario. If two or more events occur at the same time, the order is that of the scenario as written by the user.

-

Most scenarios begin with sampling events. We then need to know the effective size of the populations to perform the simulation of coalescences until the next event concerning each population. We decided to provide the effective size (first line) and the sampling description (following lines) on distinct lines.

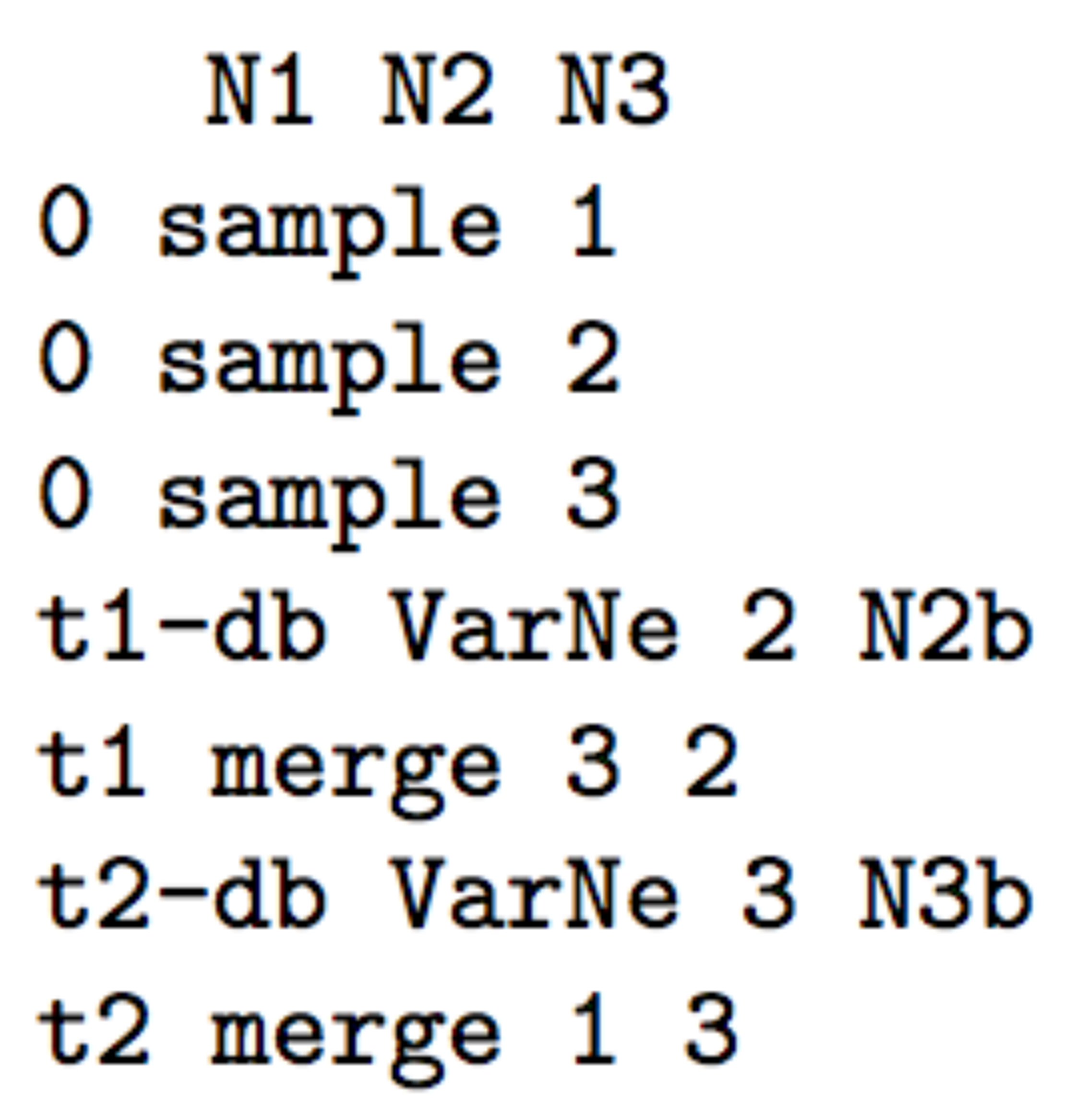

2.3.2 Examples

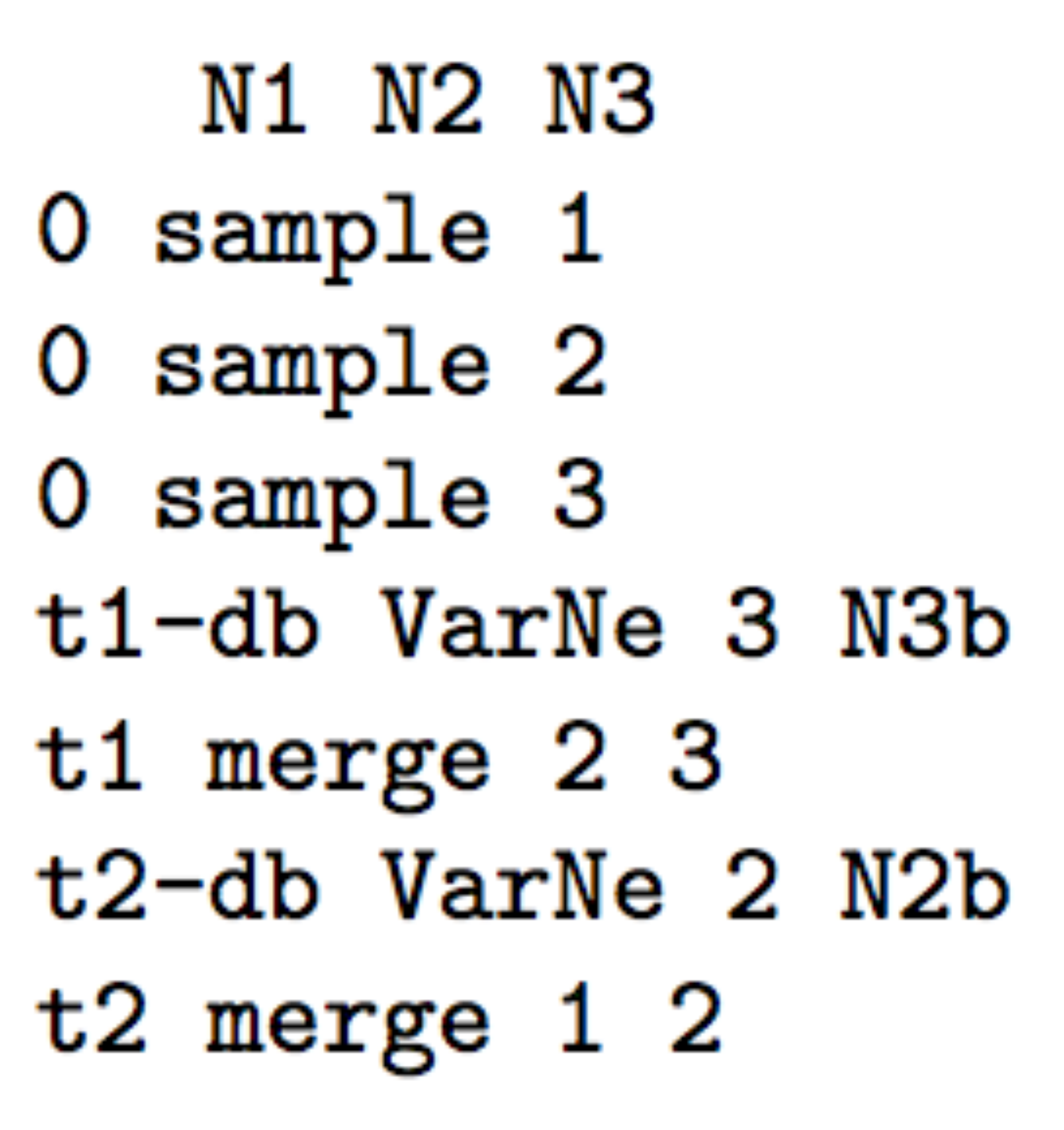

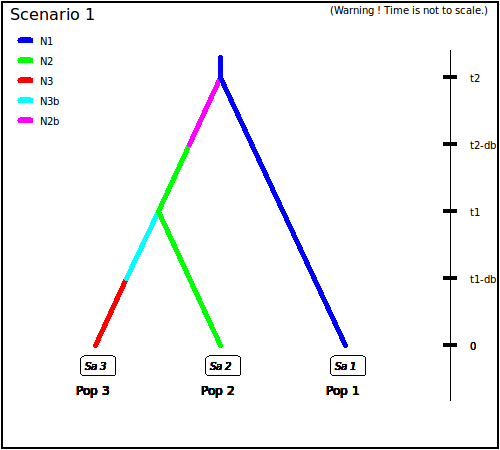

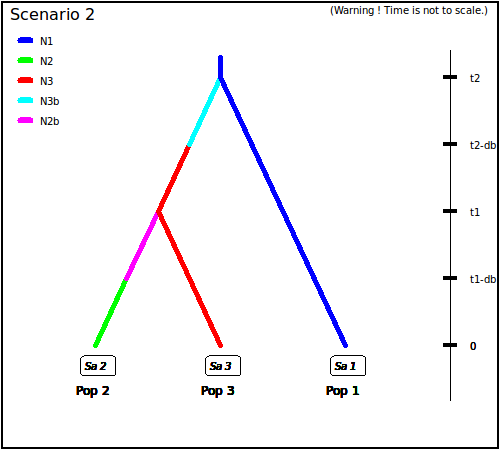

Below are some usual scenarios with increasing complexity. Each scenario is coded (as in the corresponding interface panel of the program) on the left side and a graphic representation (given by DIYABC-RF) is printed on the right side

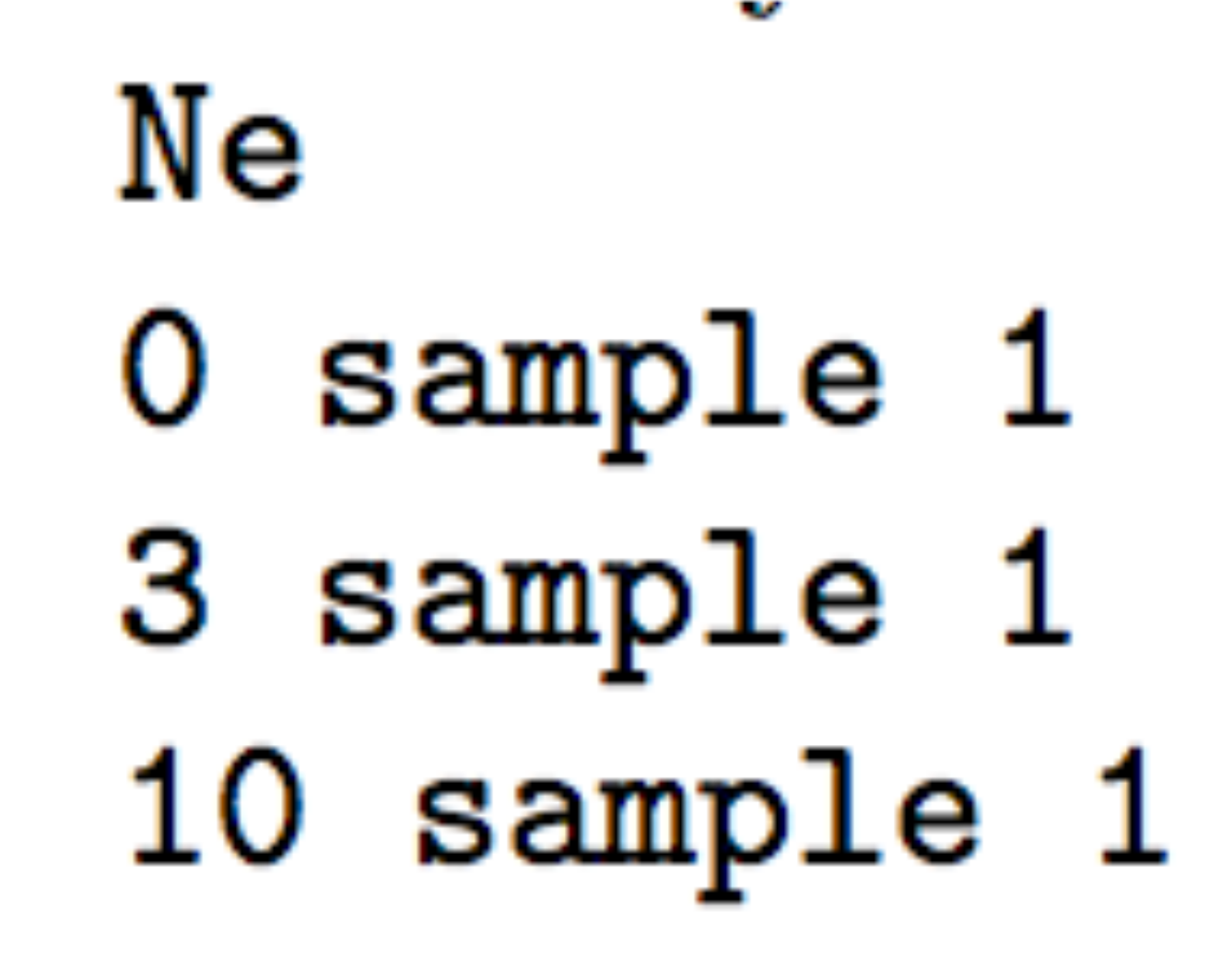

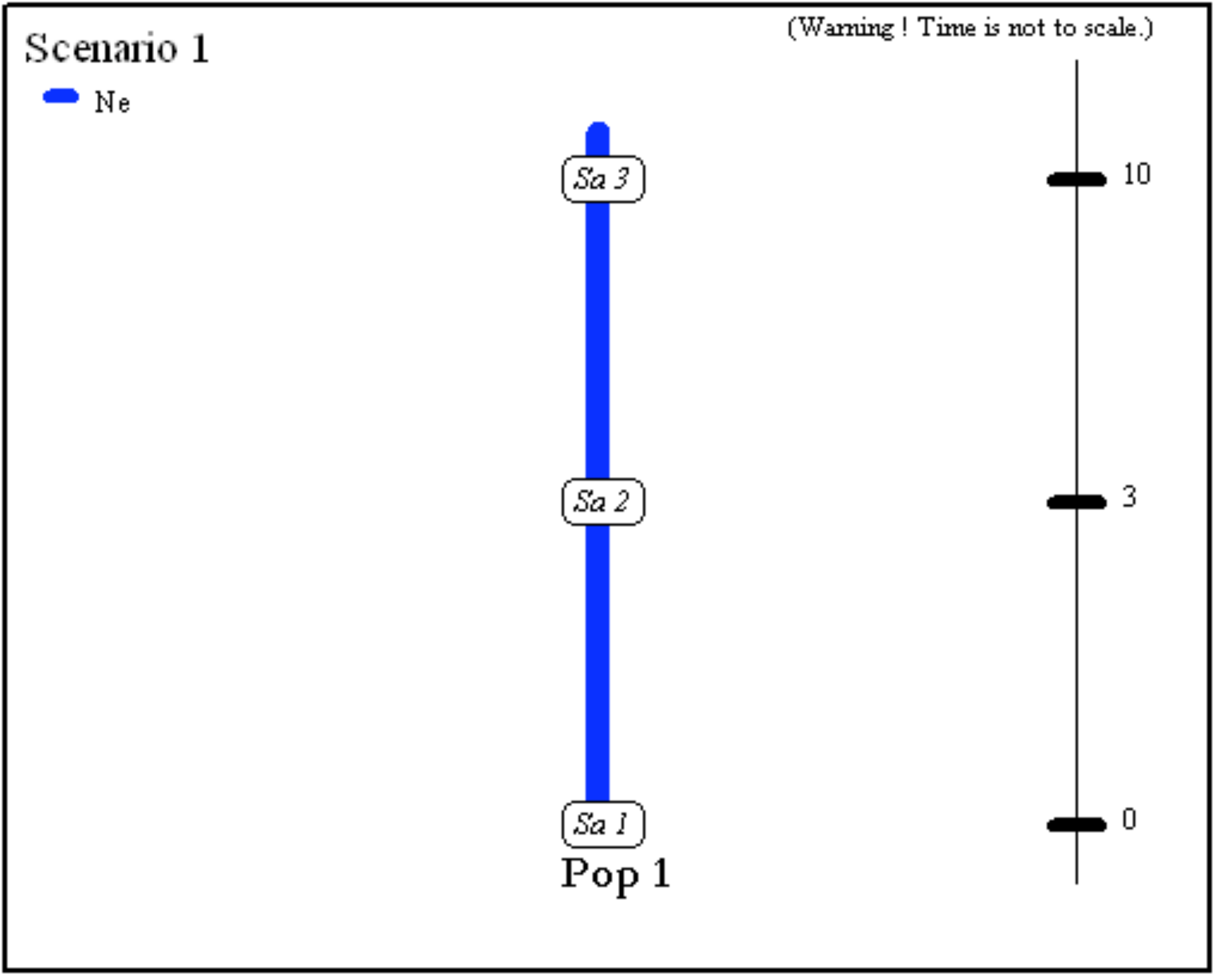

- One population from which several samples have been taken at various generations: 0, 3 and 10. Generation 0 could correspond for instance to the most recent sampling date. The only unknown historical-demographical parameter of the scenario is the (constant) effective population size which is defined in the first line at sampling time.

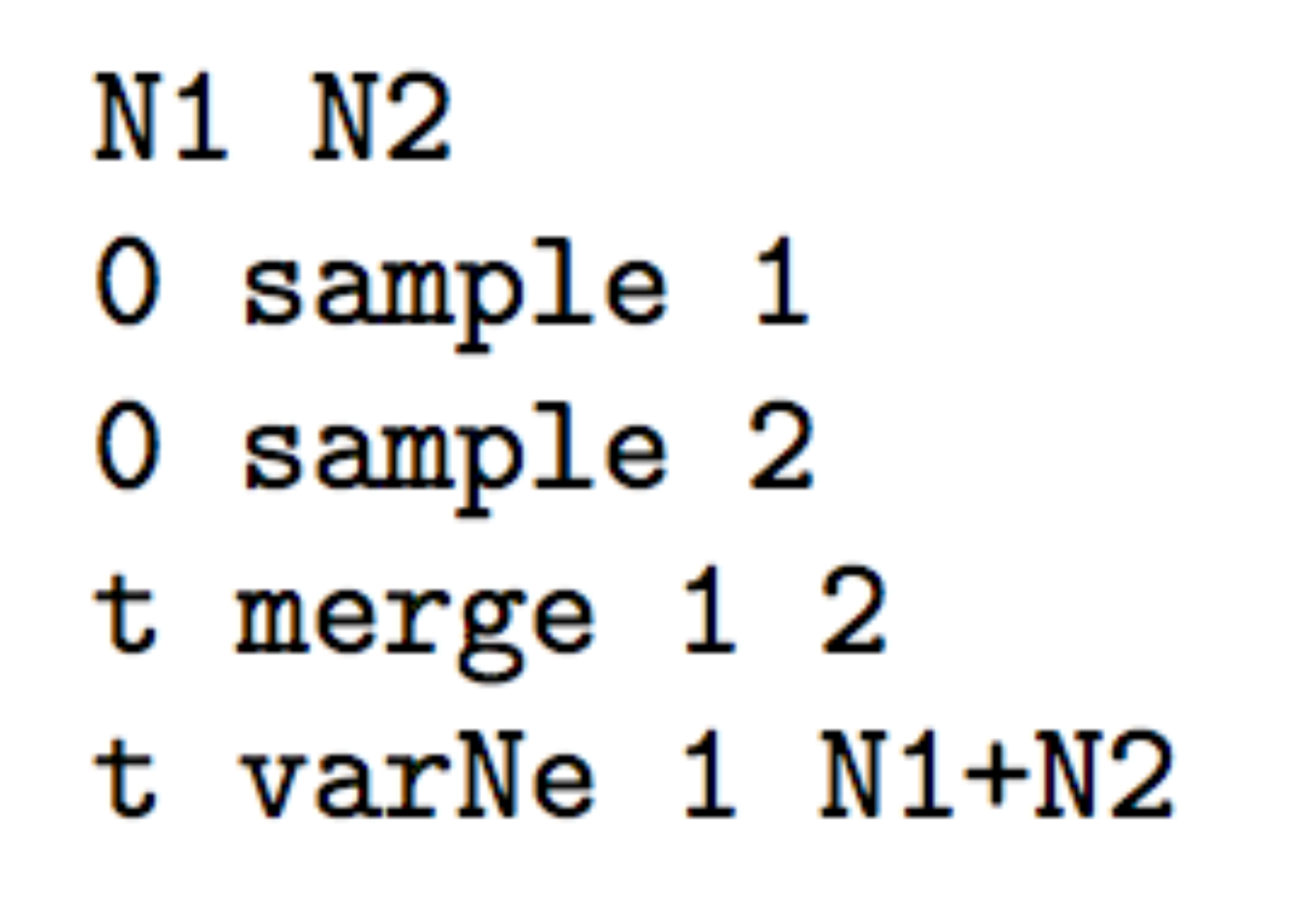

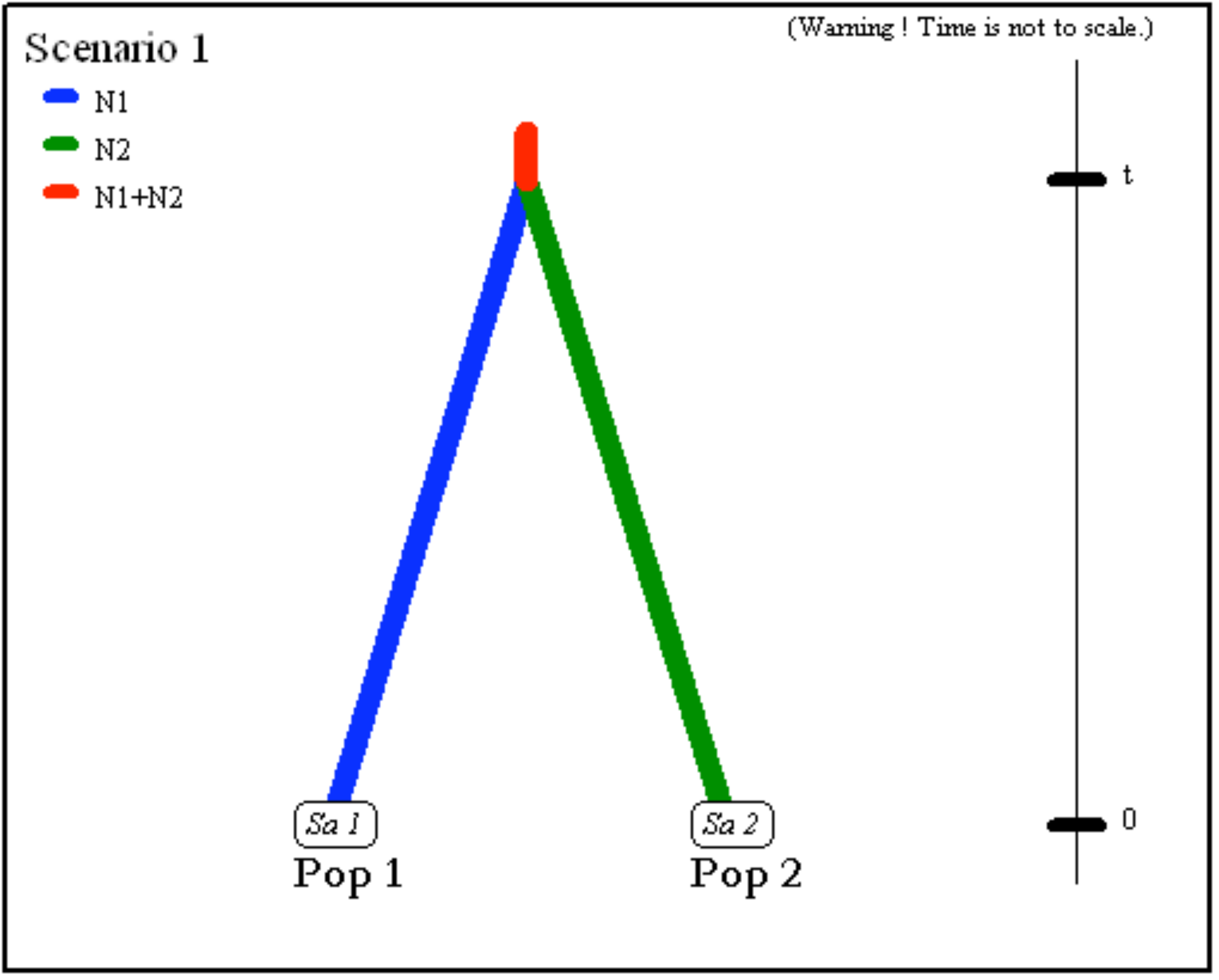

- Two populations of size

N1andN2(defined in the first line at sampling time) have divergedtgenerations in the past from an ancestral population of sizeN1+N2.

- Two parental populations (1 and 2) with constant effective

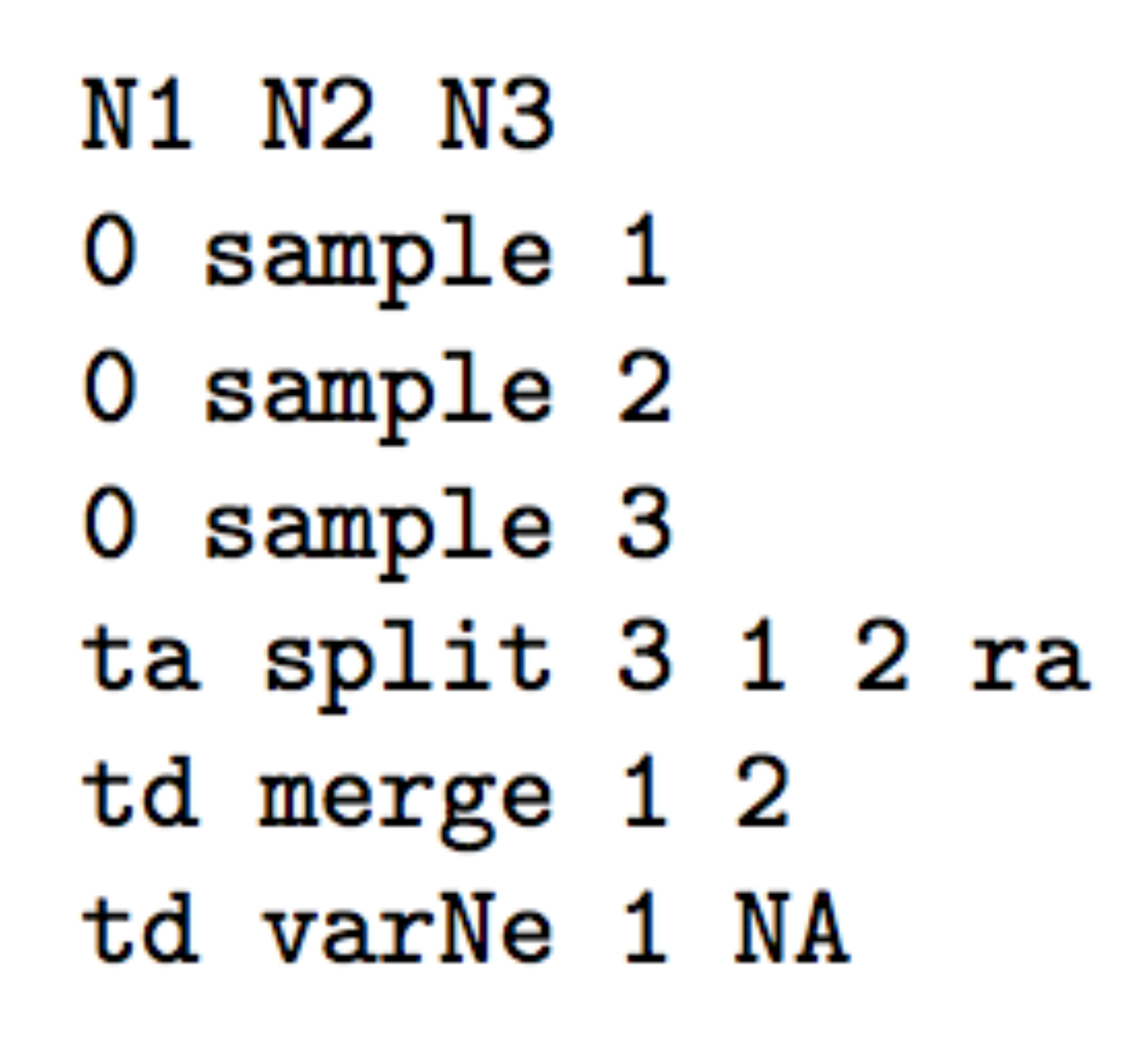

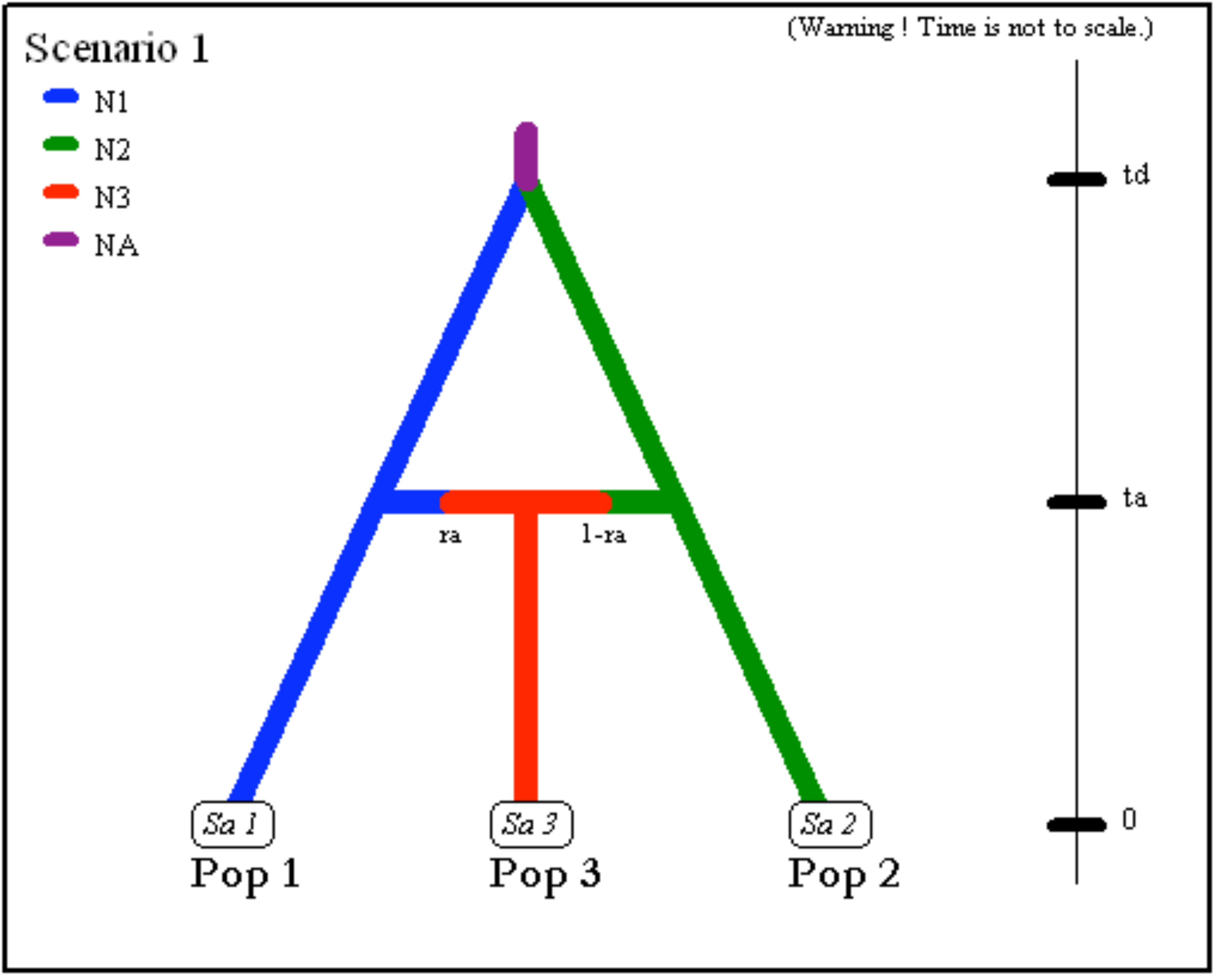

population sizes

N1andN2have diverged at timetdfrom an ancestral population of sizeNA. At timeta, there has been an admixture event between the two populations giving birth to an admixed population (3) with effective sizeN3and with an admixture rateracorresponding to the proportion of genes from population 1.

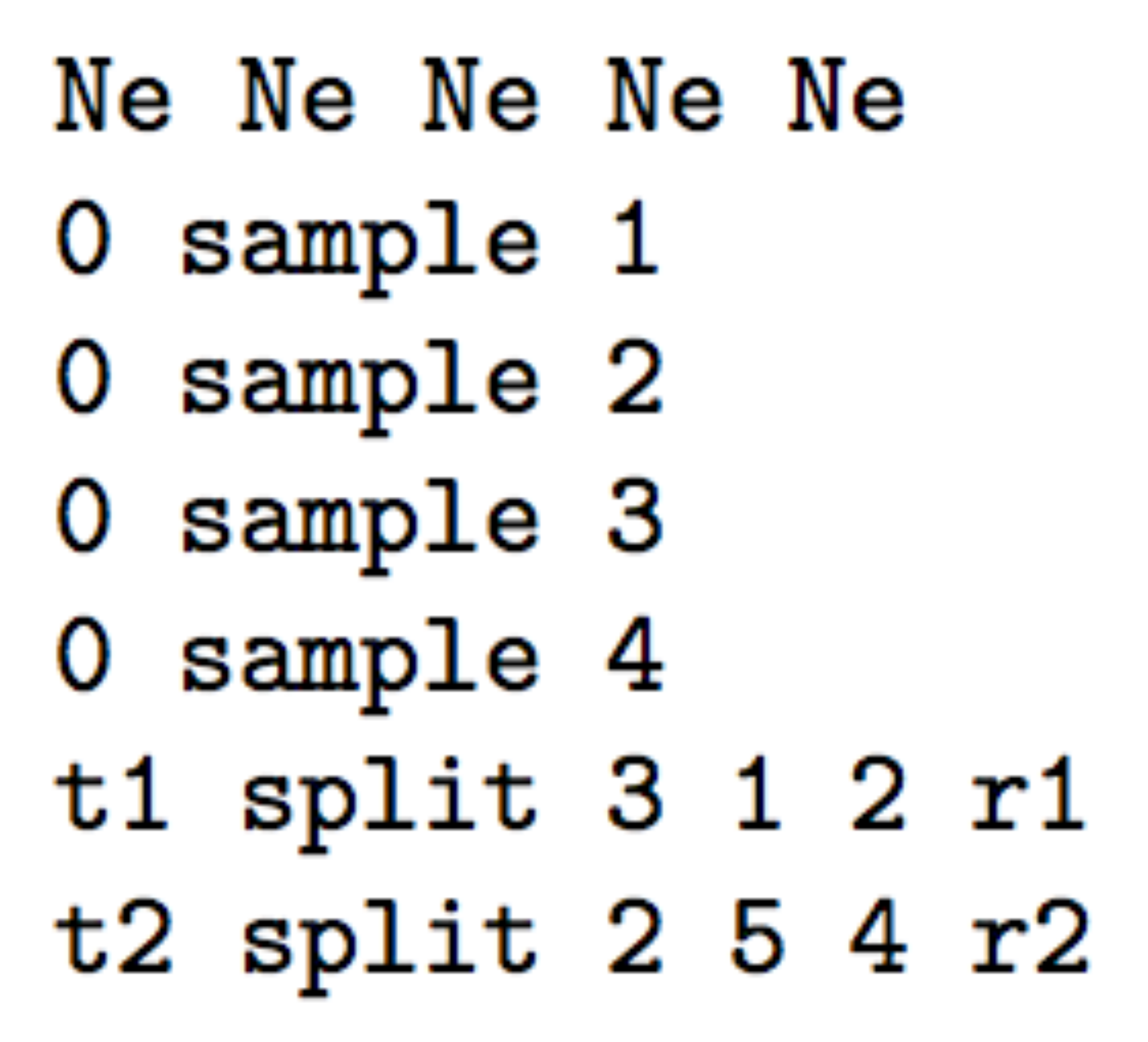

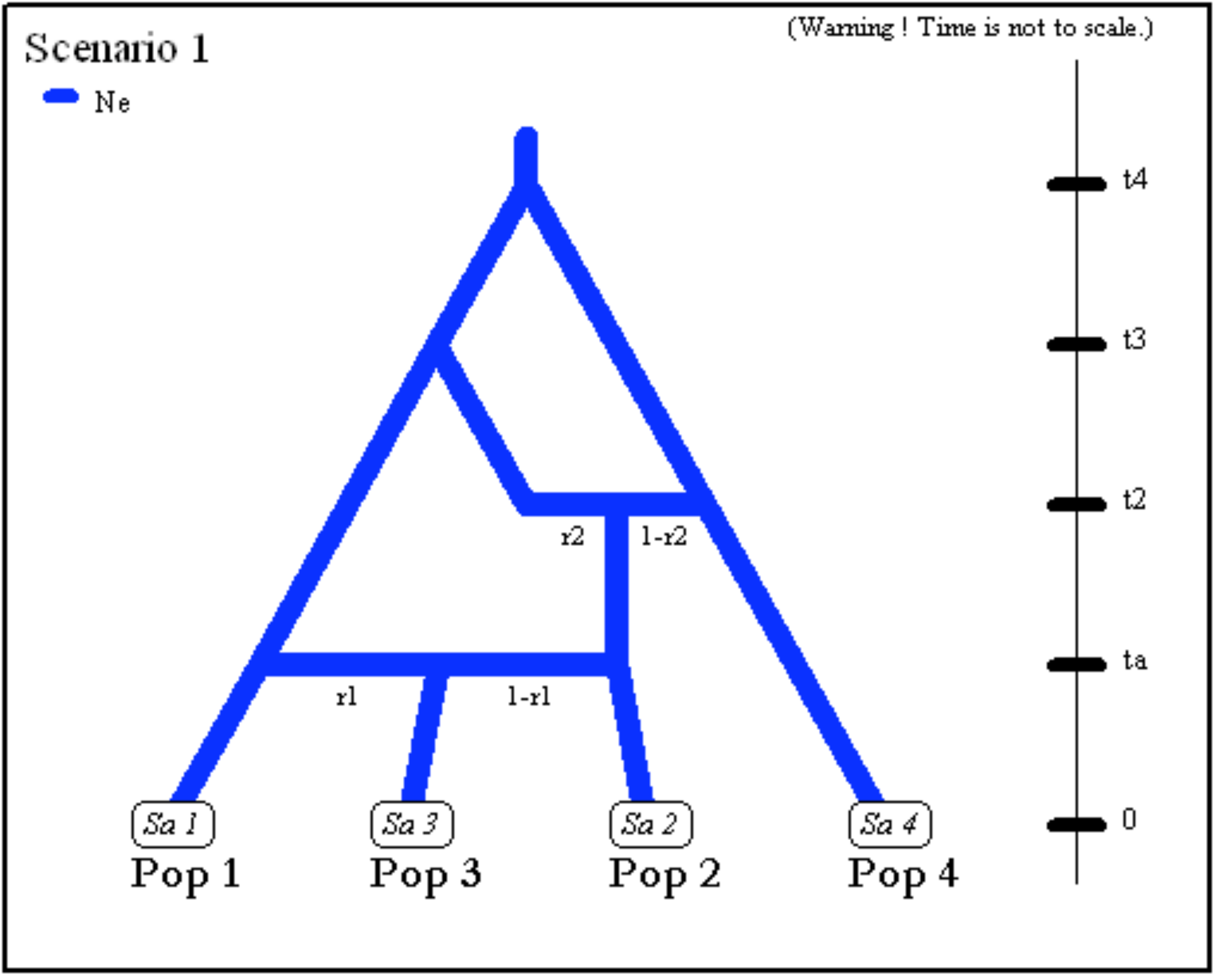

- The next scenario includes four population samples and two

admixture events. All populations have identical effective sizes

(

Ne).

- Note that although there are only four samples, the scenario

includes a fifth unsampled population (also called “ghost

population”). This unsampled population which diverged from

population 1 at time

t3was a parent in the admixture event occurring at timet2. Note also that the first line must include the effective sizes (here Ne) of the five populations. (i.e. the four sampled population and the single unsampled population here at position 5).

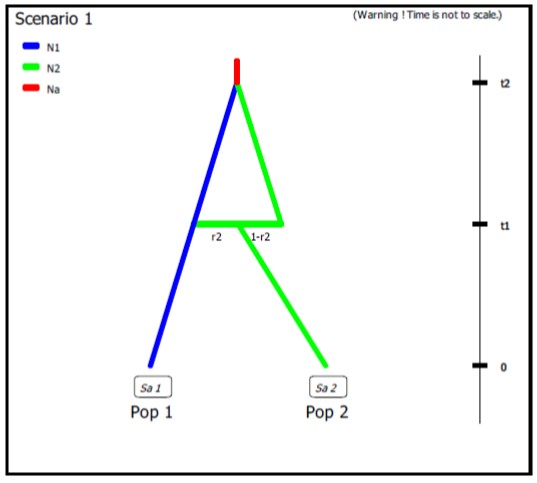

- Example of DIYABC-RF code for unidirectional admixture/introgression where a fraction r2 of genes from population ‘Pop 2’ (with an effective population size N2) introgresses into population ‘Pop 1’ (with a population size N1) at time t1. The code also describes the merge of the two populations ‘Pop 1’ and ‘Pop 2’ into a common ancestor (with a population size Na) at time t2. It is worth noting that: (i) in the observed datafile, there are only two sampled populations (Pop 1 and Pop 2) and the user will need to define two (additional) unsampled populations in the first line of code (Pop 3 and Pop 4 with effective population size N2); (ii) we advise in this case to draw the fraction of genes transferred during the unidirectional admixture (r2) into a uniform distribution with min and max bounds equal to e.g.. 0.01 (very weak introgression from ‘Pop 2’ into ‘Pop 1’) and 0.5 (strong introgression from ‘Pop 2’ into ‘Pop 1’), respectively.

N1 N2 N2 N2 # comment

1

N1 N2 N2 N2 # comment

1

0 sample 1

0 sample 2

t1 split 2 3 4 r2 # comment 2

t1 merge 1 3 # comment 3

t2 merge 1 4 # comment 4

t2 VarNe 1 Na # comment 5

# comment 1: both unsampled populations ‘Pop 3’ and ‘Pop 4’ correspond to ‘Pop 2’, hence their population sizes N2.

# comment 2: at time t1 a fraction r2 of genes from ‘Pop 2’ goes into the unsampled population ‘Pop 3’ and a fraction 1-r2 goes into the unsampled population ‘Pop 4’ (‘Pop 2’ disappears)# comment 3: we immediately merge (cf. still at time t1) the unsampled population ‘Pop 3’ (with the fraction r2 of genes from ‘Pop 2’) into the population ‘Pop 1’ - hence the unidirectional admixture of r2 genes from ‘Pop 2’ into ‘Pop 1’ (‘Pop 3’ disappears)

# comment 4: ‘Pop 4’ which now corresponds to the ex-‘Pop 2’ with the fraction 1-r2 of genes - can be merge into ‘Pop 1’

# comment 5: the population ancestral to ‘Pop 1’ and ‘Pop 2’ now has its own population size Na

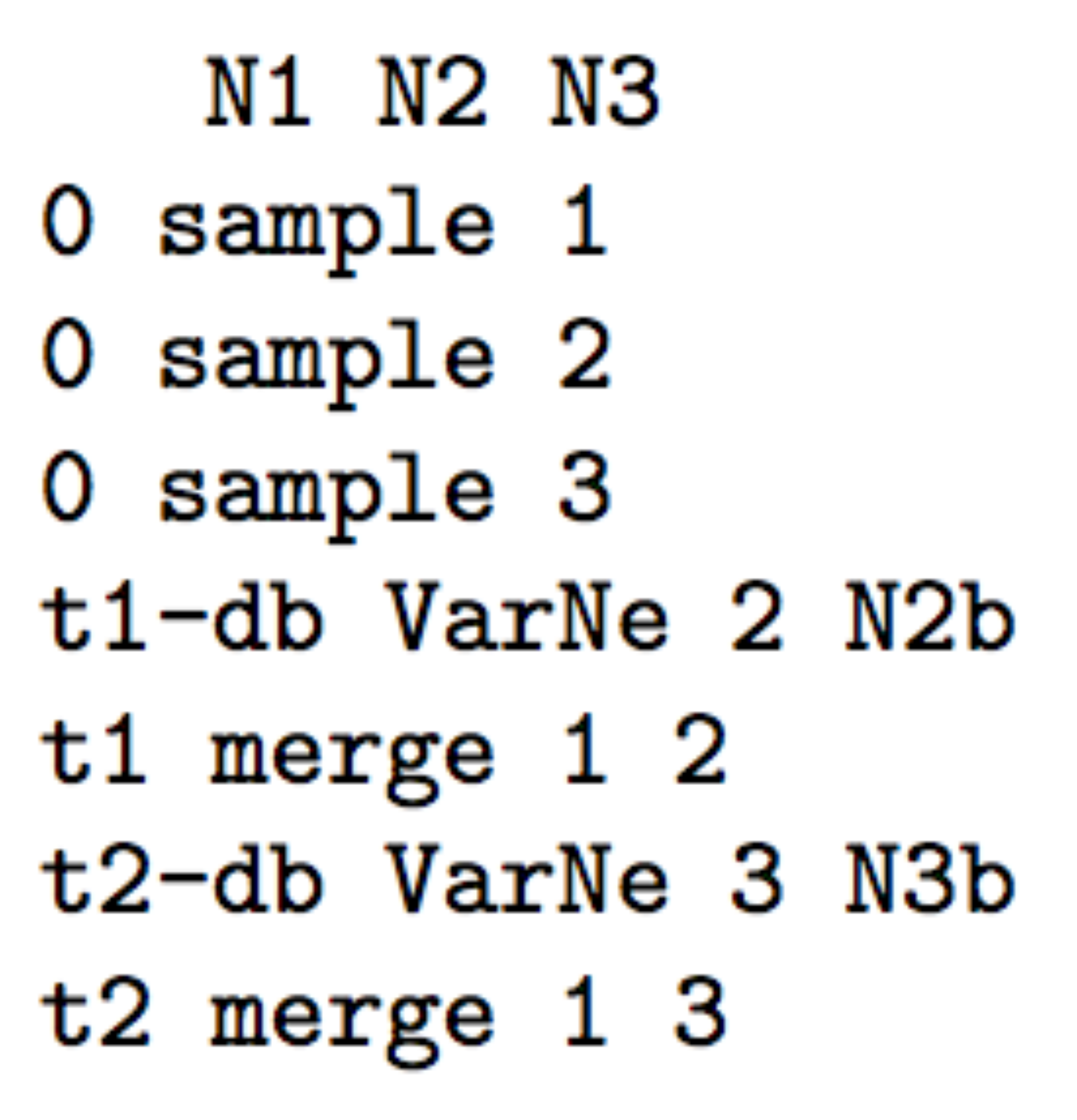

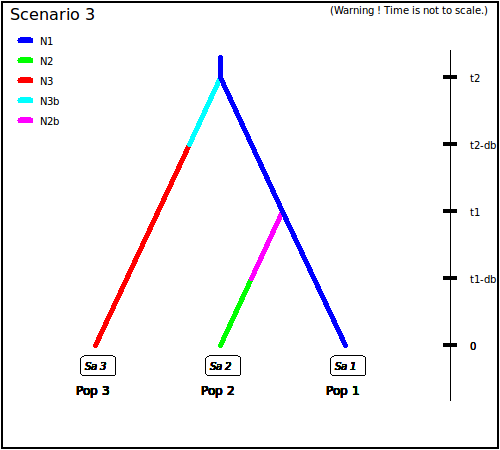

- The following three scenarios correspond to a classic invasion

history from an ancestral population (population 1). In scenario

1, population 3 is derived from population 2, itself derived from

population 1. In scenario 2, population 2 derived from population 3,

itself derived from population 1. In scenario 3, both populations 2

and 3 derived independently from population 1. Note that when a

new population is created from its ancestral population, there is an

initial size reduction (noted here

N2bfor population 2 andN3bfor population 3) for a given number of generation (here db for both populations) mimicking a demographic bottleneck since the invasive population generally starts with a few immigrants. For instance, a low number of Nxb individuals for db generations could corresponds to Nxb values ranging between 5 to 100 individuals and db values ranging from 1 to 10 generations (e.g. Fraimout et al. 2017). If db = 0 then no bottleneck occurs which can be also a coding choice.

Scenario 1

|

|

Scenario 2

|

|

Scenario 3

|

|

2.4 Mutation model parameterization (microsatellite and DNA sequence loci)

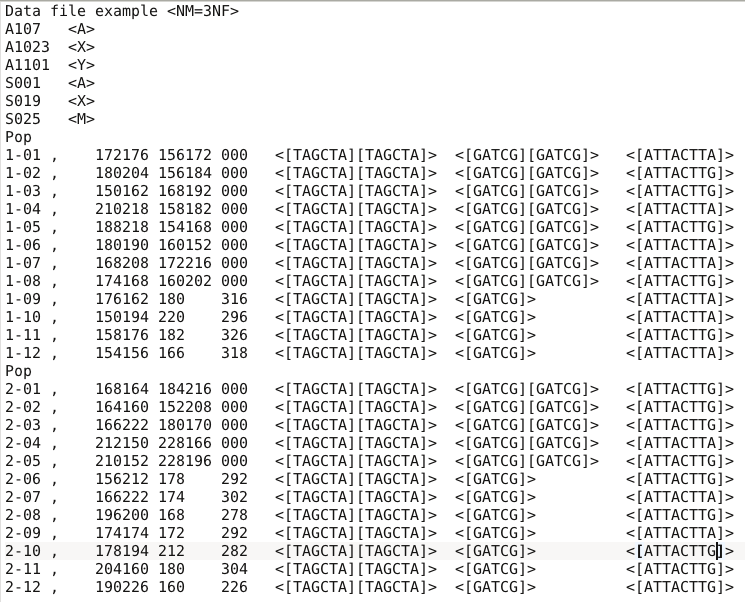

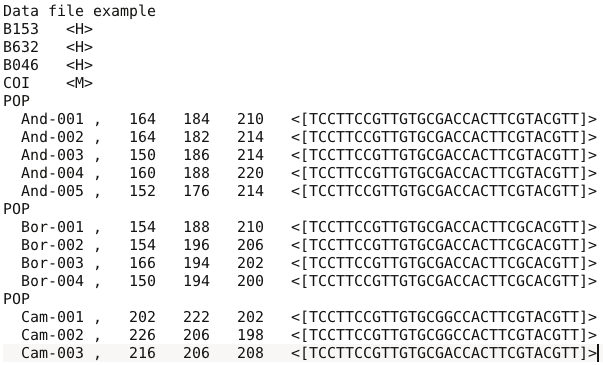

The program can analyze microsatellite data and DNA sequence data altogether as well as separately. SNP loci can only be also analyzed separately from microsatellite data and DNA sequence data. It is worth stressing that all loci in an analysis must be genetically independent. Second, for DNA sequence loci, intra-locus recombination is not considered. Loci are grouped by the user according to its needs. For microsatellite and DNA sequence, a different mutation model can be defined for each group. For instance, one group can include all microsatellites with motifs that are 2 bp long and another group those with a 4 bp long motif. Also, with DNA sequence loci, nuclear loci can be grouped together and a mitochondrial locus form a separate group. SNPs do not require mutation model parameterization (see below for details).

We now describe the parameterization of microsatellite and DNA sequence markers.

2.4.1 Microsatellite loci

Although a variety of mutation models have been proposed for

microsatellite loci, it is usually sufficient to consider only the

simplest models (e.g. Estoup et al. 2002). This has the non-negligible

advantage of reducing the number of parameters. This is why we chose the

Generalized Stepwise Mutation model (GSM). Under this model, a mutation

increases or decreases the length of the microsatellite by a number of

repeated motifs following a geometric distribution. This model

necessitates only two parameters: the mutation rate (µ) and the

parameter of the geometric distribution (P). The same mutation model

is imposed to all loci of a given group. However, each locus has its own

parameters (µi and Pi) and, following a

hierarchical scheme, each locus parameter is drawn from a gamma

distribution with mean equal to the mean parameter value.

Note also that:

-

Individual loci parameters (µi and Pi) are considered as nuisance parameters and hence are never recorded. Only mean parameters are recorded.

-

The variance or shape parameter of the gamma distributions are set by the user and are NOT considered as parameters.

-

The SMM or Stepwise Mutation Model is a special case of the GSM in which the number of repeats involved in a mutation is always one. Such a model can be easily achieved by setting the maximum value of mean P ($\overline{P}$) to 0. In this case, all loci have their $P_{i}$ set equal to 0 whatever the shape of the gamma distribution.

-

All loci can be given the same value of a parameter by setting the shape of the corresponding gamma distribution to 0 (this is NOT a limiting case of the gamma, but only a way of telling the program).

To give more flexibility to the mutation model, the program offers the possibility to consider mutations that insert or delete a single nucleotide to the microsatellite sequence by using a mean parameter (named $\mu_{(SNI)}$) with a prior to be defined and individual loci having either values identical to the mean parameter or drawn from a Gamma distribution.

2.4.2 DNA sequence loci

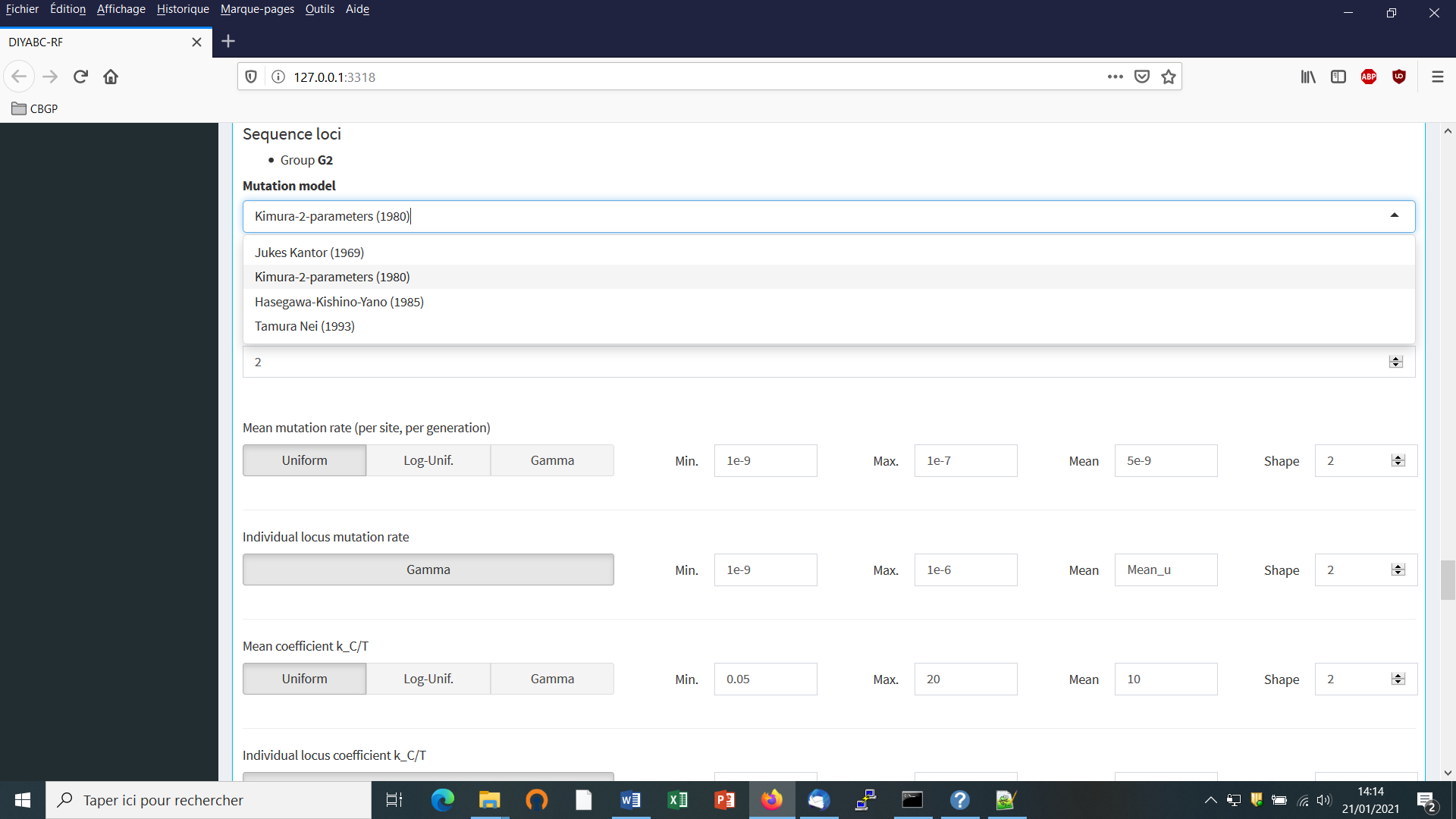

The program does not consider insertion-deletion mutations, mainly because there does not seem to be much consensus on this topic. Concerning substitutions, only the simplest models are considered. We chose the Jukes-Cantor (1969) one parameter model, the Kimura (1980) two parameter model, the Hasegawa-Kishino-Yano (1985) and the Tamura-Nei (1993) models. The last two models include the ratios of each nucleotide as parameters. In order to reduce the number of parameters, these ratios have been fixed to the values calculated from the observed dataset for each DNA sequence locus. Consequently, this leaves two and three parameters for the Hasegawa-Kishino-Yano (HKY) and Tamura-Nei (TN), respectively. Also, two adjustments are possible: one can fix the fraction of constant sites (those that cannot mutate) on the one hand and the shape of the Gamma distribution of mutations among sites on the other hand. As for microsatellites, all sequence loci of the same group are given the same mutation model with mean parameter(s) drawn from priors and each locus has its own parameter(s) drawn from a Gamma distribution (same hierarchical scheme). Notes 1, 2 and 4 of previous subsection (2.4.1) apply also for sequence loci.

2.4.3 SNPs do not require mutation model parameterization – notion of MAF and MRC

SNPs have two characteristics that allow to get rid of mutation models: they are (necessarily) polymorphic and they present only two allelic states (ancestral and derived). In order to be sure that all analyzed SNP loci have the two characteristics, monomorphic loci are discarded right from the beginning of analyses (when the observed dataset is scanned by the program). Note that a warning message will appear if the observed dataset include monomorphic loci, the latter being automatically removed from further analyses by the program. It is assumed that there occurred one and only one mutation in the coalescence tree of sampled genes. We will see below that this largely simplifies (and speeds up) SNP data simulation as one can use in this case the efficient “-s” algorithm of Hudson (2002) (Cornuet et al. 2014). Also, this advantageously reduces the dimension of the parameter space as mutation parameters are not needed in this case. There is however a potential drawback which is the absence of any calibration generally brought by priors on mutation parameters. Consequently, time/effective size ratios rather than original time or effective size parameters can be accurately estimated (e.g. Collin et al. 2021).

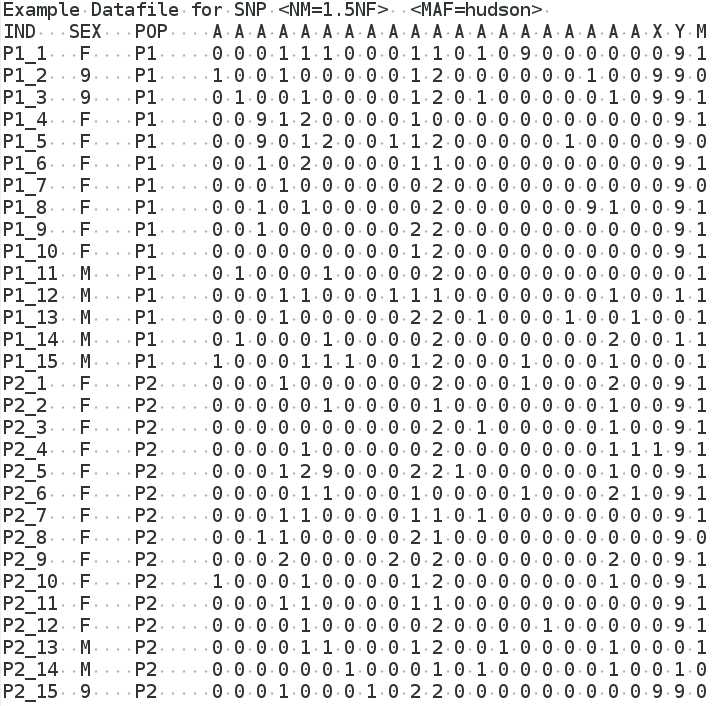

It is worth noting that, using the Hudson’s simulation algorithm for SNP markers leads to applying a kind of default MAF (minimum allele frequency) criterion on the simulated dataset. As a matter of fact, with this simulation algorithm each locus in both the observed and simulated datasets will be characterized by the presence of at least one copy of the SNP alleles over all genes sampled from all studied populations (i.e. pooling all genes genotyped at the locus). In DIYABC-RF, it is possible to impose a different MAF criterion on both the observed and simulated datasets. For each SNP, the MAF is computed pooling all genes genotyped over all studied population samples. For instance, the specification of a MAF equal to 5% will automatically select a subset of m loci characterized by a minimum allele frequency ≥ 5% out of the l loci of the observed dataset and only m loci with a MAF ≥ 5% will be retained in a simulated dataset. In practice, the instruction for a given MAF has to be indicated directly in the headline of the file of the observed dataset (see section 7.1.1 for data file examples). For instance, if one wants to consider only loci with a MAF equal to 5% one will write <MAF=0.05> in the headline. Writing <MAF=hudson> (or omitting to write any instruction with respect to the MAF) will bring the program to use the standard Hudson’s algorithm without further selection. The selection of a subset of loci fitting a given MAF allows: (i) to remove loci with very low level of polymorphism from the dataset and hence increase the mean level of genetic variation of both the observed and simulated datasets, without producing any bias in the analyses; and (ii) to reduce the proportion of loci for which the observed variation may corresponds to sequencing errors. In practice MAF values ≤10% are considered. To check for the consistency/robustness of the ABC results obtained, it may be useful to treat a SNP dataset considering different MAFs (for instance MAF=hudson, MAF=1% and MAF=5%). Note that increasing the MAF leads to increase the simulation times of SNP datasets as more loci need to be simulated with the Hudson’s algorithm to obtain a given number of loci fitting the required MAF.

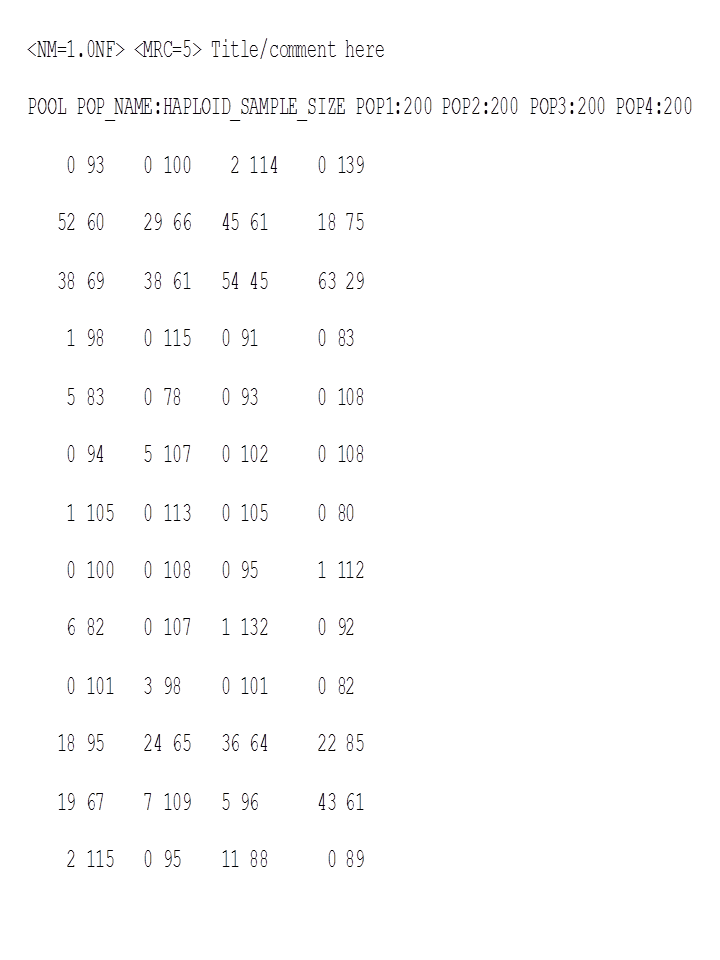

It is worth stressing that the MAF criterion applies to standard Individual sequencing (hereafter IndSeq) SNP data. In addition to IndSeq data, DIYABC-RF allows the simulation and analyses of pool-sequencing SNP data (hereafter PoolSeq data), which basically consist of whole-genome sequences of pools of tens to hundreds of individual DNAs (Gautier et al. 2013; Schlötterer et al. 2014). In practice, the simulation of PoolSeq data consists first in simulating individual SNP genotypes for all individuals in each population pool, and then generating pool read counts from a binomial distribution parameterized with the simulated allele counts (obtained from individual SNP genotypes) and the total pool read coverage (e.g., Hivert et al. 2018). A criterion somewhat similar to the MAF was implemented for PoolSeq data: the minimum read count (MRC). The MRC is the minimum number of sequence reads for each alleles of a SNP when pooling the reads overall population samples. The specification of a MRC equal for instance to 5 will automatically select a subset of m PoolSeq loci for which both alleles have at least five reads among the l loci of the observed dataset and only m loci with a MRC ≥ 5 will be retained in a simulated dataset. In practice, the instruction for a given MRC has to be indicated directly in the headline of the file of the observed dataset (see section 7.1.2 for data file examples). For instance, if one wants to consider only loci with a MRC equal to 5 one will write <MRC=5> in the headline. The selection of a subset of loci fitting a given MRC allows to reduce the proportion of loci for which the observed variation may corresponds to sequencing errors. If MRC is large enough (e.g. MRC=5 or 10), it also removes the loci with very low level of polymorphism from the dataset and hence increase the mean level of genetic variation of both the observed and simulated datasets, without producing any bias in the analyses; In practice MRC values of 2, 3,4 and 5 are recommended. To check for the consistency/robustness of the ABC results obtained, it may be useful to treat a SNP dataset considering different MAFs (for instance MRC=2 and MRC=5). As for the MAF, increasing the MRC leads to increase the simulation times of PoolSeq SNP datasets as more loci need to be simulated with the Hudson’s algorithm to obtain a given number of loci fitting the required MRC.

2.5 Prior distributions

The Bayesian aspect of the ABC-RF approach implies that parameter

estimations use prior knowledge about these parameters that is given by

prior distributions of parameters. The program offers a choice among

usual probability distributions, i.e. Uniform, Log-Uniform, Normal or

Log-Normal for historical parameters and Uniform, Log-Uniform or Gamma

for mutation parameters. Extremum values (min and max) and other

parameters (e. g. mean and standard deviation) must be filled in by the

user. It is worth noting that one can impose some simple conditions on

historical parameters. For instance, there can be two times parameters

with overlapping prior distributions. However, we want that the first

one, say t1, to always be larger than the second one, say t2. For

that, we just need to set t1>t2 in the corresponding

edit-windows. Such a condition needs to be between two parameters and

more precisely between two parameters of the same category (i.e. two

effective sizes, two times or two admixture rates). The limit to the

number of conditions is imposed by the logics, not by the program. The

only binary relationships accepted here are <, >, ≤, and ≥.

2.6 Summary statistics as components of the feature vector

The training set includes values of a feature vector which is a multidimensional representation of any data point (i.e. simulated or observed datasets) made up of measurements (or features) taken from it. More specifically, the feature vector includes a large number of statistics that summarize genetic variation in the way that they allow capturing different aspects of gene genealogies and hence various features of molecular patterns generated by selectively neutral population histories (e.g. Beaumont 2010; Cornuet et al. 2014). For each category (microsatellite, DNA sequences or SNP) of loci, the program proposes a series of summary statistics among those used by population geneticists.

Note: The code name of each statistic mentioned in the various program outputs is given between brackets [XXX] in sections 2.6.1, 2.6.2 and 2.6.3.

2.6.1 For microsatellite loci

Single sample statistics:

-

[NAL] - mean number of alleles across loci

-

[HET] - mean gene diversity across loci (Nei 1987)

-

[VAR] - mean allele size variance across loci

-

[MGW] - mean M index across loci (Garza and Williamson 2001; Excoffier et al. 2005)

Two sample statistics:

-

[N2P] - mean number of alleles across loci (two samples)

-

[H2P] - mean gene diversity across loci (two samples)

-

[V2P] - mean allele size variance across loci (two samples)

-

[FST] -$F_{ST}$ between two samples (Weir and Cockerham 1984)

-

[LIK] - mean index of classification (two samples)

(Rannala and Moutain 1997; Pascual et al. 2007)

-

[DAS] - shared allele distance between two samples (Chakraborty and Jin 1993)

-

[DM2] - distance between two samples (Golstein et al. 1995)

Three sample statistics:

- [AML] - Maximum likelihood coefficient of admixture (Choisy et al. 2004)

2.6.2 For DNA sequence loci

Single sample statistics:

-

[NHA] - number of distinct haplotypes

-

[NSS] - number of segregating sites

-

[MPD] - mean pairwise difference

-

[VPD] - variance of the number of pairwise differences

-

[DTA] - Tajima’s D statistics (Tajima 1989)

-

[PSS] - Number of private segregating sites

(=number of segregating sites if there is only one sample)

-

[MNS] - Mean of the numbers of the rarest nucleotide at segregating sites

-

[VNS] - Variance of the numbers of the rarest nucleotide at segregating sites

Two sample statistics:

-

[NH2] - number of distinct haplotypes in the pooled sample

-

[NS2] - number of segregating sites in the pooled sample

-

[MP2] - mean of within sample pairwise differences

-

[MPB] - mean of between sample pairwise differences

-

[HST] -$F_{ST}$ between two samples (Hudson et al. 1992)

Three sample statistics:

- [SML] Maximum likelihood coefficient of admixture (adapted from Choisy et al. 2004)

2.6.3 For SNP loci

For both IndSeq and PoolSeq SNPs, we have implemented the following (same) set of summary statistics.

1. [ML1p] [ML2p] [ML3p] [ML4p] - Proportion of monomorphic loci for each population, as well as for each pair, triplet and quadruplet of populations.

Mean (m suffix added to the code name) and variance (v suffix) over loci values are computed for all subsequent summary statistics

2. [HWm] [HWv] [HBm] [HBv] - Heterozygosity for each population and for each pair of populations (Hivert et al. 2018).

3. [FST1m] [FST1v] [FST2m] [FST2v] [FST3m] [FST3v] [FST4m] [FST4v] [FSTGm] [FSTGv] - FST-related statistics for each population (i.e., population-specific FST; Weir & Goudet 2017), for each pair, triplet, quadruplet and overall populations (when the dataset includes more than four populations) (Hivert et al. 2018).

4. [F3m] [F3v] [F4m] [F4v] – allele shared Patterson’s f-statistics for each triplet (f3-statistics) and quadruplet (f4-statistics) of populations (Patterson et al. 2012 and Leblois et al. 2018 for the PoolSeq unbiased f3-statistics)

5. [NEIm] [NEIv] - Nei’s (1972) distance for each pair of populations

6. [AMLm] [AMLv] - Maximum likelihood coefficient of admixture computed for each triplet of populations (adapted from Choisy et al. 2004).

2.7 Generating the training set

The training set can include as many scenarios as desired. The prior probability of each scenario is uniform so that each scenario will have approximately the same number of simulated datasets. Only parameters that are defined for the drawn scenario are generated from their respective prior distribution. Scenarios may or may not share parameters. When conditions apply to some parameters (see section 2.3), parameter sets are drawn in their respective prior distributions until all conditions are fulfilled. The simulated datasets (summarized with the statistics detailed in section 2.6) of the training set are recorded for further statistical analyses (using Random Forest algorithms) in a key binary file named reftableRF.bin.

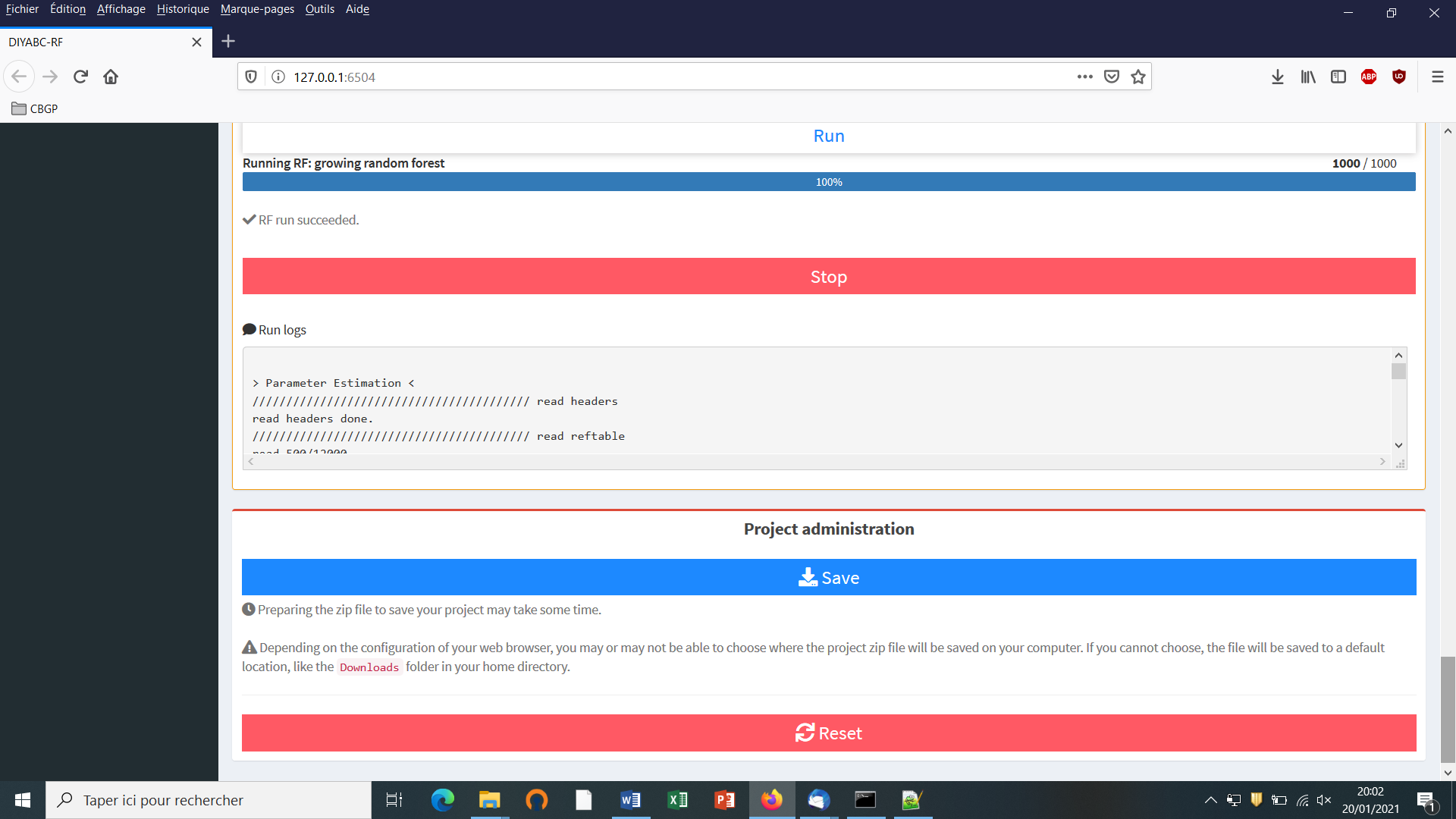

3. RANDOM FOREST ANALYSIS

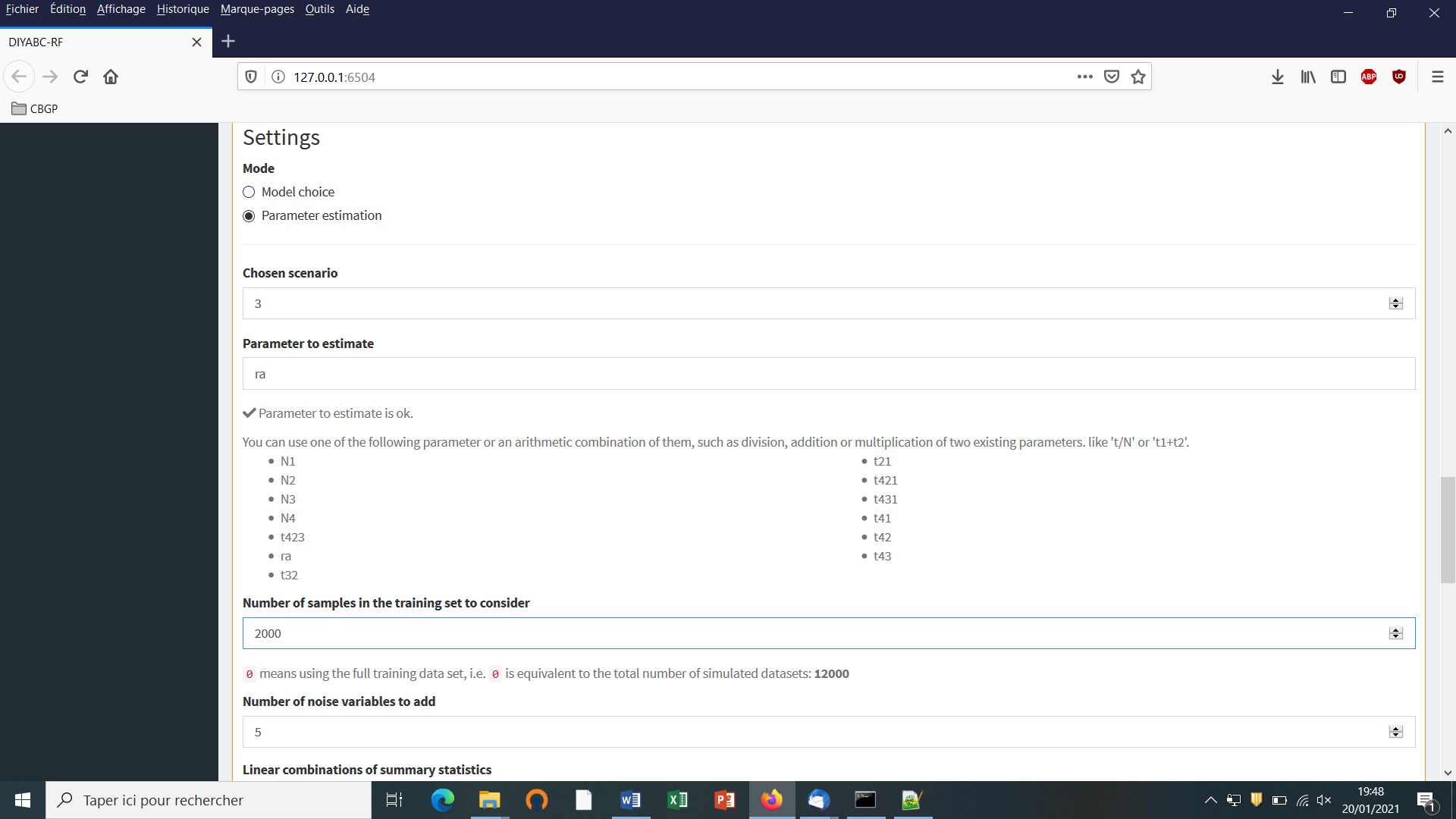

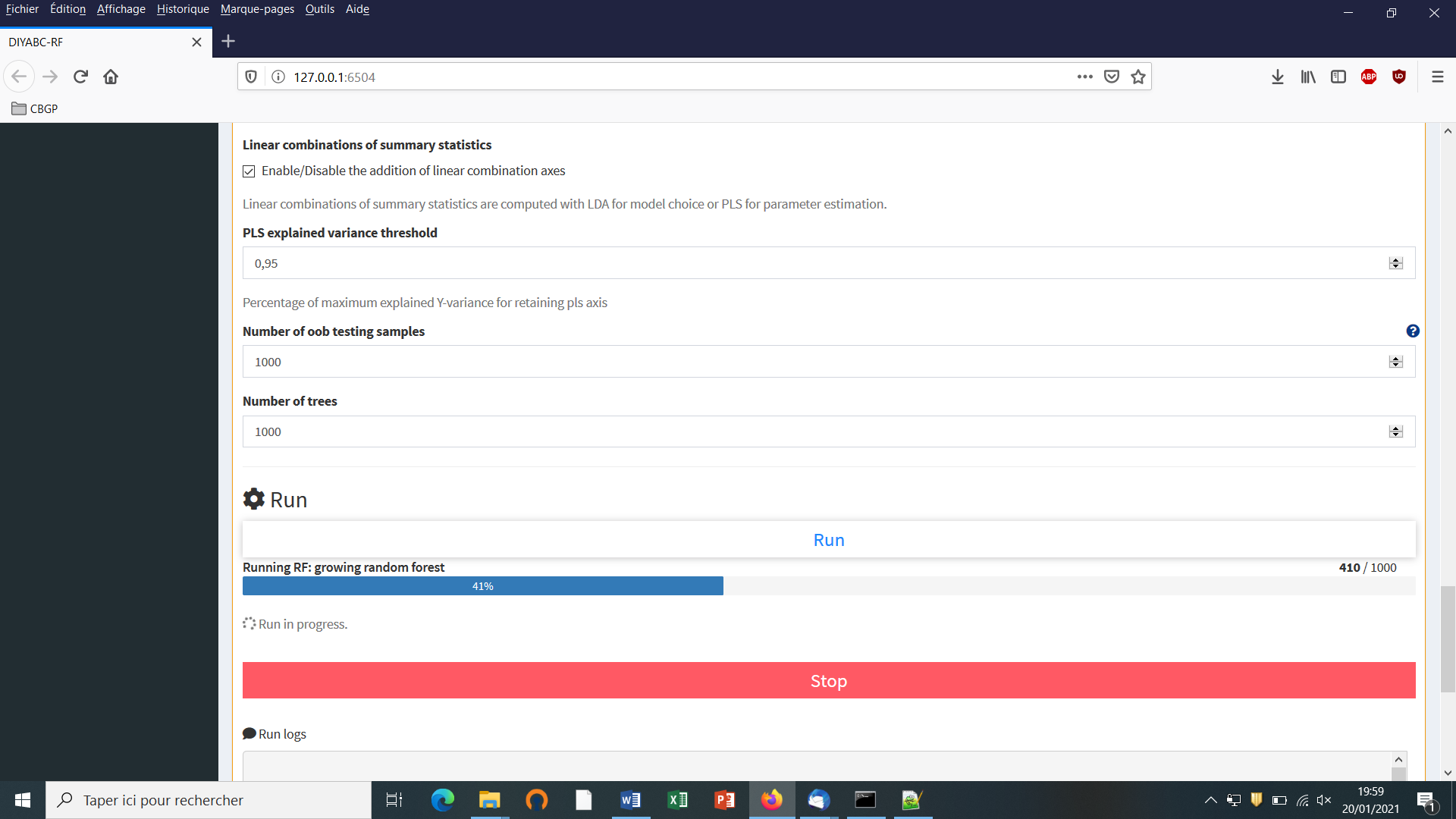

Once the training set has been generated, one can start various statistical treatments using the Random Forest (RF) algorithms implemented in the program and by running the “Random Forest analyses” module.

3.1 Addition of linear combinations of summary statistics to the vector feature

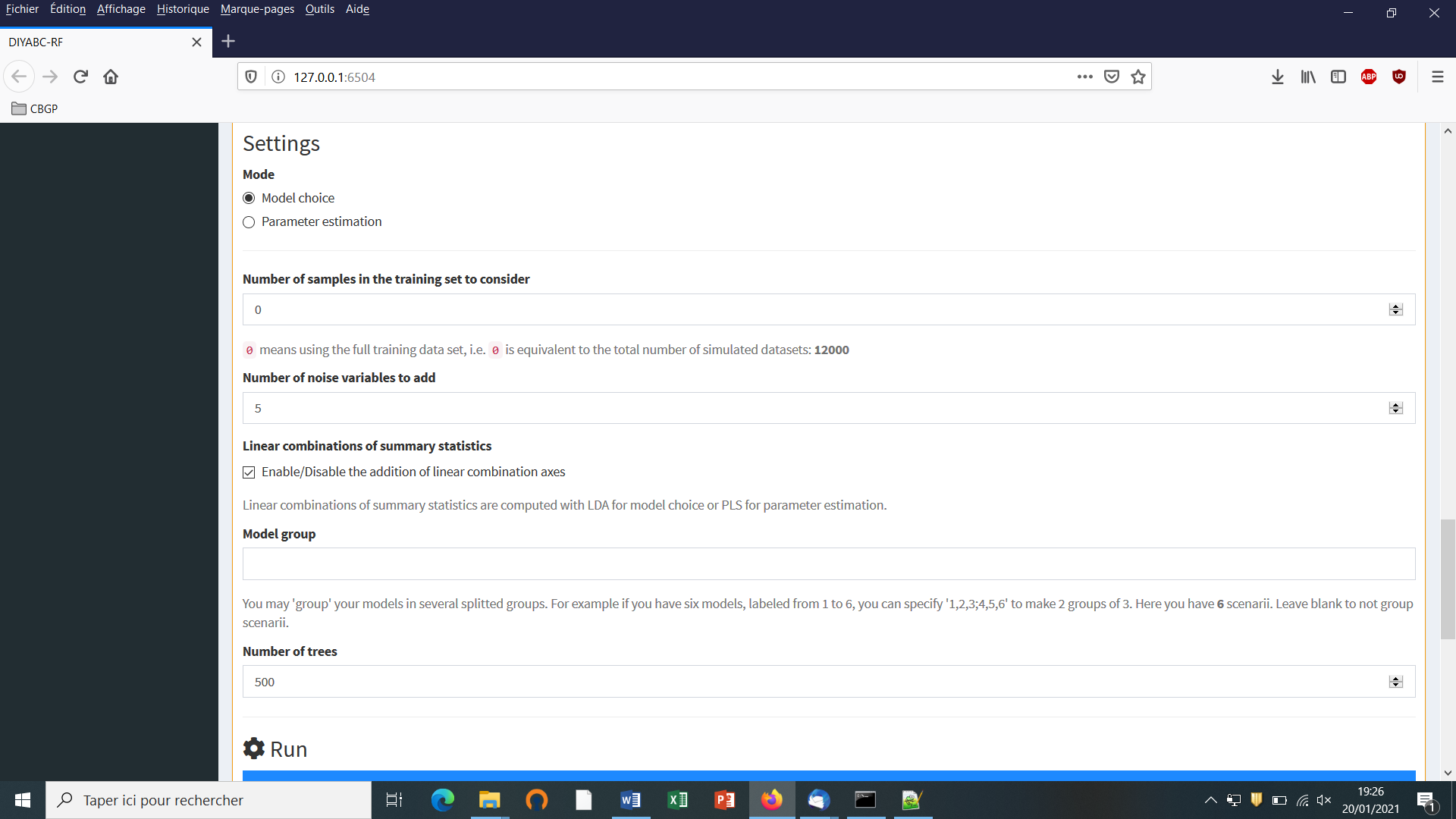

For scenario choice, the feature vector can be enriched before processing RF predictions (default option that can be disabled) by values of the d axes of a linear discriminant analysis (LDA) processed on the above summary statistics (with d equal to the number of scenarios minus 1; Pudlo et al. 2016). In the same spirit, for parameter estimation, the feature vector can be completed (default option that can be disabled) by values of a subset of the s axes of a Partial Least Squares Regression analysis (PLS) also processed on the above summary statistics (with s equal to the number of summary statistics). The number of PLS axes added to the feature vector is determined as the number of PLS axes providing a given fraction of the maximum amount of variance explained by all PLS axes (i.e., 95% by default, but this parameter can be adjusted). Note that, according to our own experience on this issue, the addition into the feature vector of LDA or PLS axes better extract genetic information from the training set and hence globally improved statistical inferences. While the inferential gain turned out to be systematic and substantial for LDA axes (scenario choice), we found that including PLS axes improved parameter estimation in a heterogeneous way, with a negligible gain in some cases (e.g. Collin et al. 2020).

3.2 Prediction using Random Forest: scenario choice

For scenario choice, the outcome of the first step of RF prediction applied to a given target dataset is a classification vote for each scenario which represents the number of times a scenario is selected in a forest of n trees. The scenario with the highest classification vote corresponds to the scenario best suited to the target dataset among the set of compared scenarios. This first RF predictor is good enough to select the most likely scenario but not to derive directly the associated posterior probabilities. A second analytical step based on a second Random Forest in regression is necessary to provide an estimation of the posterior probability of the best supported scenario (Pudlo et al. 2016).

3.3 Prediction using Random Forest: parameter estimation

For parameter estimation, Raynal et al. (2019) extended the RF approach developed in the context of (non-parametric) regression to estimate the posterior distributions of a given parameter under a given scenario. The approach requires the derivation of a new Random Forest for each component of interest of the parameter vector (i.e. “one parameter, one forest strategy”; Raynal et al. 2019). Quite often, practitioners of Bayesian inference report the posterior mean, posterior variance or posterior quantiles, rather than the full posterior distribution, since the former are easier to interpret than the latter. We implemented in DIYABC-RF the methodologies detailed in Raynal et al. (2019) to provide estimations of the posterior mean, variance, median (i.e. 50% quantile) as well as 5% and 95% quantiles (and hence 90% credibility interval) of each parameter of interest. The posterior distribution of each parameter of interest is also inferred using importance weights following Meinshausen (2006)’s work on quantile regression forests.

3.4 Assessing the quality of predictions

To evaluate the robustness of inferences, DIYABC-RFt provides:

-

Global (i.e. prior) error/accuracy metrics corresponding to prediction quality measures computed over the entire data space;

-

Local (i.e. posterior) error/accuracy metrics computed conditionally on the observed dataset and hence corresponding to prediction quality exactly at the position of the observed dataset.

Note that the program used the out-of-bag prediction method for estimating global and local error/accuracy measures (Pudlo et al. 2016; Raynal et al. 2019; Chapuis et al. 2020). The out-of-bag dataset corresponds to the data of the training set that were not selected when creating the different tree bootstrap samples and is hence equivalent to using an independent test dataset (Breiman, 2001; Pudlo et al. 2016; Raynal et al. 2019). Using the out-of-bag prediction method for estimating global and local error/accuracy measures is computationally efficient as this approach makes use of the datasets already present in the training set and hence avoids the computationally costly simulations (especially for large SNP datasets) of additional test datasets.

3.4.1 Metrics for scenario choice

-

Global prior errors including the confusion matrix (i.e. the contingency table of the true and predicted classes – here scenarios - for each example in the training set) and the mean misclassification error rate (over all scenarios).

-

Local posterior error which corresponds to 1 minus the posterior probability of the selected scenario (Chapuis et al. 2020).

3.4.2 Metrics for parameter estimation

-

Global (prior) and local (posterior) NMAE (i.e. normalized mean absolute error): it is the average absolute difference between the point estimate and the true simulated value divided by the true simulated value, with the mean or the median taken as point estimate.

-

Global (prior) and local (posterior) MSE and NMSE (i.e. the mean square error): it is the average squared difference between the point estimate and the true simulated value for MSE, divided by the true simulated value for NMSE, again with the mean or the median taken as point estimate.

-

Several confidence interval measures computed only at the global (prior) scale:

- 90% coverage: it is the proportion of true simulated values located between the estimated 5% and 95% quantiles.

- Mean or median of the 90% amplitude: it is the mean or median of the difference between the estimated 5% and 95% quantiles.

- Mean or median of the relative 90% amplitude: it is the mean or median of the difference between the estimated 5% and 95% divided by the true simulated value.

4. PRACTICAL CONSIDERATIONS FOR ABC-RF TREATMENTS

Random Forest is often (positively) considered as a “tuning-free” method in the sense that it does not require meticulous calibrations. This represents an important advantage of this method (for instance compared to standard ABC methods, Neural Network methods and more sophisticated Deep Learning methods; Collin et al. 2020), especially for non-expert users. In practice, we nevertheless advise users to consider several checking points, thereafter formalized as questions, before finalizing inferential treatments using DIYABC-RF.

4.1 Are my scenarios and/or associated priors compatible with the observed dataset?

This question is of prime interest and applies to ABC-RF as well as to any alternative ABC treatments. This issue is particularly crucial, given that complex scenarios and high dimensional datasets (i.e., large and hence very informative datasets) are becoming the norm in population genomics. Basically, if none of the proposed scenario / prior combinations produces some simulated datasets in a reasonable vicinity of the observed dataset, this is a signal of incompatibility and it is not recommended to attempt any inferences. In such situations, we strongly advise reformulating the compared scenarios and/or the associated prior distributions in order to achieve some compatibility in the above sense. DIYABC-RF proposes a visual way to address this issue through the simultaneous projection of datasets of the training database and of the observed dataset on the first Linear Discriminant Analysis (LDA) axes. In the LDA projection, the (target) observed dataset has to be reasonably located within the clouds of simulated datasets. The program also proposes some complementary dedicated tools: (i) a Principal Component Analysis (PCA) representing on 2-axes plans the simulated dataset from the training set and the observed dataset; and (ii), for each summary statistics, the proportion of simulated data (considering the total training set) that have a value below the value of the observed dataset. A star indicates proportions lower than 5% or greater than 95% (two stars, <1% or >1%; three stars, <0.1% or >0.1%). The latter numerical results can help users to reformulating the compared scenarios and/or the associated prior distributions in order to achieve some compatibility (see e.g. Cornuet et al. 2010). See also the complementary and more basic procedure based on Principal Component Analysis (PCA) proposed in section “5.4.7 Step 7: Prior-scenario checking PRE-ANALYSIS (optional but recommended)”.

4.2 Did I simulate enough datasets for my training set?

A rule of thumb is, for scenario choice to simulate between 2,000 and 20,000 datasets per scenario among those compared (Pudlo et al. 2016; Estoup et al. 2018), and for parameter estimation to simulate between 10,000 and 100,000 datasets under a given scenario (Raynal et al. 2019; Chapuis et al. 2020). To evaluate whether or not this number is sufficient for RF analysis, we recommend to compute error/accuracy metrics such as those proposed by DIYABC-RF from both the entire training set and a subset of the latter (for instance from a subset of 80,000 simulated datasets if the training set includes a total of 100,000 simulated datasets). If error (accuracy) metrics from the subset are similar, or only slightly higher (lower) than the value obtained from the entire database, one can consider that the training set contains enough simulated datasets. If a substantial difference is observed between both values, then we recommend increasing the number of simulated datasets in the training set.

4.3 Did my forest frow enough trees?

According to our experience, a forest made of 500 to 2,000 trees often constitutes an interesting trade-off between computation efficiency and statistical precision (Breiman, 2001; Chapuis et al. 2020; Pudlo et al. 2016; Raynal et al. 2019). To evaluate whether or not this number is sufficient, we recommend plotting error/accuracy metrics as a function of the number of trees in the forest. The shapes of the curves provide a visual diagnostic of whether such key metrics stabilize when the number of trees tends to a given value. DIYABC-RF provides such a plot-figure as output.

5. RUNNING (EXAMPLE) DATASET TREATMENTS USING THE GRAPHIC USER INTERFACE (GUI)

5.1 Launching the GUI

-

The GUI is the standard and user-friendly interface to use the software DIYABC-RF. You can configure the required parameters and follow the progress directly in the interface and through log files. A given GUI session (i.e. a window) allows working on a single project. You can launch several GUI sessions (i.e. several windows) on a single computer hence allowing working on several projects in parallel.

-

When using the operating system Microsoft Windows, launch the GUI by double-clicking on the file DIYABC-RF_GUI.bat included in the DIYABC-RF_GUI_xxx_windows.zip file available from https://diyabc.github.io. When using MacOS, launch the GUI by double-clicking on the icon of the file DIYABC-RF_GUI.command included in the DIYABC-RF_GUI_xxx_macos.zip file available from https://diyabc.github.io

It is worth stressing that the program can also be run locally as any shiny application using R (only possibility on Linux). You can visit https://diyabc.github.io/gui/#r-package-installation for more details.

The main pipeline of DIYABC-RF includes two modules corresponding to the two main phases:

- Module 1 = “Training set simulation” where users specify how simulated data will be generated under the ABC framework to produce a training set.

- Module 2 = “Random Forest analysis” (necessitating the presence of a training set) which guides users through scenario choice and parameter inference by providing a simple interface for the supervised learning framework based on Random Forest methodologies.

An additional (auxiliary) module named “Synthetic data file generation” is also available from the GUI (cf. section 8). It can be used to easily generate data file(s) corresponding to synthetic “ground truth” raw data (not summarized through statistics) under a given historical scenario and a set of fixed parameter values, for instance for benchmarking purpose. The formats of such simulated data files are the same as those described in section 7.1 for observed (i.e. real) datasets for which one usually wants to make inferences.

5.2 What is a DIYABC-RF project?

A DIYABC-RF project consists of different files, related to the training set simulation and random forest analyses. A project includes at least one observed dataset and one header file associated to a (already generated or not) training set. The header file, named headerRF.txt, contains all information necessary to compute a training set associated with the data: i.e. the scenarios, the scenario parameter priors, the characteristics of loci, the loci parameter priors and the list of summary statistics to compute using the Training set simulation module. As soon as the first records of the training set have been simulated, they are saved in the training set file, named reftableRF.bin. In addition, the file statobsRF.txt, stores the value of the summary statistics computed from the observed dataset.

Outputs of Random forest analyses are available in sub-directories (each one corresponding to a specific setting that you may have configured for the random forest analyses) in the project directory; see the corresponding section 7.3 for more details regarding the content of outputs generated by a Random Forest analysis.

It is worth stressing that you need to SAVE a project to avoid losing your work before quitting the GUI. A project zip file will be generated and can be saved. If you want to later continue with a project, or clone/modify an existing project, you can provide the key files of an existing projec in the GUI when starting a project.

Finally, we strongly advised NOT to manually edit project-related files in a text editor, unless you know what you are doing!

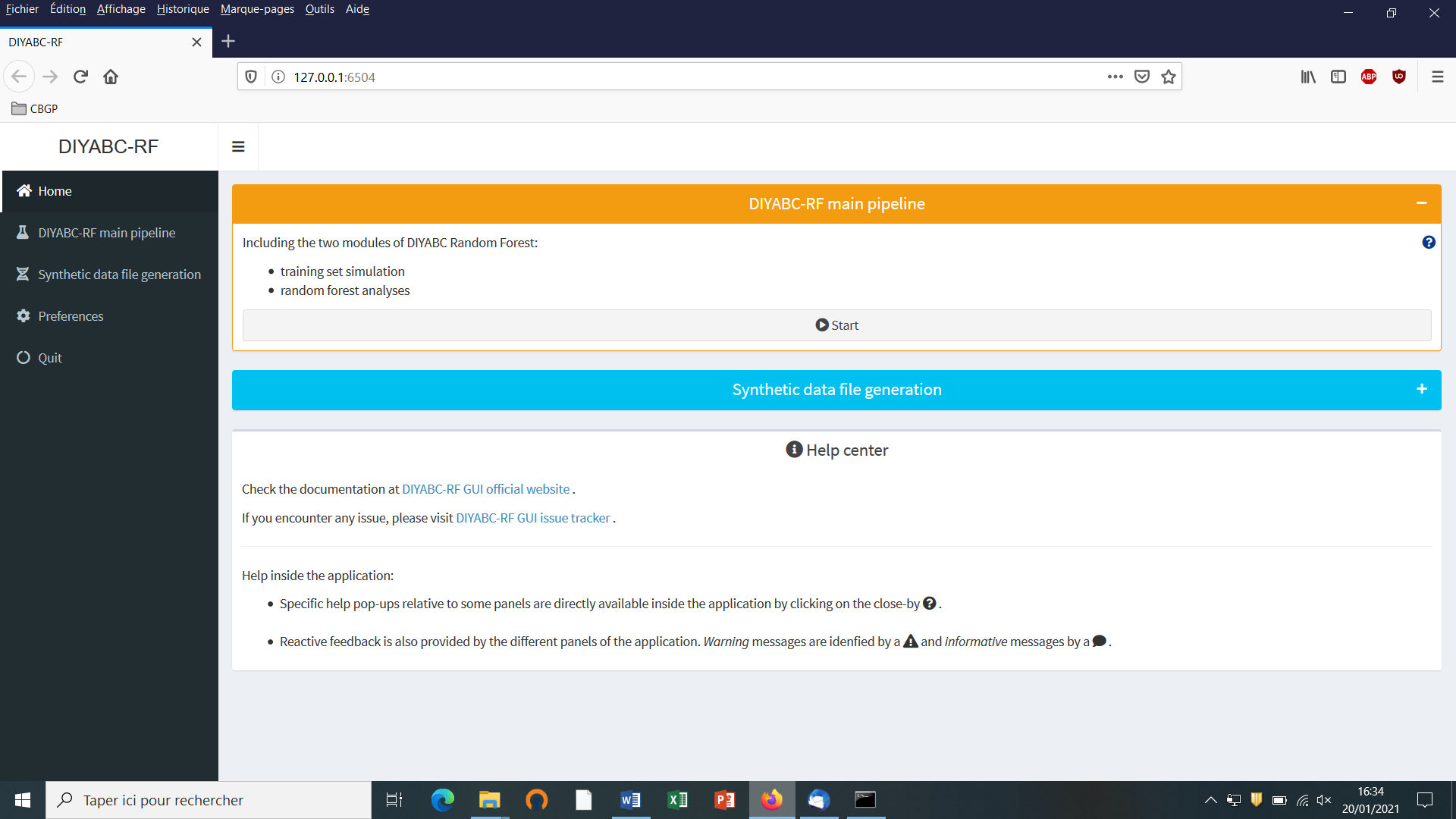

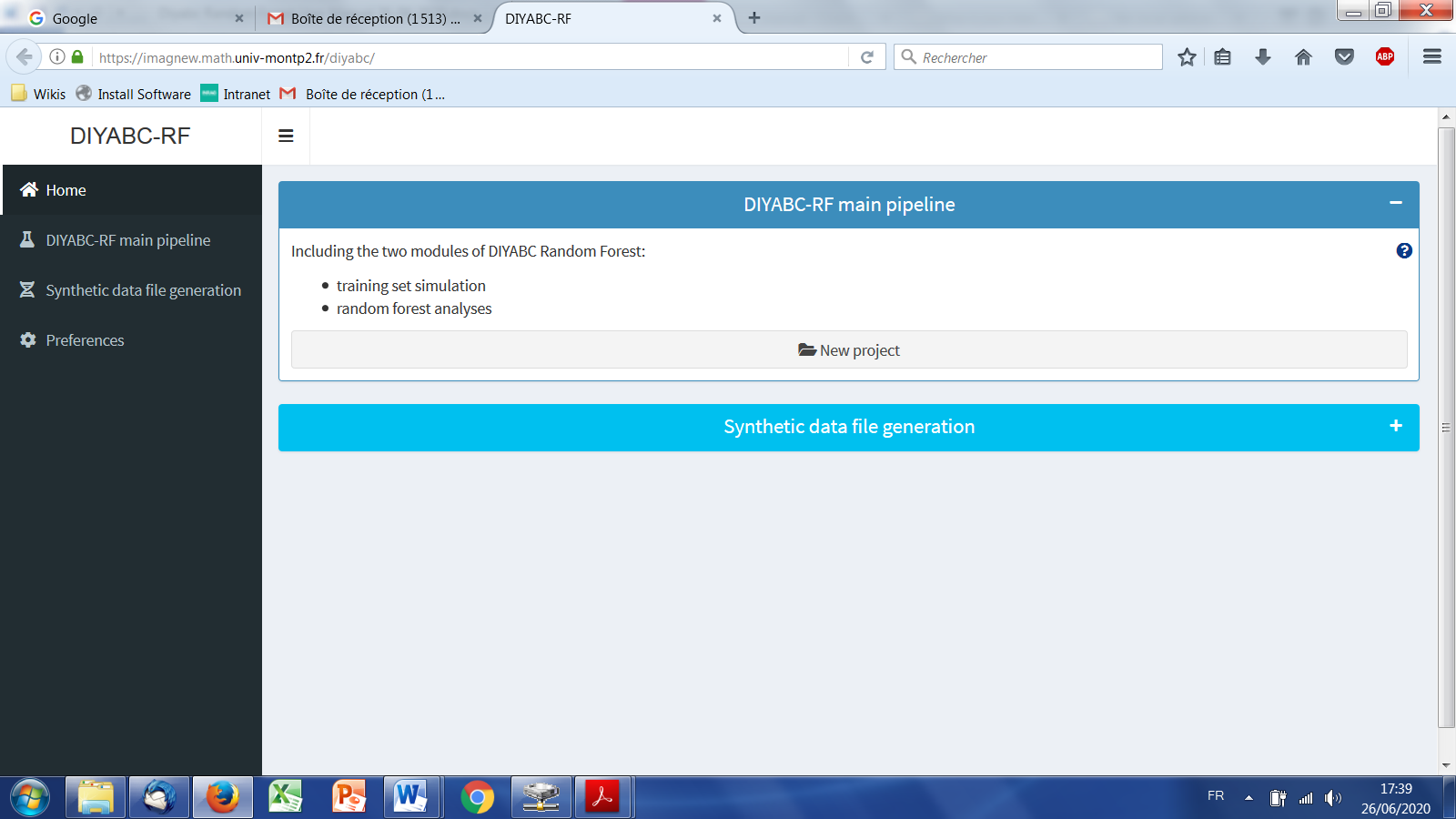

5.3 Main options of the home screen

When launching the GUI, the home screen appears like this:

-

Access to main functionalities: you can access to the two main functionalities of the program (i.e. training set simulation and random forest analyses) in two ways: (i) by clicking on the “Start” button in the middle of the Home panel or (ii) by clicking on the “DIYABC-RF main pipeline” button in the upper-left part of the panel.

-

Access to other functionalities:

-

To access to the panels allowing generating files corresponding to full in silico datasets (also called pseudo-observed datasets) click on the “Synthetic data file generation” button in the middle of the panel or on the “Synthetic data file generation” button in the upper-left part of the panel.

-

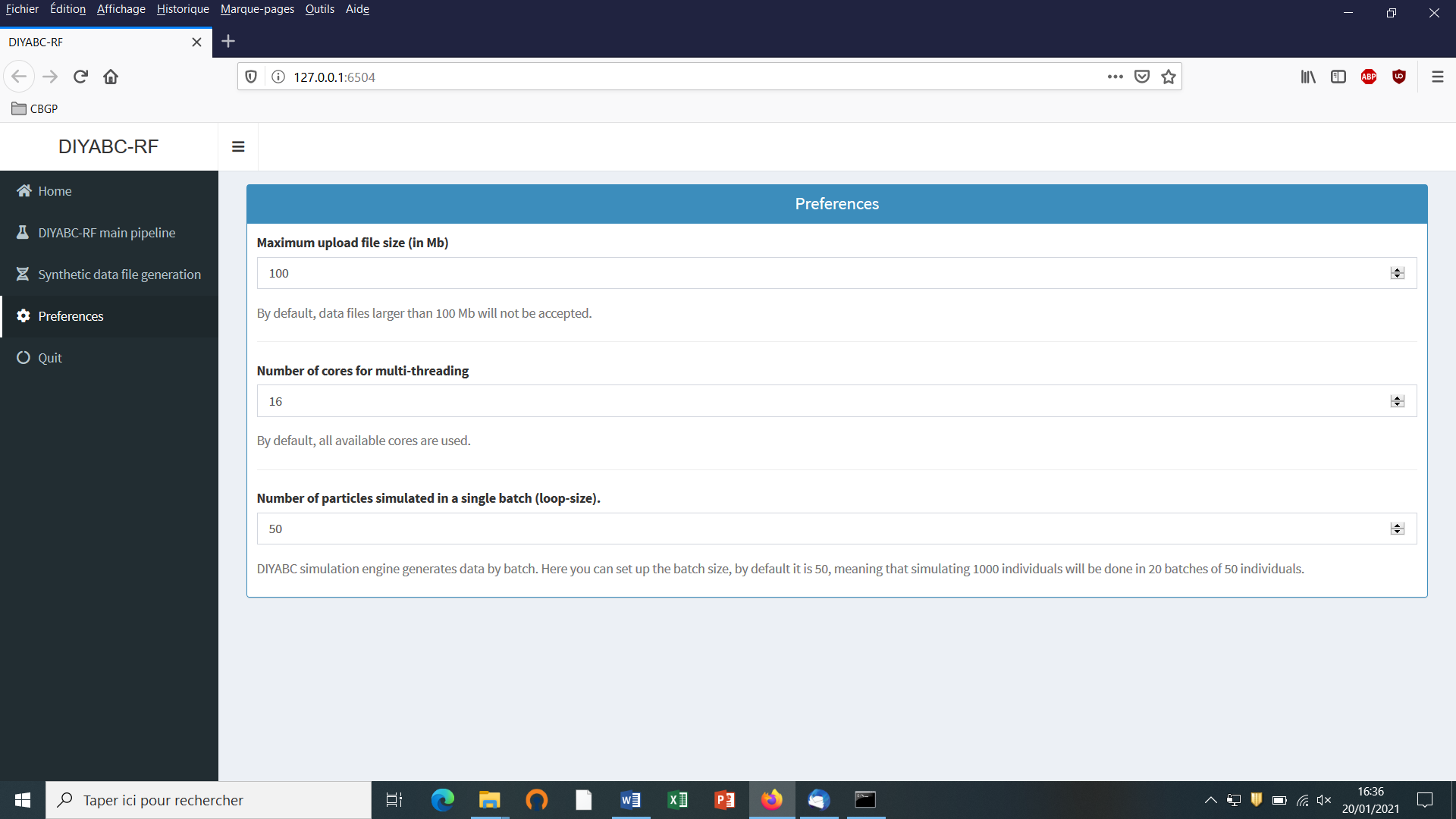

Clicking on the “Preference” button (upper left) gives access to the following screen

The different options proposed are self-meaning. Default values can be changed by the user. Note that the loop-size option corresponds to the number of simulated datasets distributed over all computer threads and stored in the RAM before writing them into the training set file (reftableRF.bin).

5.4 How to generate a new IndSeq SNP training set

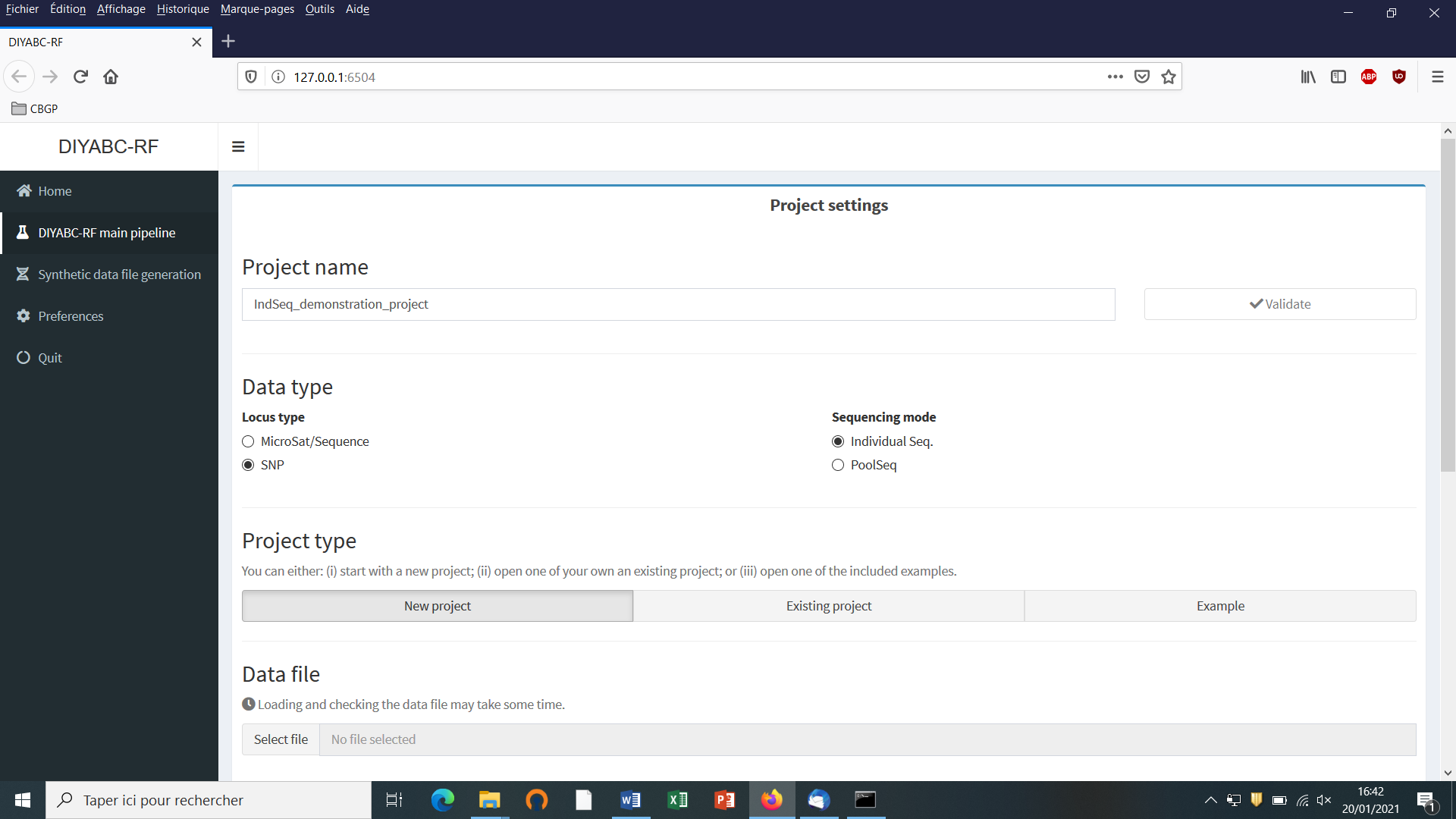

5.4.1 Step 1: defining a new IndSeq project

Defining a new project requires different steps which are not strictly the same whether the data are microsatellites/DNA sequences (MSS) or SNP (IndSeq or PoolSeq). Let’s start with an IndSeq SNP project. Click on the “Start” button in the middle of the Home panel (or on the “DIYABC-RF main pipeline” button). The following panel appears.

-

Enter a project name in the “Project name” window (here IndSeq_demonstration_project). Validate the project name.

-

Select the Data type including the locus type (Microsat/sequence or SNP) and the sequencing mode (for SNP only; IndSeq or PoolSeq). Here select SNP and Individual Seq.

-

Select the “New project” button as we want to implement a new project from scratch.

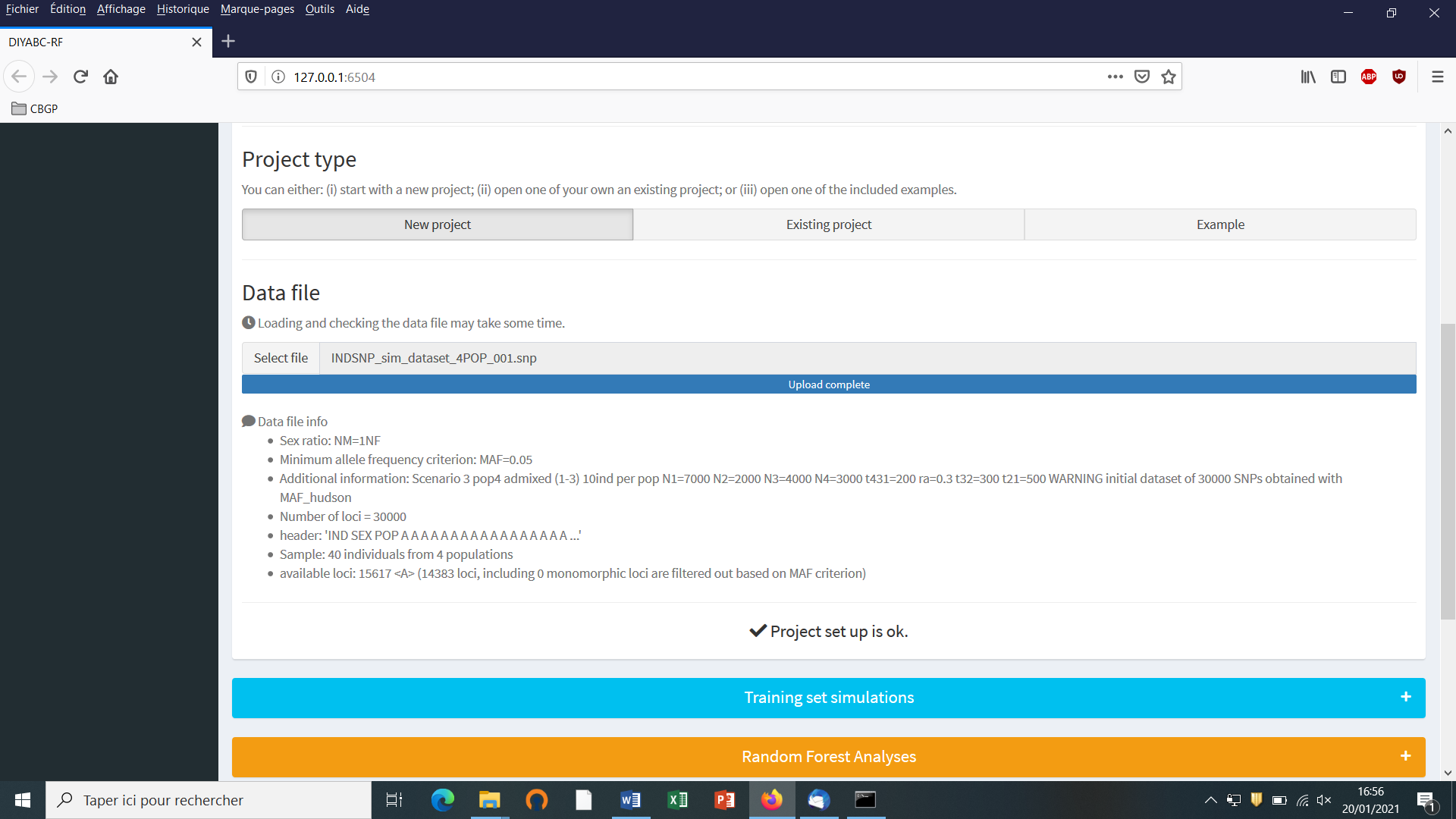

5.4.2 Step 2: choosing the data file

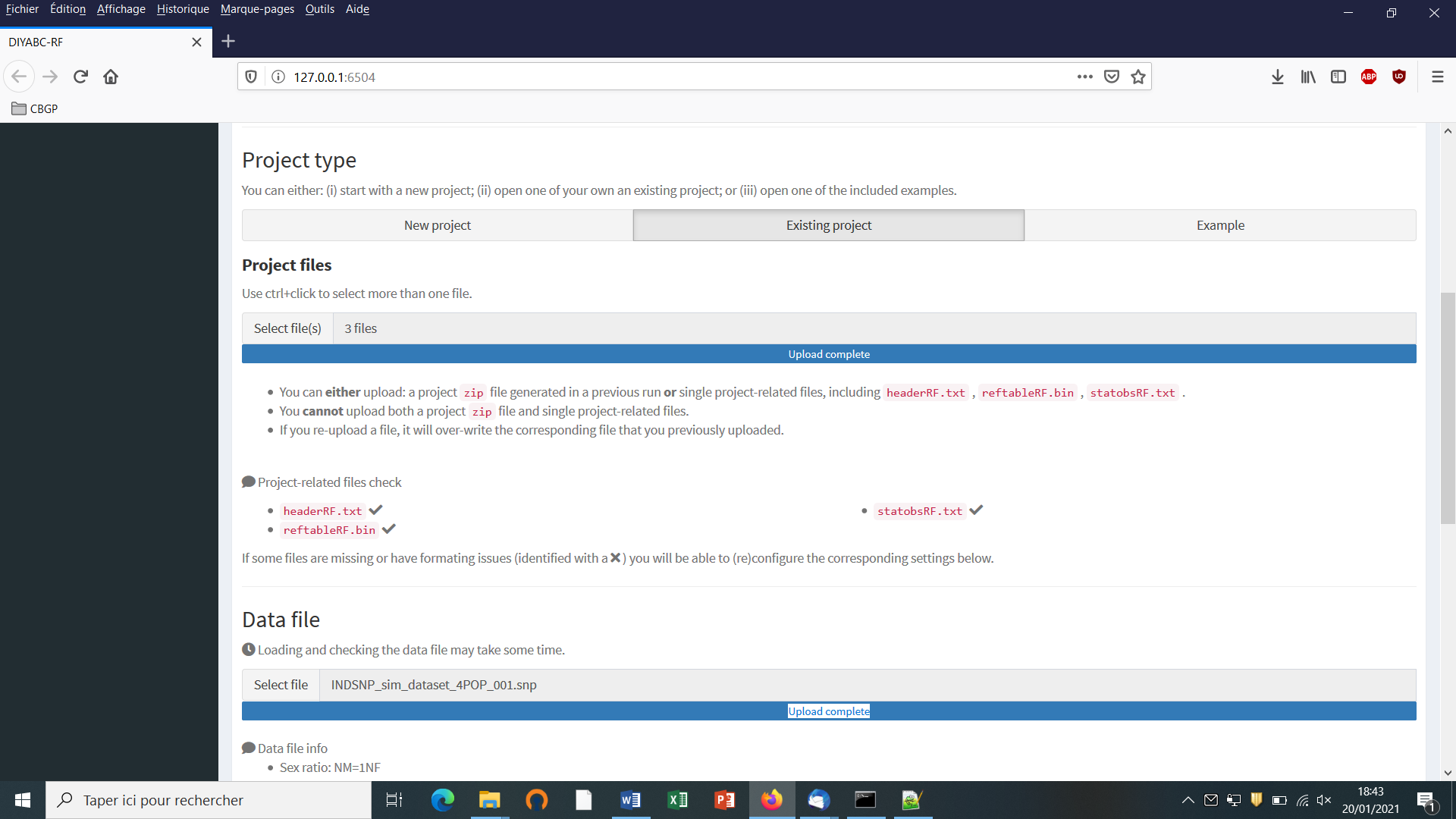

- Choose a data file (an IndSeq format data file in this case) using the “Select file” browser (here INDSNP_sim_dataset_4POP_001.snp). A large size dataset might take some times to be uploaded and checked by the program. A short summary of the specificities of the loaded datafile appears: here the total number of loci (30000), the minimum allele frequency chosen for simulation (MAF=0.05), the sex ratio indicated by the user (NM=1NF), the total number of individuals and populations, the locus type and their corresponding numbers (A= autosomal, M=mitochondrial…; see section 7.1 for details about datafile format), and the number of available loci based on the MAF criterion (here 15617 <A>, as14383 loci, including 0 monomorphic loci, have been filtered out due to a MAF<5% by the program after scanning the proposed observed dataset).

- A “Project set up is ok” message appears at the bottom of the panel if all items have gone correctly. You can then go the next steps by clicking on the large blue color “Training set simulation” “+” button”. The following panel appears.

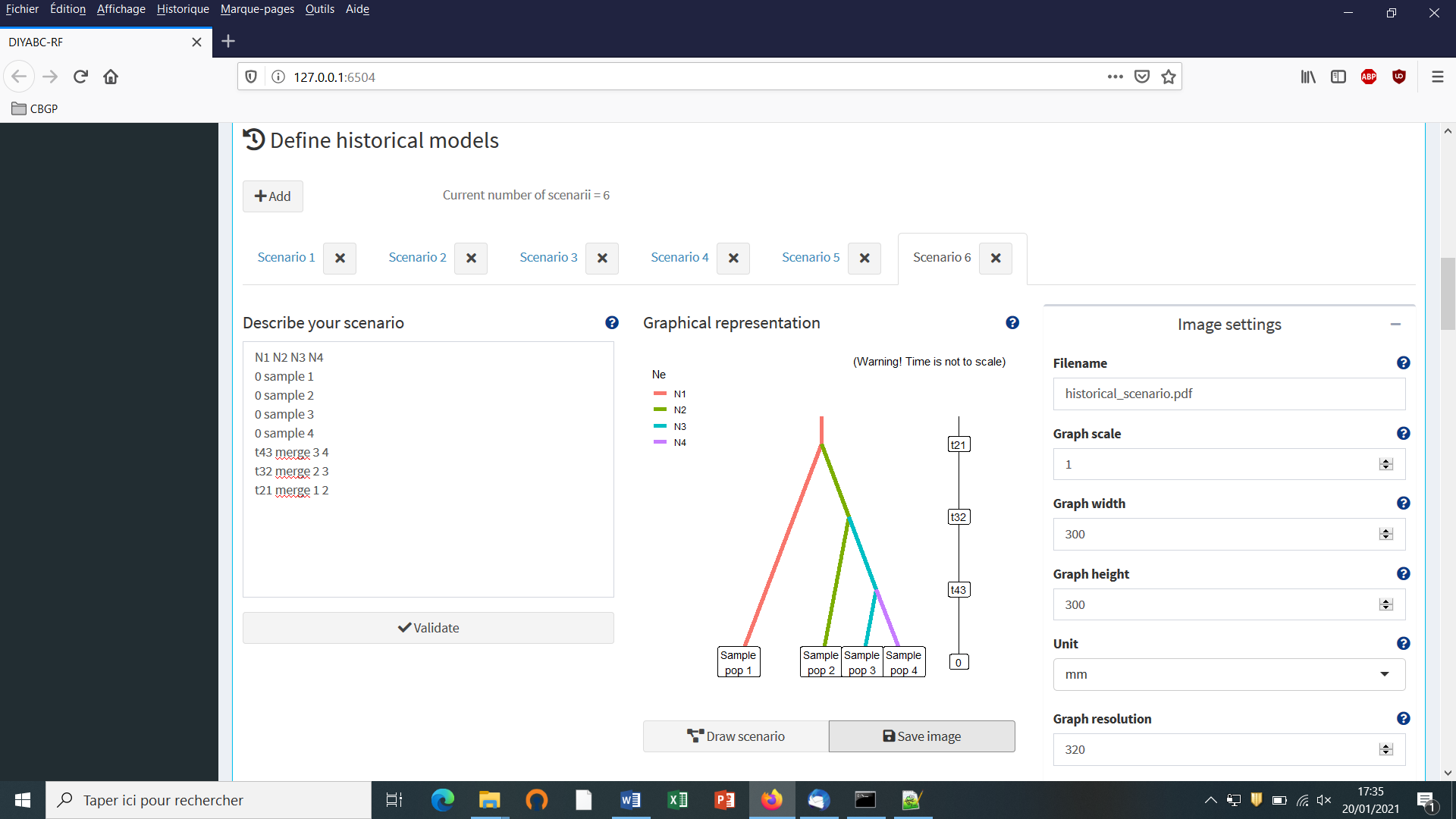

5.4.3 Step 3: Inform the historical model

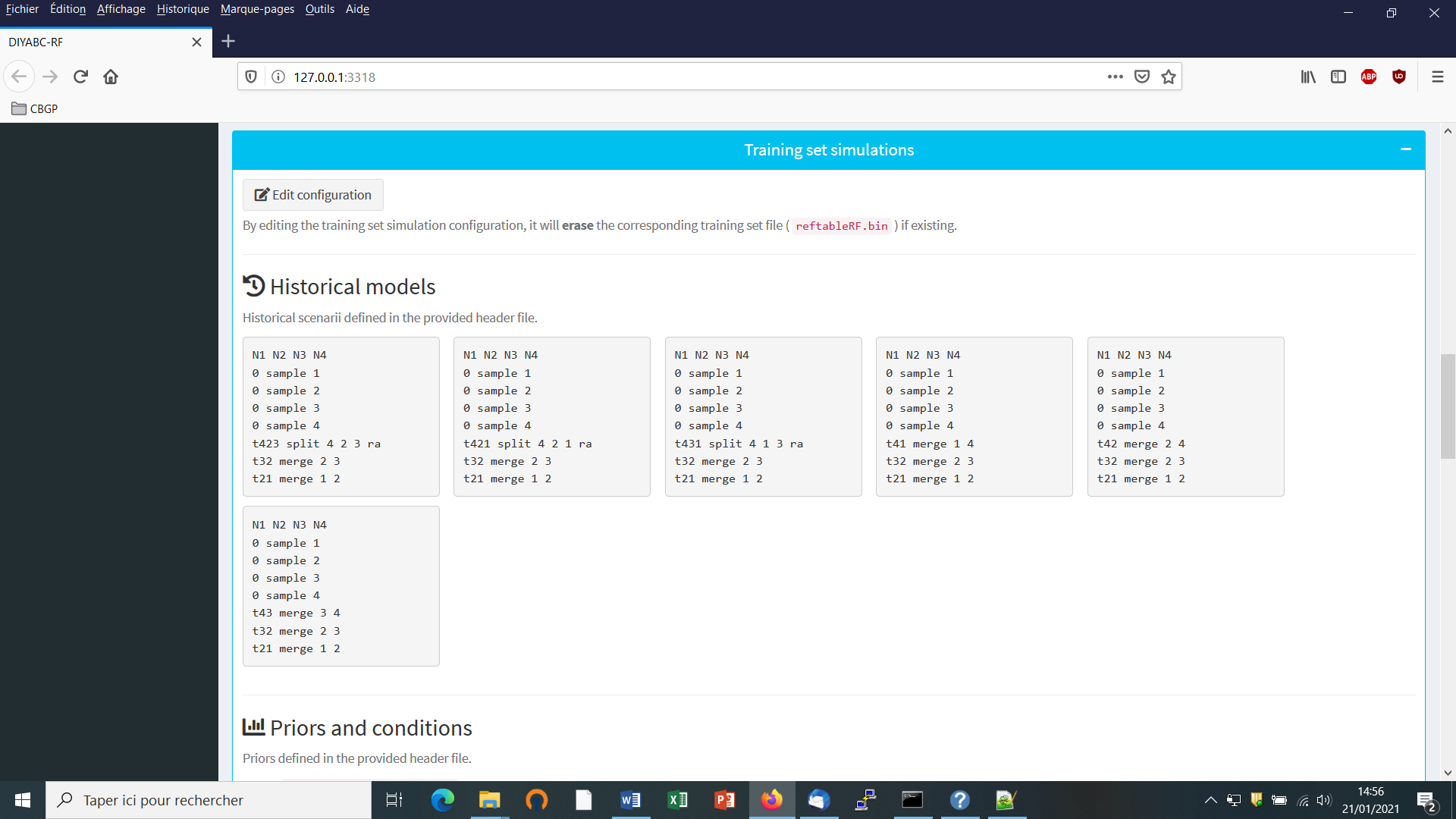

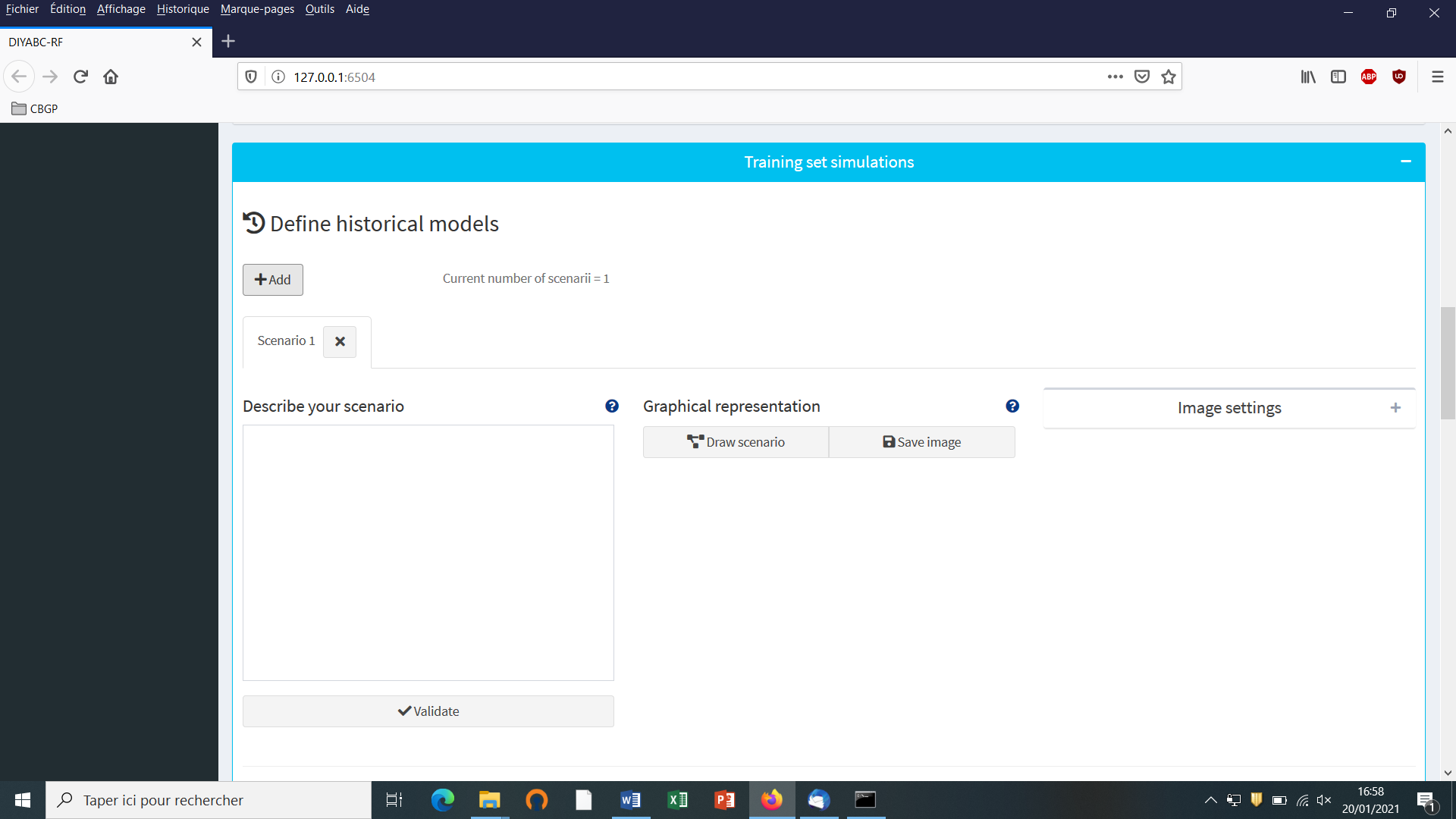

Click on the “Add” button of the “Define historical models” section. The following panel appears:

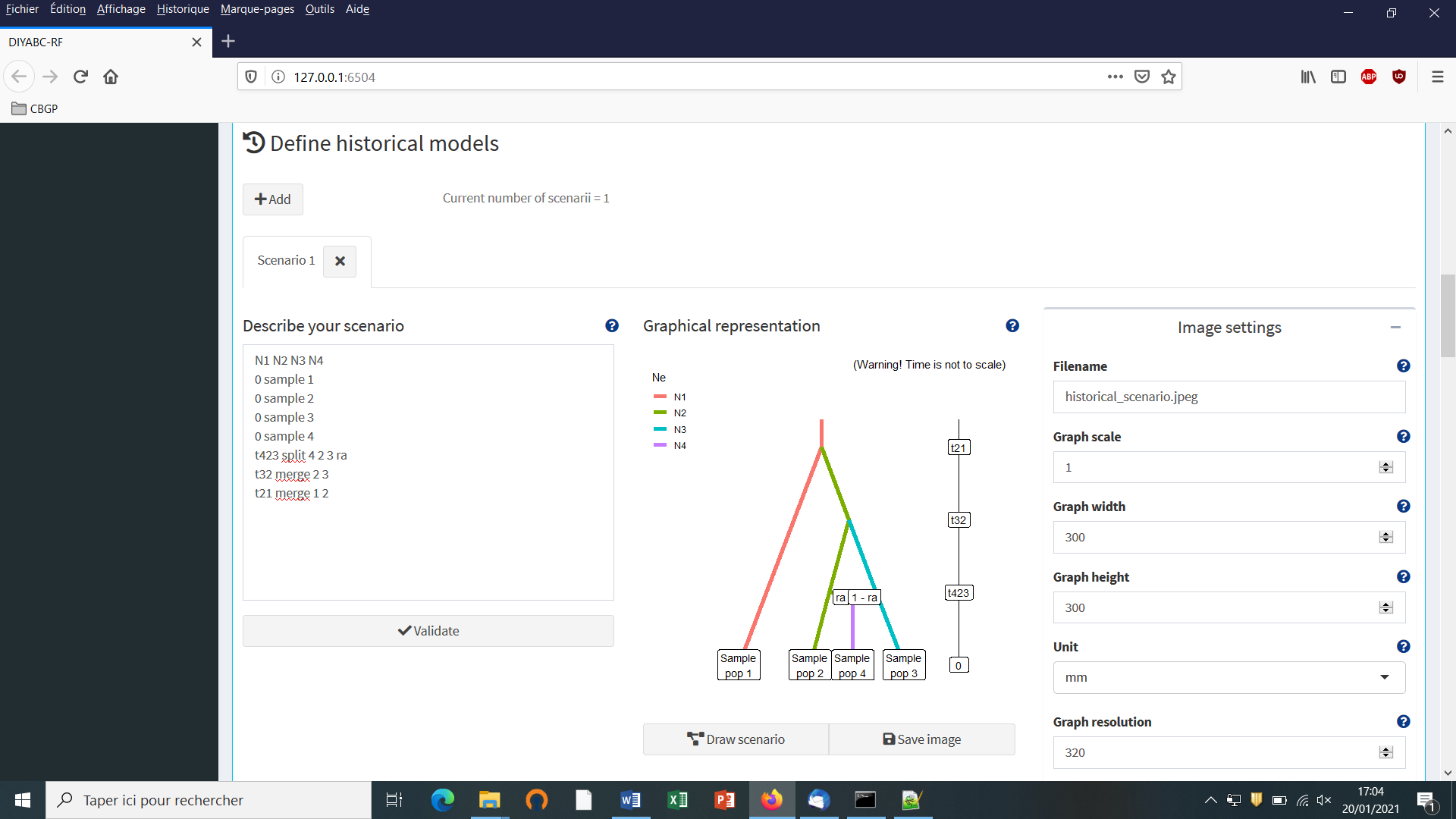

Write the code of a first scenario (scenario 1) in the edit window ”Describe your scenario” and click on the “Validate” button.

- If we click on the “Draw scenario” button, the logic of the scenario is checked and if it is found OK, and if the scenario is draw-able, a graphical representation of the scenario appears. You can save it by clicking on the “Save image” button. Figures are saved in ‘fig’ sub-folder in the project directory. Possible extensions are: ‘eps’, ‘ps’, ‘tex’, ‘pdf’, ‘jpeg’, ‘tiff’, ‘png’, ‘bmp’, ‘svg’.

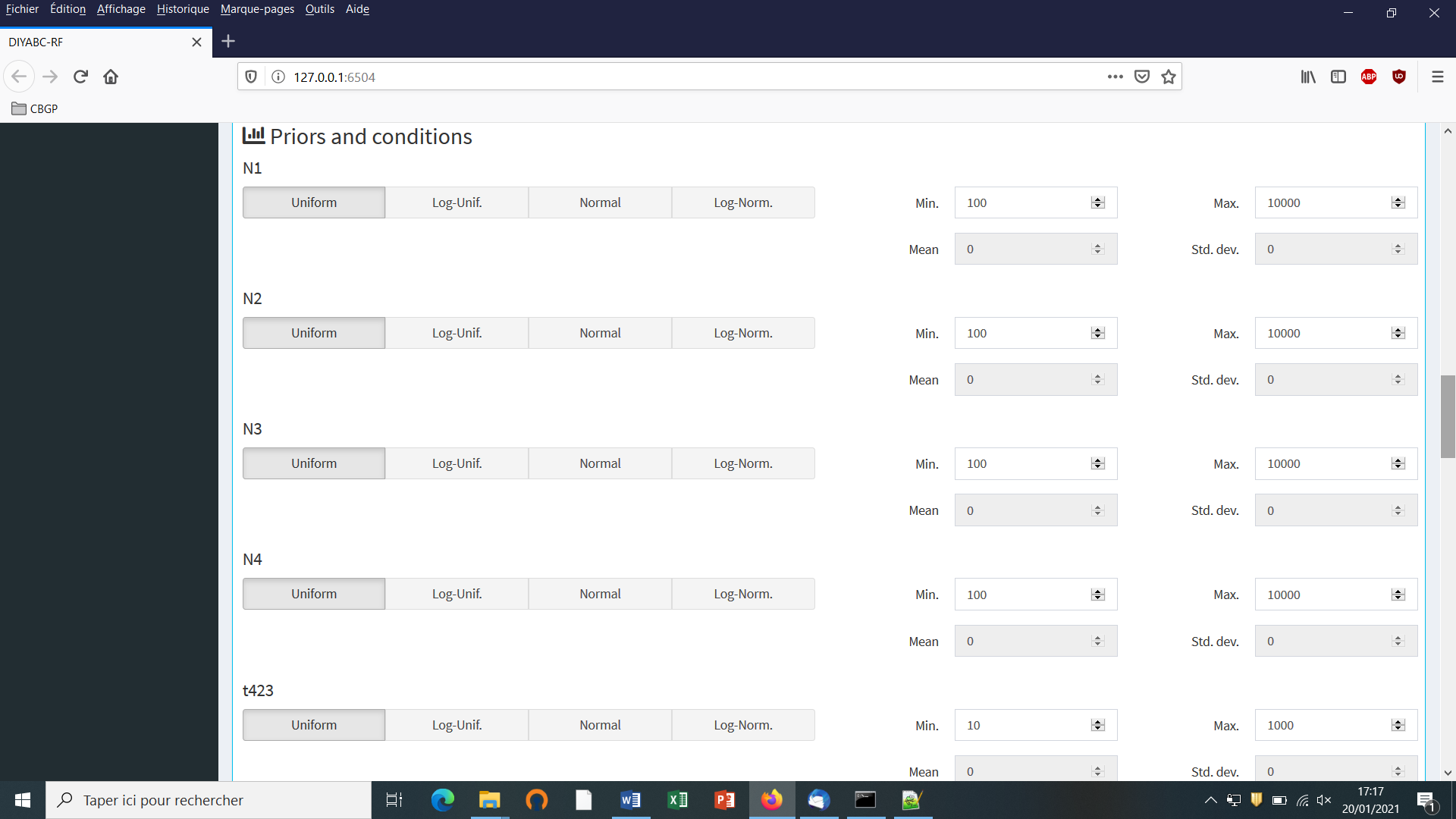

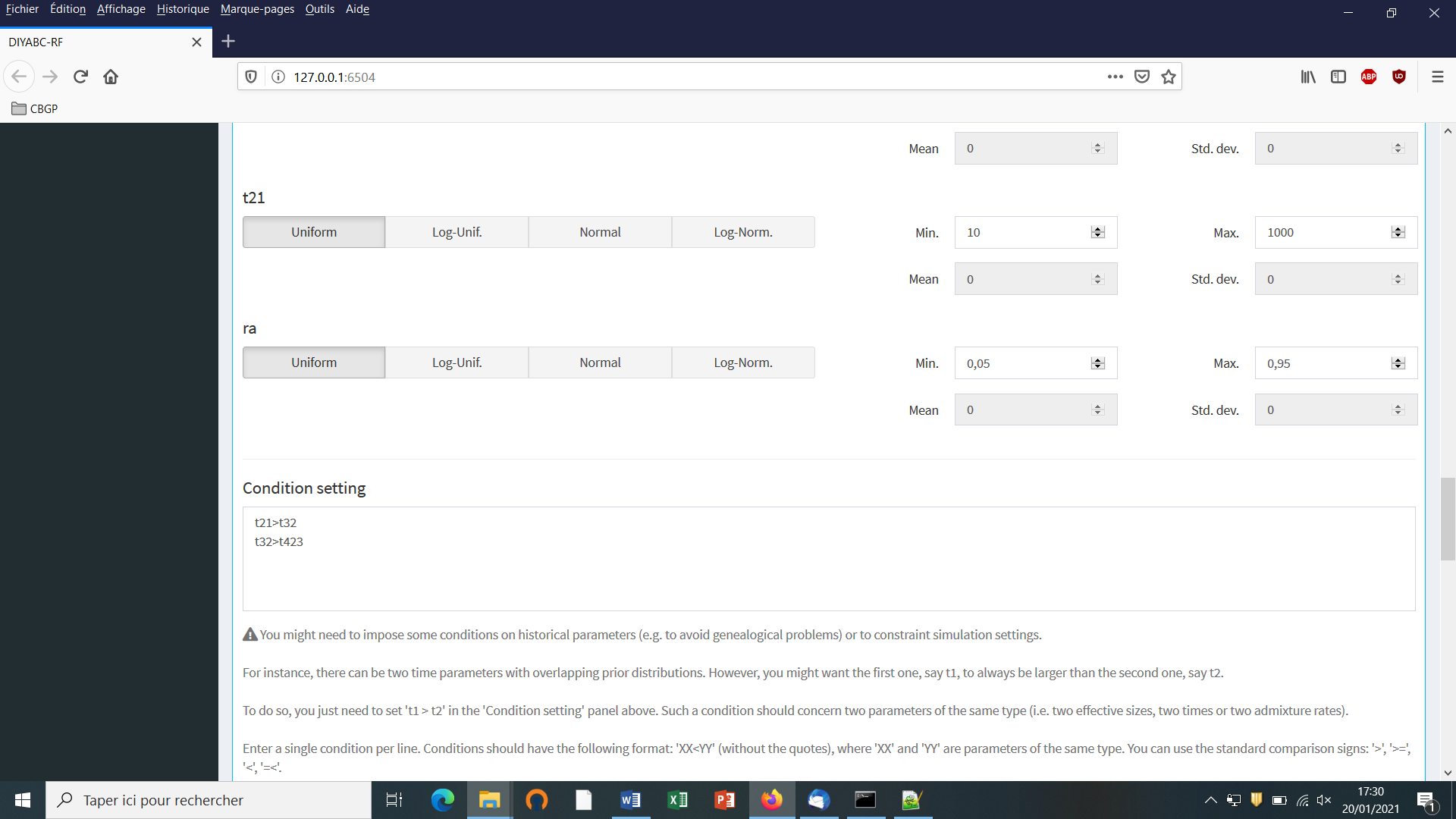

- The “Priors and conditions” frame which appears below allows

choosing the prior distribution of each parameter of the scenario. A

parameter is anything in the scenario that is not a keyword (here

sample,merge and split), nor a numeric value. In our example scenario, parameters are hence:N1, N2, N3, N4, ra, t21, t32andt423. We choose a uniform distribution and change the min and max values (100 and 10000 for N values - 10 and 1000 for t values; see panel below).

- In our example, we also need to specify conditions for the priors on

t21, t32 and t432such thatt21>t32 and t32>t423.

To do this we write t21>t32 and t32>t432 on two successive lines in the “Condition setting” frame. As noted in the panel, conditions should have the following format: XX<YY. where ‘XX’ and ‘YY’ are parameters of the same type. You can use the standard comparison signs: ‘>’, ‘>=’, ‘<’, ‘=<’. It is worth stressing that the omission of such conditional constraints on merge times (cf. a population needs to exist in the past to allow coalescence events in it) is one of the most frequent implementation error made by DIYABC-RF users. If forgotten a message “Error in simulation process: check your scenarios, priors and conditions” appears. Note that the occurrence of a too large number of time conditional constraints within a scenario may substantially slow down simulations as a valid t parameter vector will be retain and run only once all conditions are fulfilled.

- We then can add some other scenarios in additional windows by clicking on the button “Add” (one or several times if one wants to add a single or several other scenarios). In the present example, we simulate datasets from six different scenarios that we want to compare (see Collin et al. 2020 example based on pseudo-observed datasets for details); hence the six scenario windows that we have completed with instructions for each one. For the last sixth scenario the panel looks like this:

You need to complete time conditions for the six scenarios by writing t21>t32, t42<t21, t43<t32, t32>t423, t431<t32 and t421<t21 on successive lines in the “Condition setting” frame.

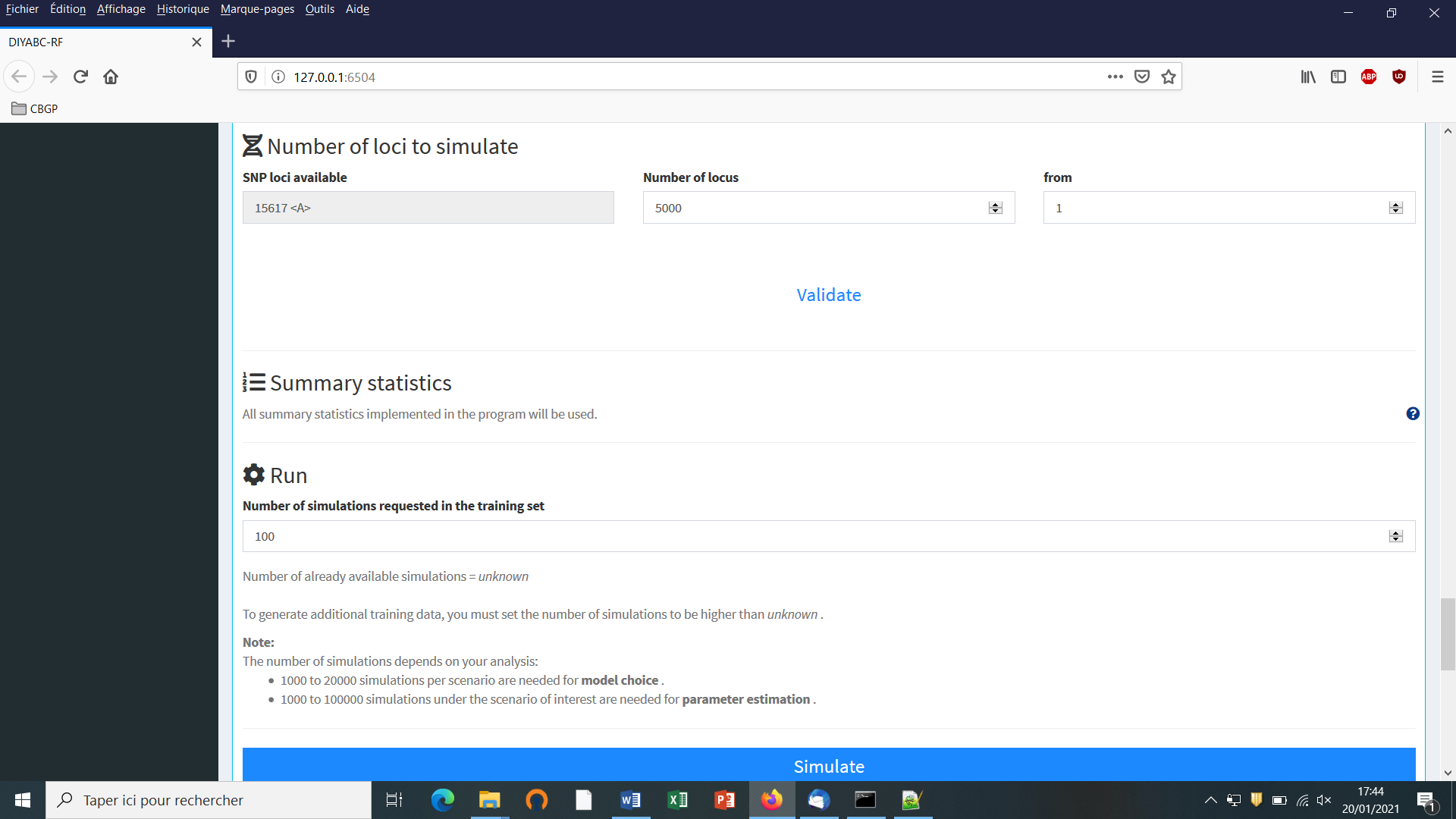

5.4.4 Step 4: Inform number of loci to simulate (and chromosome type)

Choose the number of SNP locus that you want to simulate for each chromosome type define in the data file (A for autosomal diploid loci, H for autosomal haploid loci, X for X-linked or haplo-diploid loci, Y for Y-linked loci and M for mitochondrial loci; see section 7.1.1). Here we have only type <A> SNP loci. According to our own experience, analyzing (evolutionary neutral) scenarios using 5000 to 20000 SNP loci is sufficient to obtain robust results. In this example, we choose to consider simulations based on a subset of 5000 SNP loci (with <MAF=0.05> as indicated previously in the headline of the observed dataset file) taken in order from the first SNP locus of the data file (users may want to change this stating point), by replacing 30000 by 5000 in the corresponding frame. Click on the button “Validate”.

It is worth stressing that choosing a subset of 5000 SNP loci taken from the SNP locus 5001 of the data file would lead to a training set generated from a set of 5000 loci completely independent from the previous one. Generating by this way different training sets is a way to process independent analyses of a given dataset (a sufficiently large number of SNP loci available in the observed dataset is obviously needed to do that).

5.4.5 Step 5: Summary statistics

In the present version of the program, all available summary statistics are computed as mentioned in the interface below the “Summary statistics” item (see previous panel-copy) and as detailed when clicking on the button “?” on the right:

For both IndSeq and PoolSeq SNP loci, the following set of summary statistics has been implemented.

1. Proportion of monomorphic loci for each population, as well as for each pair and triplet of populations (ML1p, ML2p, ML3p)

Mean and variance (over loci) values are computed for all subsequent summary statistics.

2. Heterozygosity for each population (HW) and for each pair of populations (HB)

3. FST-related statistics for each population (FST1), for each pair (FST2), triplet (FST3), quadruplet (FST4) and overall (FSTG) populations (when the dataset includes more than four populations)

4. Patterson’s f-statistics for each triplet (f3-statistics; F3) and quadruplet (f4-statistics; F4) of populations

5. Nei’s distance (NEI) for each pair of populations

6. Maximum likelihood coefficient of admixture (AML) computed for each triplet of populations.

Note the short code name for each type of summary statistics (MLP1p, HW, FST2, F3,…). Such code names of statistics (plus the suffix m and v for mean and variance over loci, respectively; see section 2.6.3) will be used in most files produced by the program, including the key files headerRF.txt, reftableRF.bin and statobsRF.txt.

5.4.6 Step 6: Simulate the training set

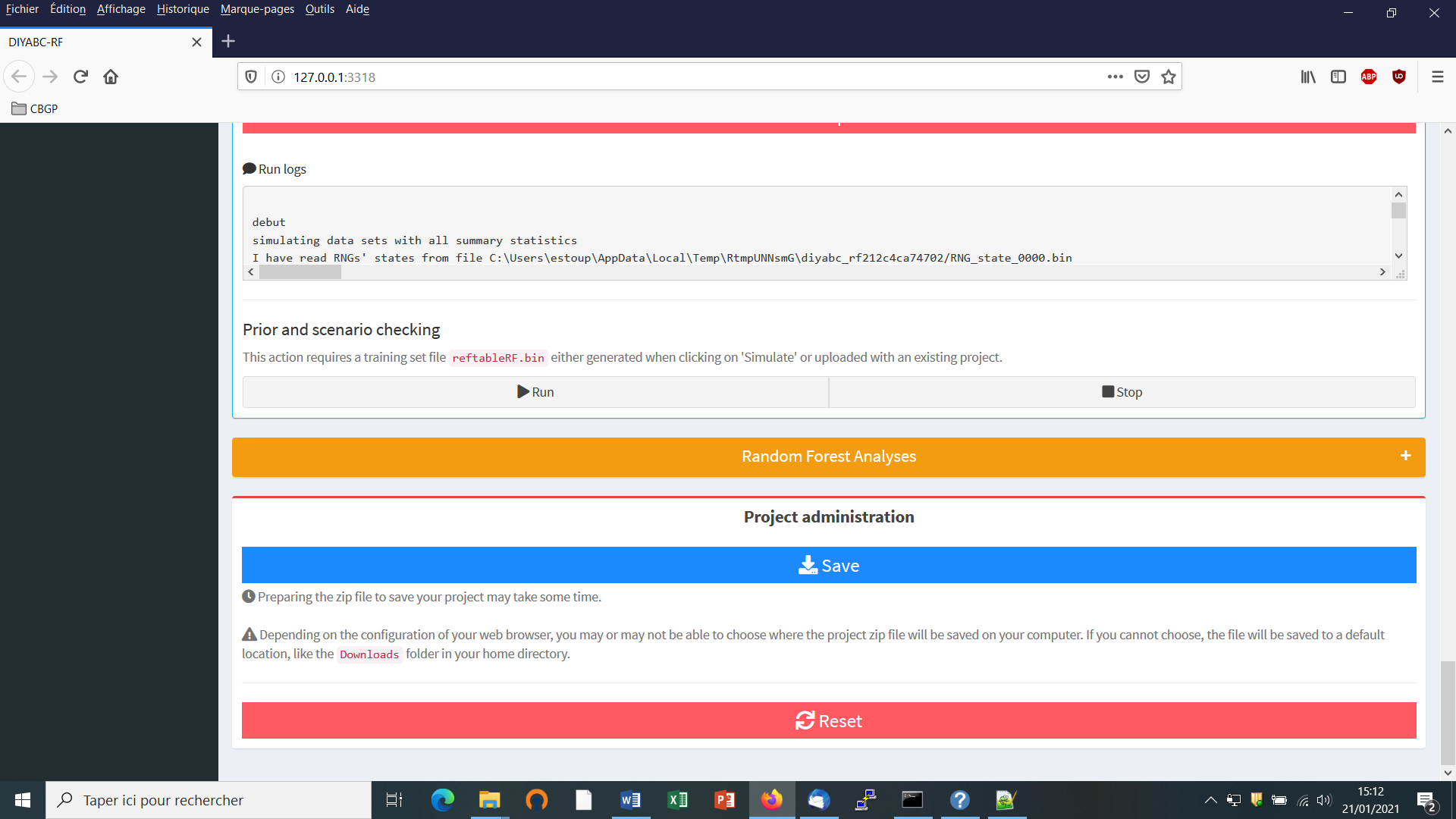

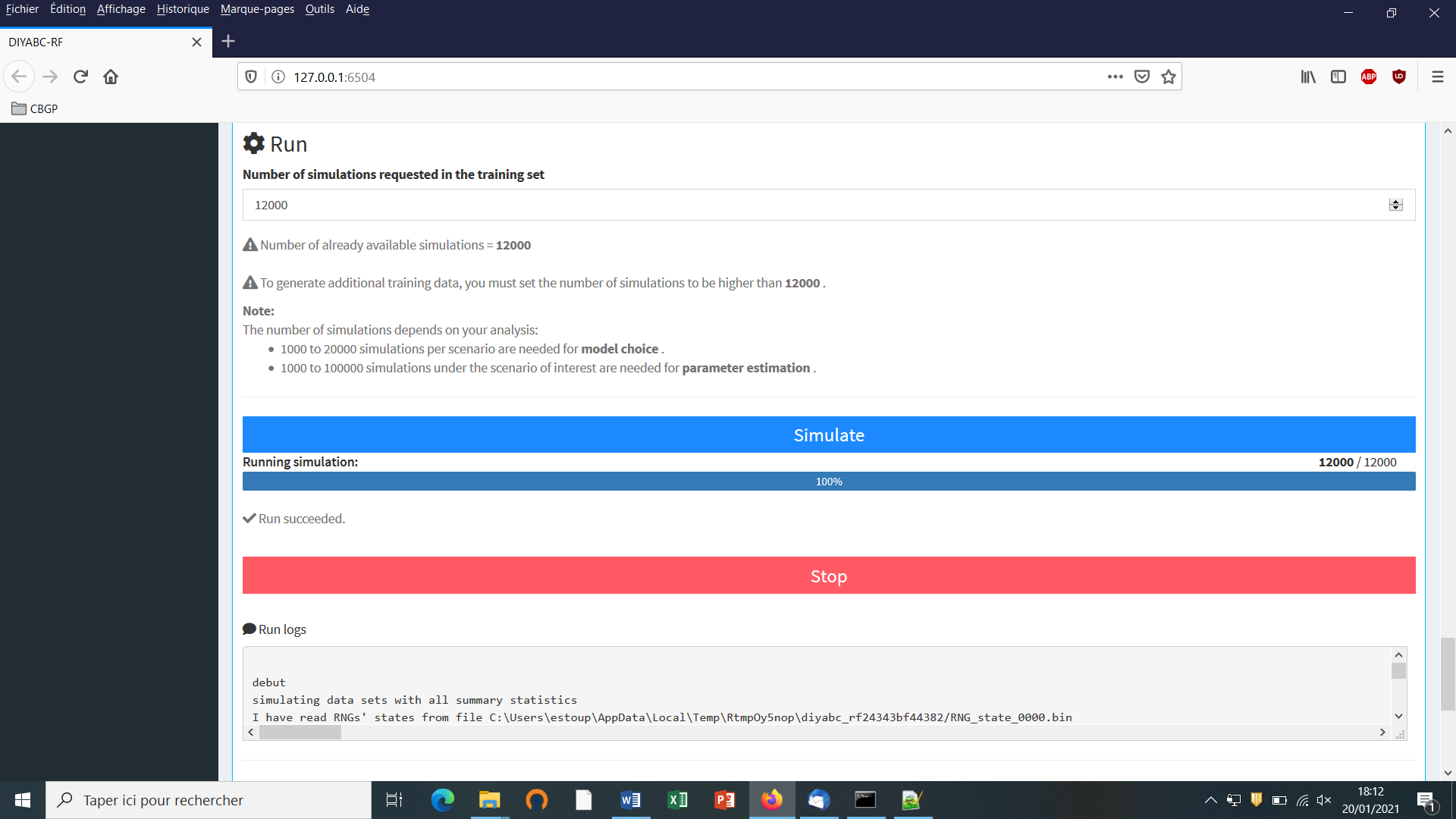

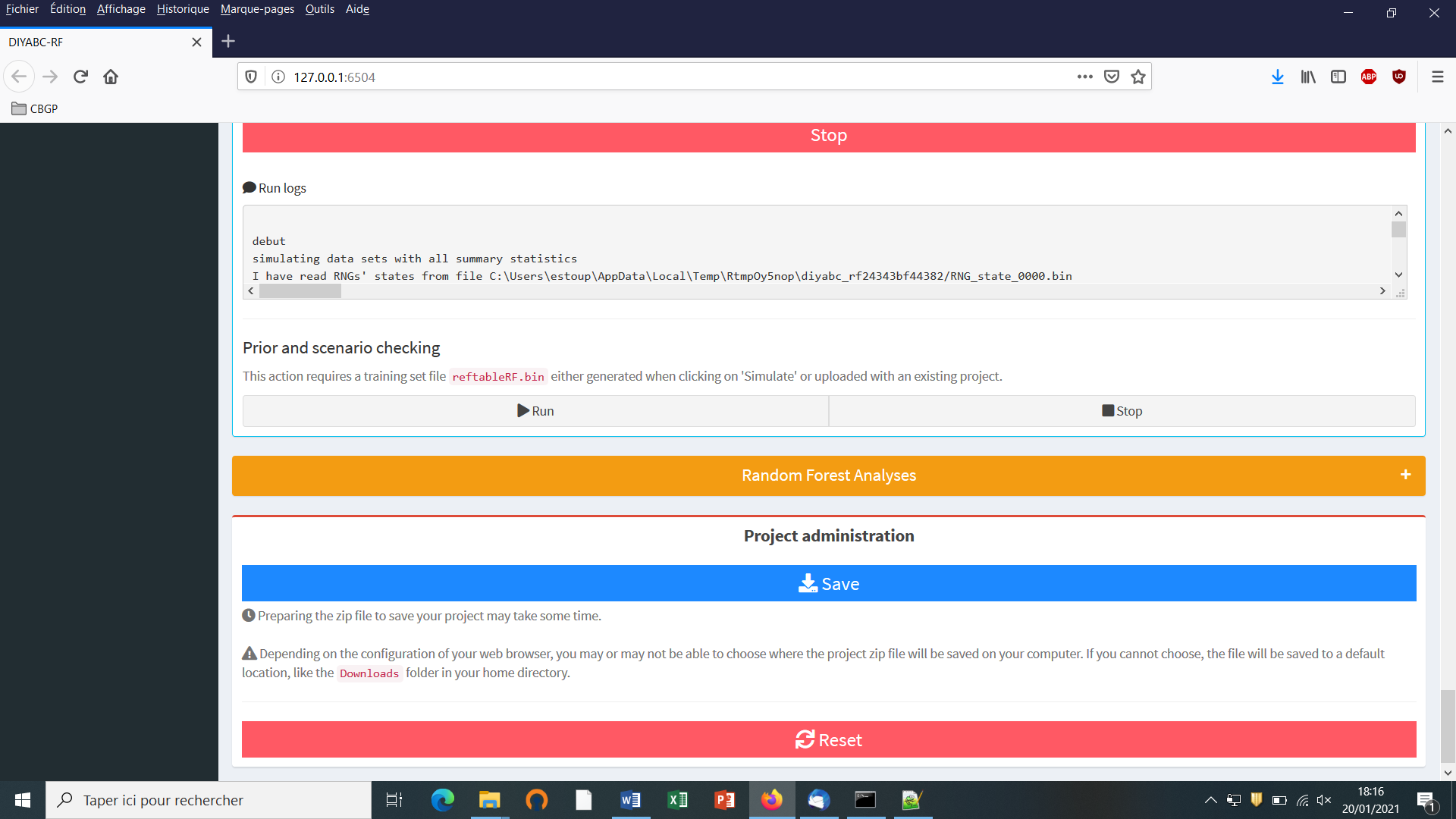

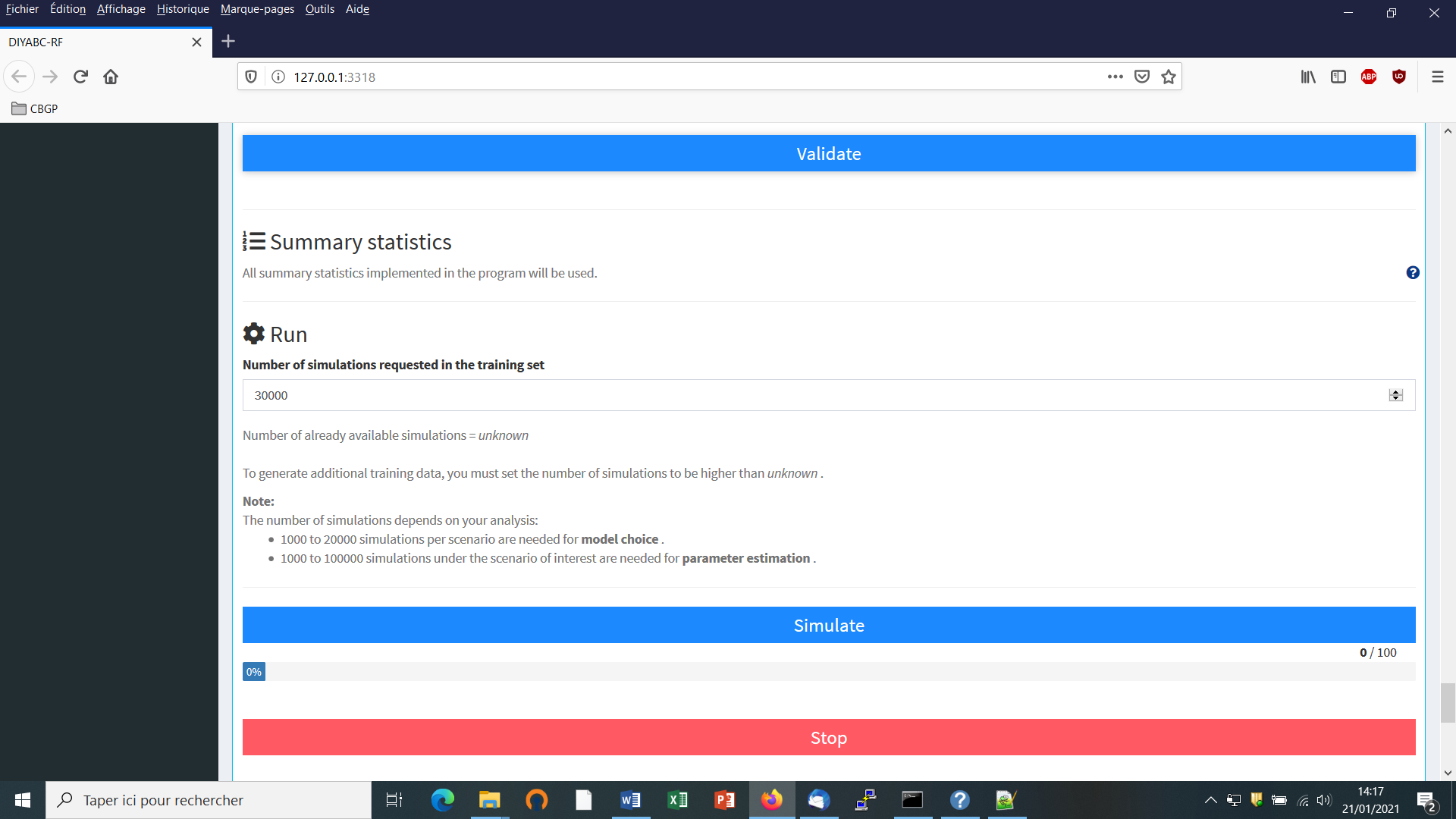

You can access the “training set simulation” module by clicking on the corresponding + button on the far right of the box blue title bar “Training set simulation”. The following “Run” panel appears.

-

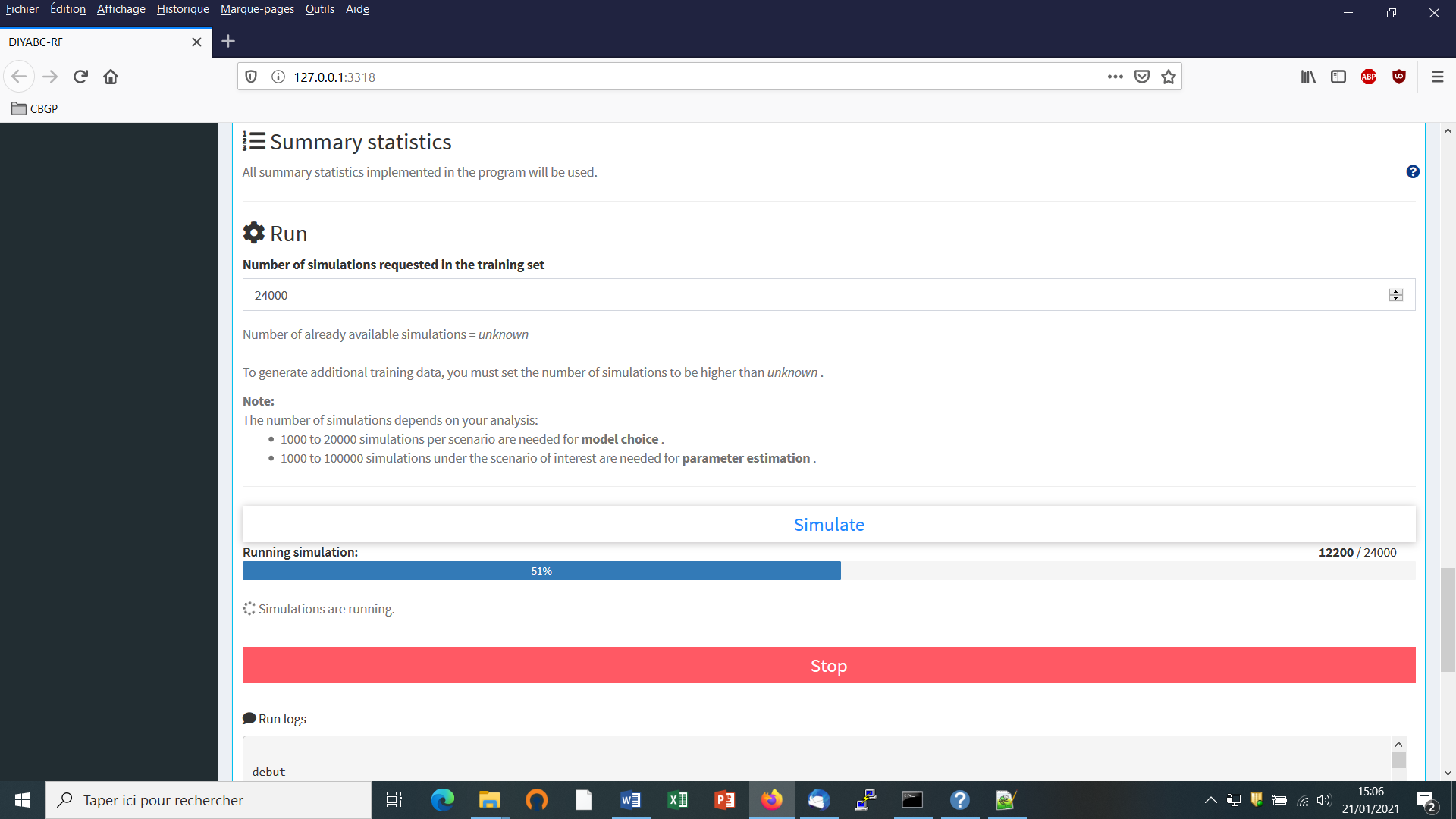

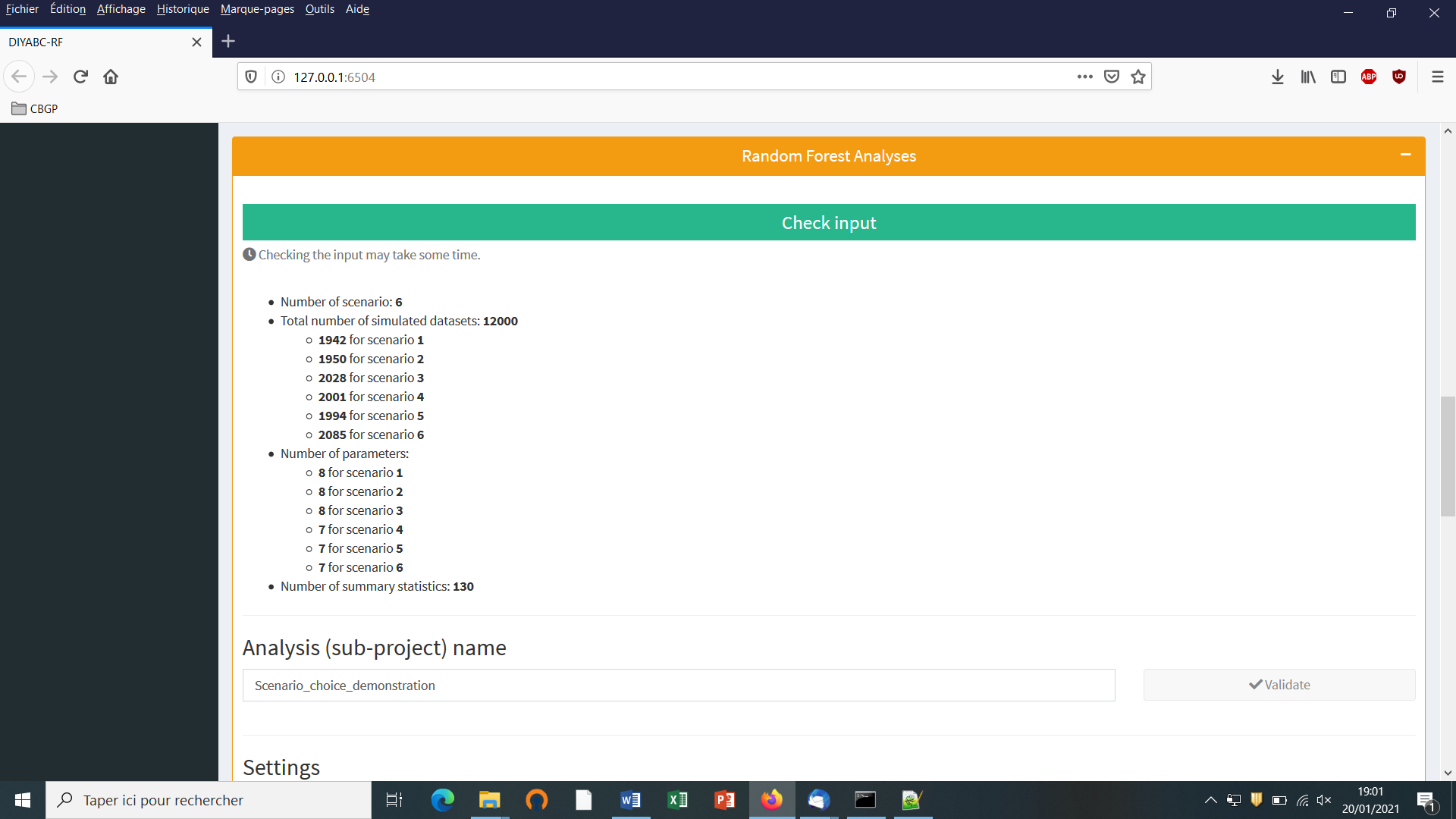

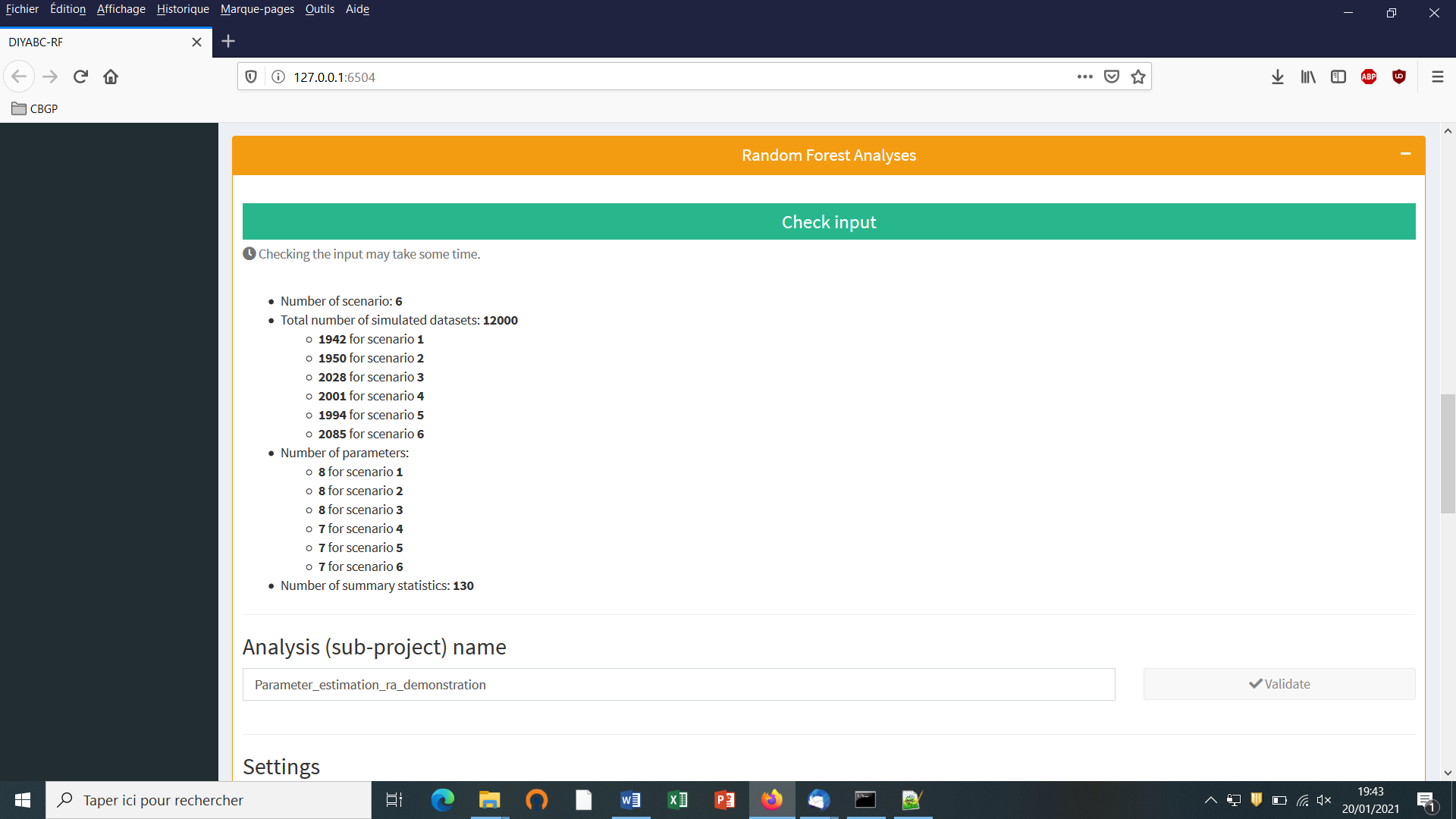

Indicate the required number of datasets to simulate in the training set: we here change the default value of 100 into 12000 (in order to simulate 2000 datasets for each of the six scenarios)

-

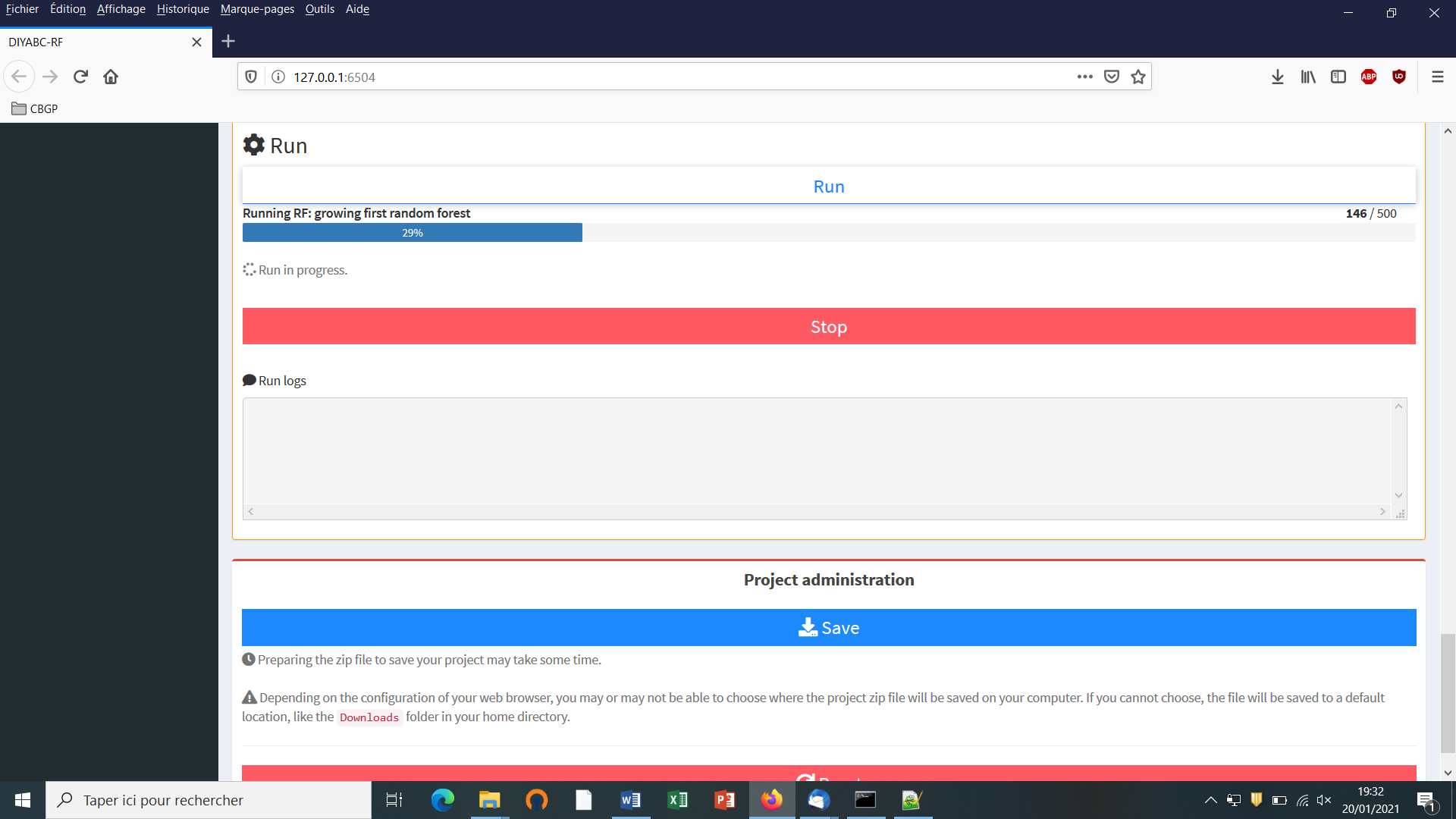

Launch the generation of the simulated datasets of the training set by clicking on the large blue color button “Simulate”. The number of datasets already simulated is given in the “Running simulation” progress bar below.

-

You can stop the generation of simulated datasets by clicking on the “STOP” button. Both a “Run succeeded” message appears when all requested simulations have been processed.

-

Do not forget to click on the “Save” button in the “Project administration” lower panel (see panel below) to implement and save a concatenated zip file including various input and output files (see section 7 for a description of the content of some of those files) on your computer. The name of the implemented/saved concatenated .zip file is the one given at the start in the “Project name” window (here IndSeq_demonstration_project).

- Warning: The “Reset” button at the very bottom part of the “Project administration” panel restart the pipeline from scratch removing all configurations previously set up and all data and result files generated.

5.4.7 Step 7:Prior-scenario checking PRE-ANALYSIS (optional but recommended)

Before performing a full ABC-RF analysis on a large size training set, we advise using the prior-scenario checking option on a training set of small size (e.g. including 500 to 1000 simulated dataset per scenarios). As a matter of fact, this pre-analysis option is a convenient way to reveal potential mis-specification of models (scenarios) and/or prior distributions of parameters (and correct it). This action requires a training set file (reftableRF.bin) and a statobsRF.txt file either generated when clicking on the “Simulate” button or uploaded with an existing project. When clicking on the “Run” button of the “Prior and scenario checking” option (see previous panel copy) the program will generate two specific outputs:

-

A Principal Component Analysis (PCA) representing on 2-axes plans the simulated dataset from the training set and the observed dataset as graphical output. Note that this figure is somewhat similar in its spirit to the graphical output LDA_training_set_and_obs_dataset.png obtained when running the scenario choice option in “Random Forest Analyses”. The latter output is different, however, as it corresponds to the projection of the datasets from the training set linear discriminant analysis (LDA) axes (see section 7.3 for details).

-

A numerical output file, named pcaloc1_locate.txt, which includes for each summary statistics and for each scenario, the proportion of simulated data (considering the total training set) that have a summary statistics value below the value of the observed dataset. A star indicates proportions lower than 5% or greater than 95% (two stars, <1% or >1%; three stars, <0.1% or >0.1%). The presence of such star symbols is a sign of substantial mismatch between the observed dataset and the simulated datasets. The latter numerical results can help users to reformulating the compared scenarios and/or the associated prior distributions in order to achieve some compatibility (see e.g. Cornuet et al. 2010). If only a few stars are observed at a few summary statistics for one or several scenarios, one can conclude that prior-scenario conditions are suitable enough to further process Random Forest analysis, and this potentially on a training set including more simulated datasets.

5.5 How to generate a new PoolSeq SNP training set

Follow the same steps described in section 5.4 for IndSeq SNPs.

- Step 1: Defining a new PoolSeq SNP project see section 5.4.1

Click on the “Start” button of the home screen. Select SNP as project type and PoolSeq as sequencing mode

-

Step 2: Choosing the data file see section 5.4.2 and select a dataset file characterized by a PoolSeq format (format detailed in section 7.1.2).

-

Step 3: Inform the Historical model see section 5.4.3

-

Step 4: Inform chromosome type and number of loci see section 5.4.4

Note: The panel for PoolSeq SNPs is simpler than for IndSeq SNPs because PoolSeq SNPs are considered as located on autosomal chromosomes only.

-

Step 5: Summary statistics: see section 5.4.5

-

Step 6: Simulate the training set see section 5.4.6

-

Step 7 (optional but recommended): Prior-scenario checking PRE-ANALYSIS see section 5.4.7

5.6 How to generate a new microsatellite (and/or DNA sequence) training set

Follow the same steps described in section 5.4 for IndSeq SNPs.

- Step 1: Defining a new or microsatellites/DNA sequences project see section 5.4.1

Click on the “Startt” button of the home screen. Select Microsat/Sequences as project type (not any sequencing mode is needed)

-

Step 2: Choosing the data file see section 5.4.2 and select a dataset file characterized by a Microsatellite and/or DNA Sequences format (format detailed in section 7.1.3).

-

Step 3: Inform the Historical model see section 5.4.3

-

Step 4: The panel “Inform chromosome type and number of loci” is replaced by the panel “Inform the genetic model” see details below in section 5.6.1

-

Step 5: Summary statistics: different sets of summary statistics are computed as compared to SNP summary statistics see details below in section 5.6.2

-

Step 6: Simulate the training set see section 5.4.6

-

Step 7 (optional but recommended): Prior-scenario checking PRE-ANALYSIS see section 5.4.7

5.6.1 Step 4: Inform the genetic model

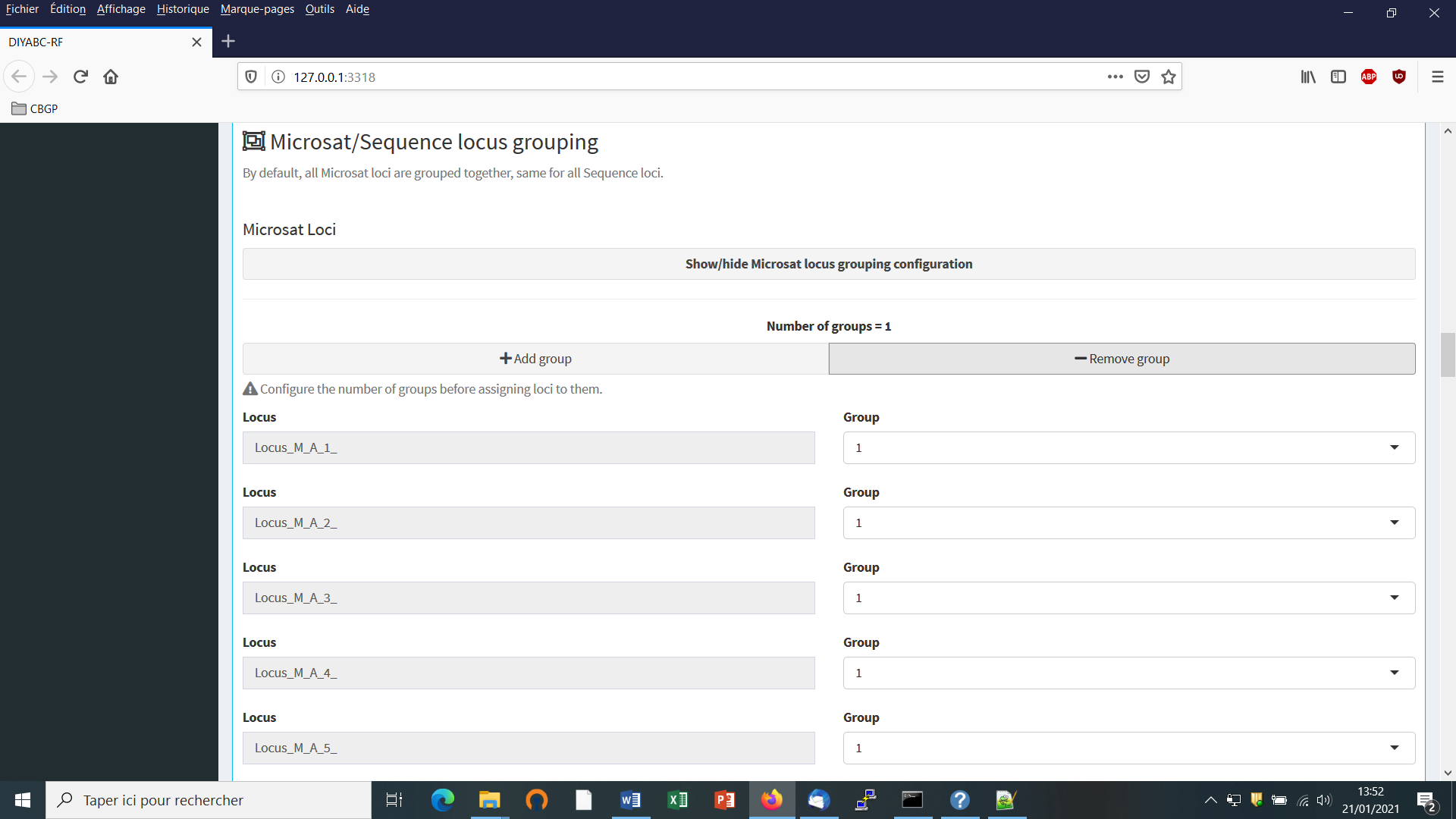

For microsatellite markers: Informing the genetic model can be done using the following panels (implemented here from a toy example including microsatellites + DNA sequences named TOY_EXAMPLE2_microsatellites_DNAsequence_two_pops_ancient_admixture; see section 10. “TOY EXAMPLES” for details about this toy example).

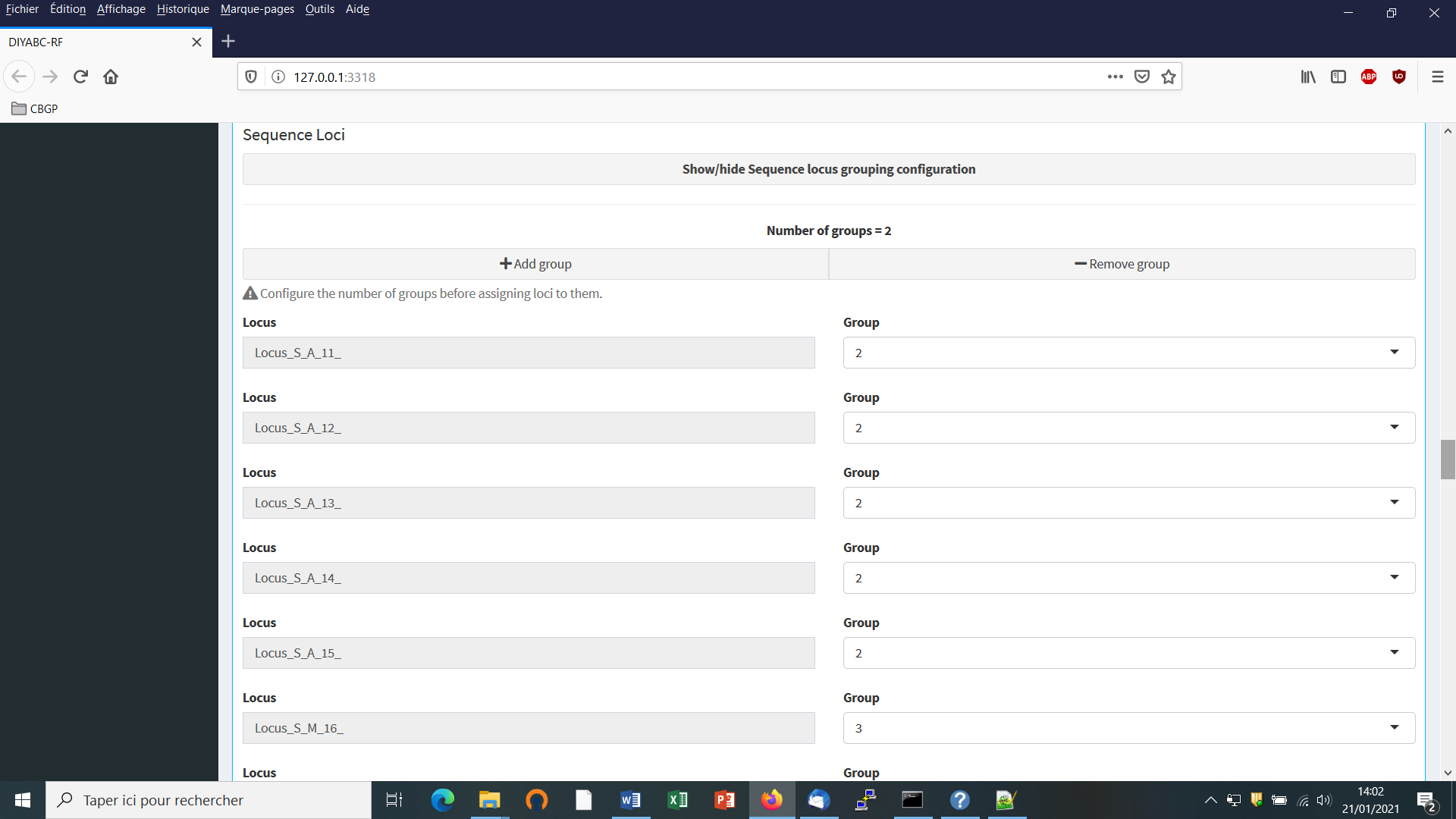

- First: define the number of groups of markers (a group been defined by different mutational modalities). By default, all microsatellite loci are grouped together, same for all DNA sequence loci. If you want to define more than a single group then configure the number of groups (cf button “Add group”) and then assign each locus to one of those groups using the Group arrow on the right.

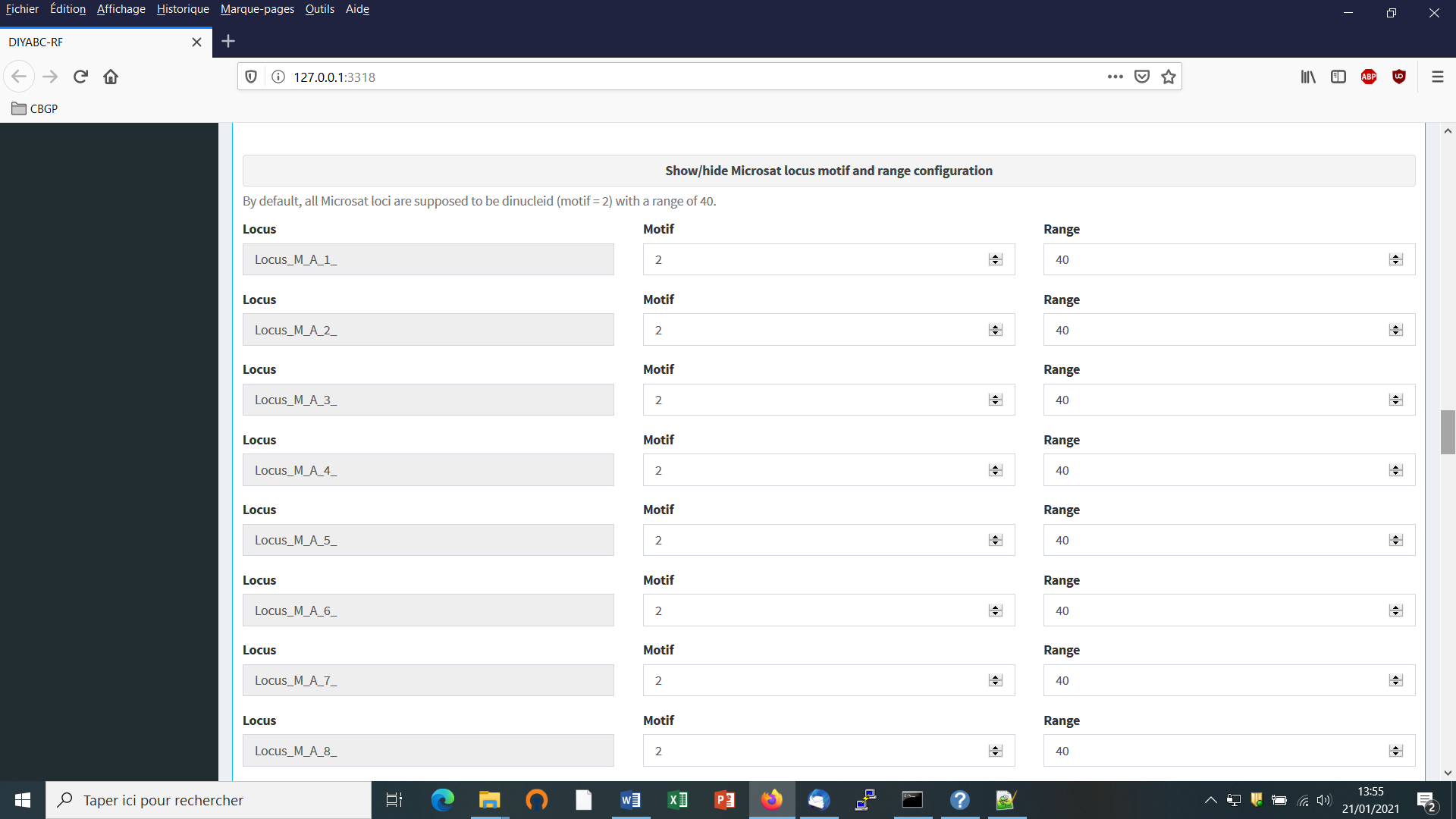

- Second: define the motif size and continuous range of motif variation. By default, all microsatellite loci are supposed to be dinucleotidic (motif = 2) with a range of 40.

It is worth stressing that the values for motif size and allelic range are just default values and do not necessarily correspond to the actual microsatellite observed dataset. The user who knows the real values for its data is required to set the correct values at this stage. If the range is too short to include all values observed in the analyzed dataset, a message appears in a box asking to enlarge the corresponding allelic range. Note that the allelic range is measured in number of motifs, so that a range of 40 for a motif length of 2 bp means that the difference between the smallest and the longest alleles should not exceed 80 bp. It is worth stressing that the indicated allelic range (expressed in number of continuous allelic states) corresponds to a potential range which is usually larger than the range observed from the analyzed dataset (cf. all possible allelic states have usually not been sampled). In practice it is difficult to assess the actual microsatellite constraints on the allelic range; to do that one needs allelic data from several distantly related populations/sub-species as well as related species which is rarely the case(see Pollack et al. 1998; Estoup et al. 2002). We achieved a meta-analysis from numerous primer notes documenting the microsatellite allelic ranges of many (i.e. > 100) different species (and related species). We used the corrective statistical treatment on such data proposed by (Pollack et al. 1998). Our results pointed to a mean microsatellite allelic range of 40 continuous states (hence the default allelic range value of 40 mentioned in the program). We also found, however, that range values greatly varied among species and among loci within species (unpublished results). We therefore recommend the following pragmatic choice when considering the allelic range of your analyzed microsatellite dataset: (i) if the difference in number of motif of your locus is < 40 motifs in the analyzed dataset then leave the default allelic range value of 40. (ii) if the difference in number of motif of your locus is >40 motifs in your dataset then take Max_allele_size $-$ Min_allele_size)/motif size + say 10 additional motifs to re-define the allelic range of the locus in the corresponding DIYABC-RF panel (e.g. (200 nu $-$ 100 nu)/2 + 10 = 50 + 10 = 60 as allelic range).

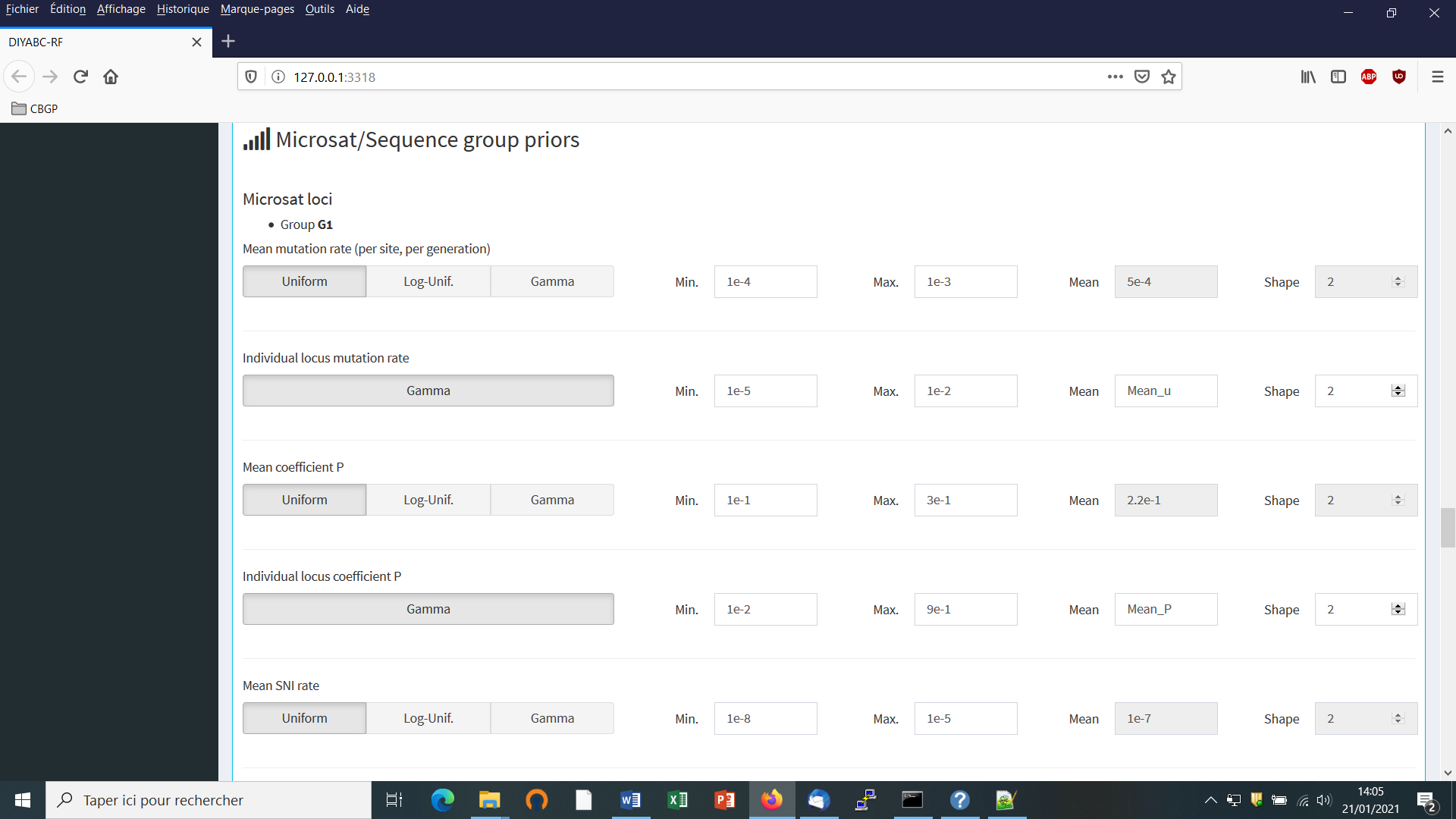

- Third: adjust the prior specificities of mutational modalities as you want using the corresponding buttons in the panel below. Otherwise keep the default values as the latter have been reported for many eukaryote species (e.g. Estoup et al. 2002; but see Fraimout et al. 2017).

For DNA sequence markers: Informing the genetic model can be done using the following panels (implemented here from the same toy example as above).

- We need to define at least one group of DNA sequence loci.

-

Suppose that we want all DNA sequence loci in the same group because we consider that they all have similar mutational modalities. We then put “2” in the button “Group” for all loci (Group 1 is for the previous microsatellite markers). Otherwise you can associate some loci to additional groups (after clicking on the button “Add group”) if you consider that such loci have different mutational modalities (for instance here Locus_S_M_16_ corresponding to a sequence of mitochondrial DNA).

-

Adjust the prior specificities of mutational modalities (for the different DNA sequence groups you have defined previously) using the corresponding buttons in the panel below. Otherwise keep the default values as the latter have been reported for many eukaryote species (e.g. Cornuet et al. 2010).

The default values are indicated in the panel. Note that the default mean mutation rate is not suited to mitochondrial DNA which generally evolves at a faster rate than nuclear DNA (Haag-Liautard et al., 2008). So we set its value to 10-8. For all other mutation model parameters, one can just keep the default values. See section 2.4.2 “DNA sequence loci” for details about the mutation models proposed for DNA sequences.

- Before launching a run to simulate the training set, do not forget to validate your microsatellite and/or DNA settings by clicking on the blue-color button “Validate”. Indicate the number of simulations in the corresponding button (here 30000) and click on the button “Simulate” to launch the simulation of the training set.

5.6.2 Step 5: Summary statistics

In the present version of the program, all available summary statistics are computed as mentioned in the interface below the “Summary statistics” item (see previous panel-copy) and as detailed when clicking on the button “?” on the right:

For microsatellite loci

The following set of summary statistics has been implemented.

- Single sample statistics:

1. mean number of alleles across loci (NAL)

2. mean gene diversity across loci (HET)

3. mean allele size variance across loci (VAR)

3. mean M index across loci (MGW)

- Two sample statistics:

1. mean number of alleles across loci (two samples) (N2P)

2. mean gene diversity across loci (two samples) (H2P)

3. mean allele size variance across loci (two samples) (V2P)

${3.F}_{ST}$ between two samples (FST)

4. mean index of classification (two samples) (LIK)

5. shared allele distance between two samples (DAS)

6. distance between two samples (DM2)

- Three sample statistics:

1. Maximum likelihood coefficient of admixture (AML)

For DNA sequence loci

The following set of summary statistics has been implemented.

- Single sample statistics:

1. number of distinct haplotypes (NHA)

2. number of segregating sites (NSS)

3. mean pairwise difference (MPD)

4. variance of the number of pairwise differences (VPD)

5. Tajima’s D statistics (DTA)

6. Number of private segregating sites (PSS)

7. Mean of the numbers of the rarest nucleotide at segregating sites (MNS)

8. Variance of the numbers of the rarest nucleotide at segregating sites (VNS)

- Two sample statistics:

1. number of distinct haplotypes in the pooled sample (NH2)

2. number of segregating sites in the pooled sample (NS2)

3. mean of within sample pairwise differences (MP2)

4. mean of between sample pairwise differences (MPB)

${5.F}_{ST}$ between two samples (HST)

-

Three sample statistics:

- Maximum likelihood coefficient of admixture (SML)

5.7 How to work from an “Existing project” (for any type of markers)

Working from an existing project is particularly appealing when one wants to:

(i) objective 1: simply add more simulations in the training set of the reftableRF.bin of an existing project without changing anything in the headerRF.txt.

(ii) objective 2: use the headerRF.txt content of an existing project as a base to generate a new headerRF.txt file including different changes for instance in the scenarios formulated, in the definition of priors, the number of loci to simulate…

Let’s consider that one wants to work from the IndSeq_demonstration_project previously generated (and including a training set with 12000 simulation records) as described in the above section 5.4.

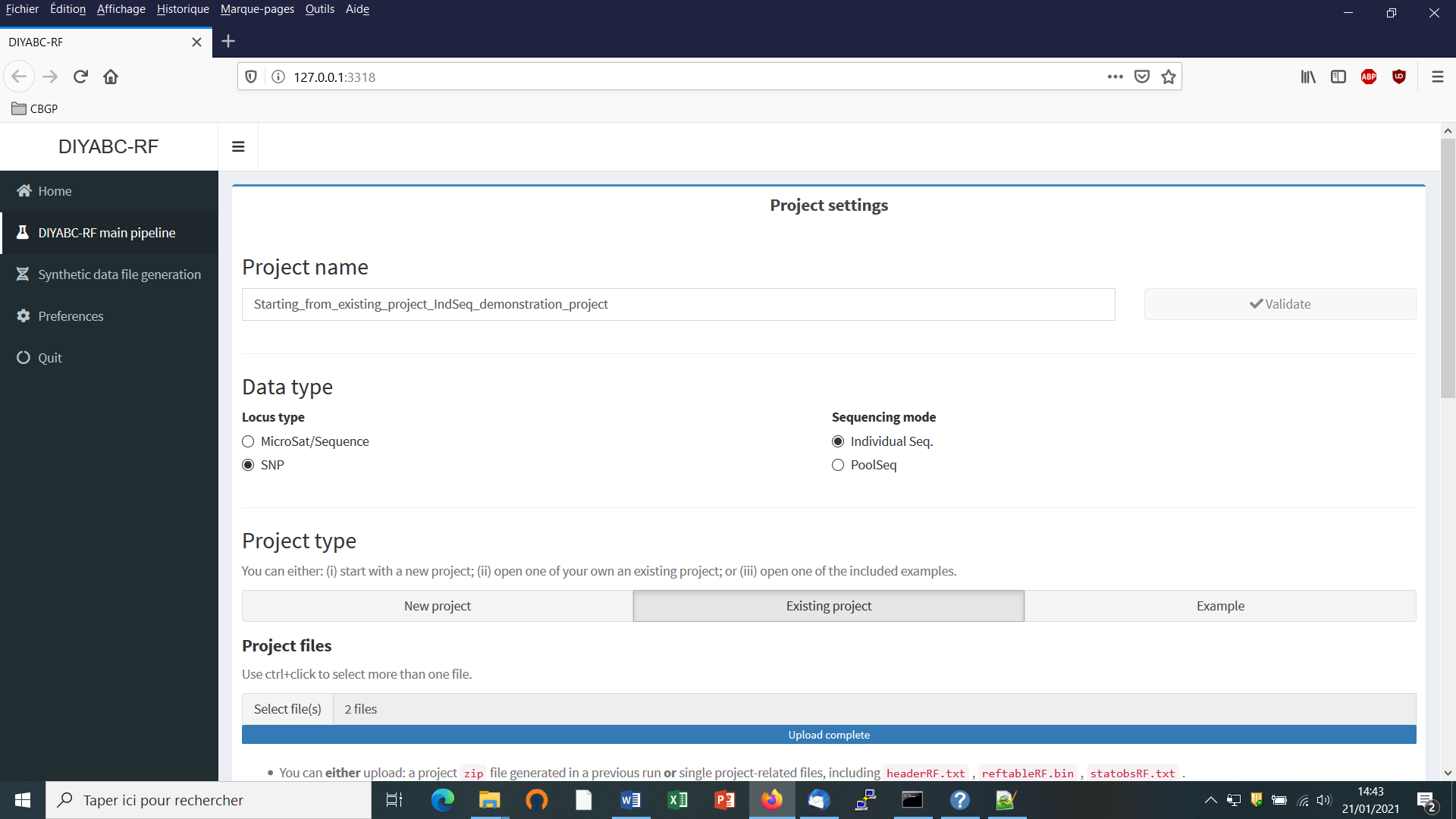

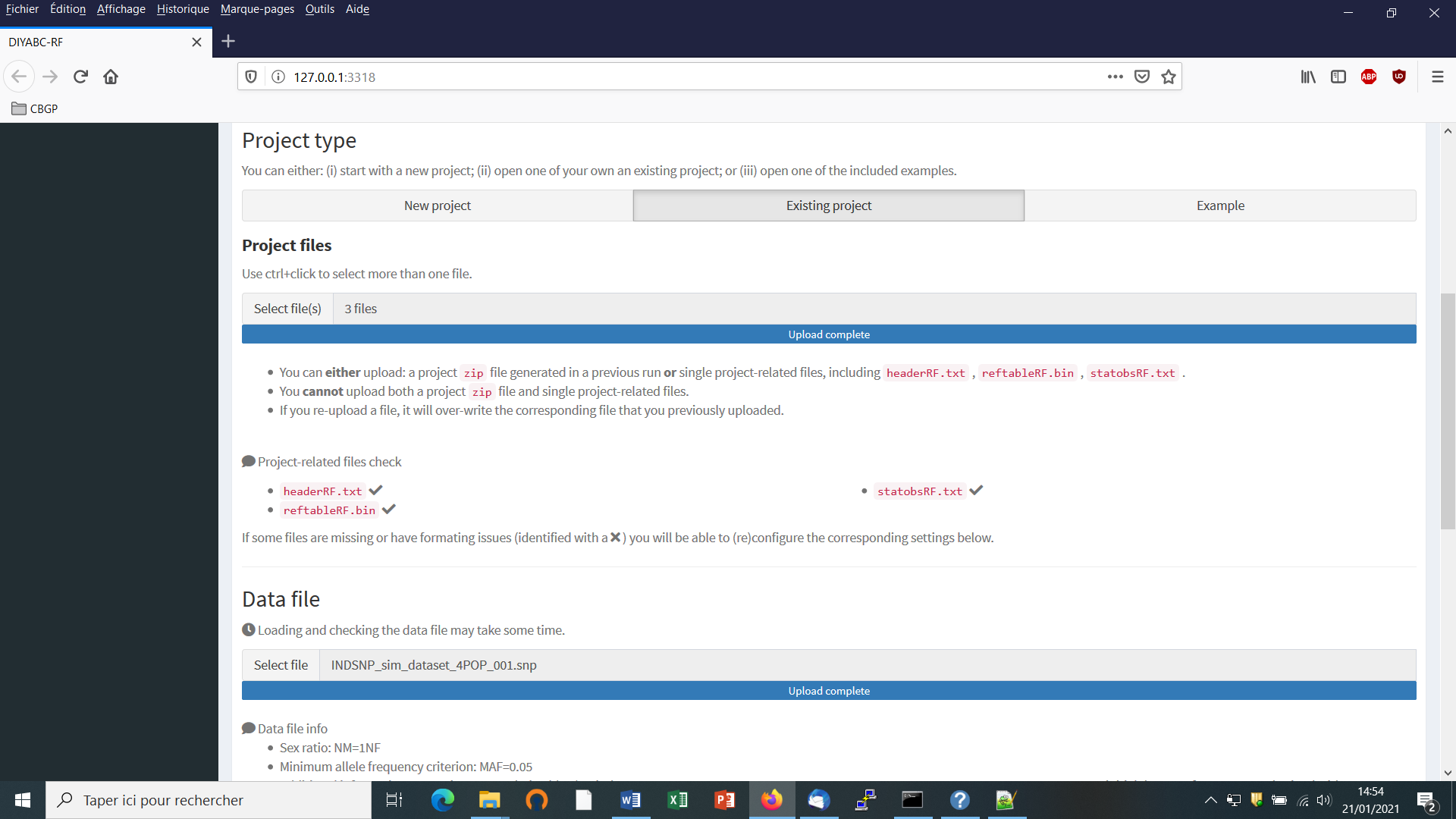

- Click on the “Start” button in the middle of the Home panel. The following panel appears.

-